Wikipedia is facing a surge in conflicts caused by AI agents editing pages, a situation one headline has dubbed the start of the "bot-ocalypse." The issue gained traction on Hacker News, where a post described automated bots overwhelming Wikipedia's moderation and potentially disrupting online knowledge bases. The incident underscores growing tension between AI automation and human oversight on collaborative platforms.

This article was inspired by "Wikipedia's AI agent row likely just the beginning of the bot-ocalypse" from Hacker News.


The Wikipedia AI Agent Incident

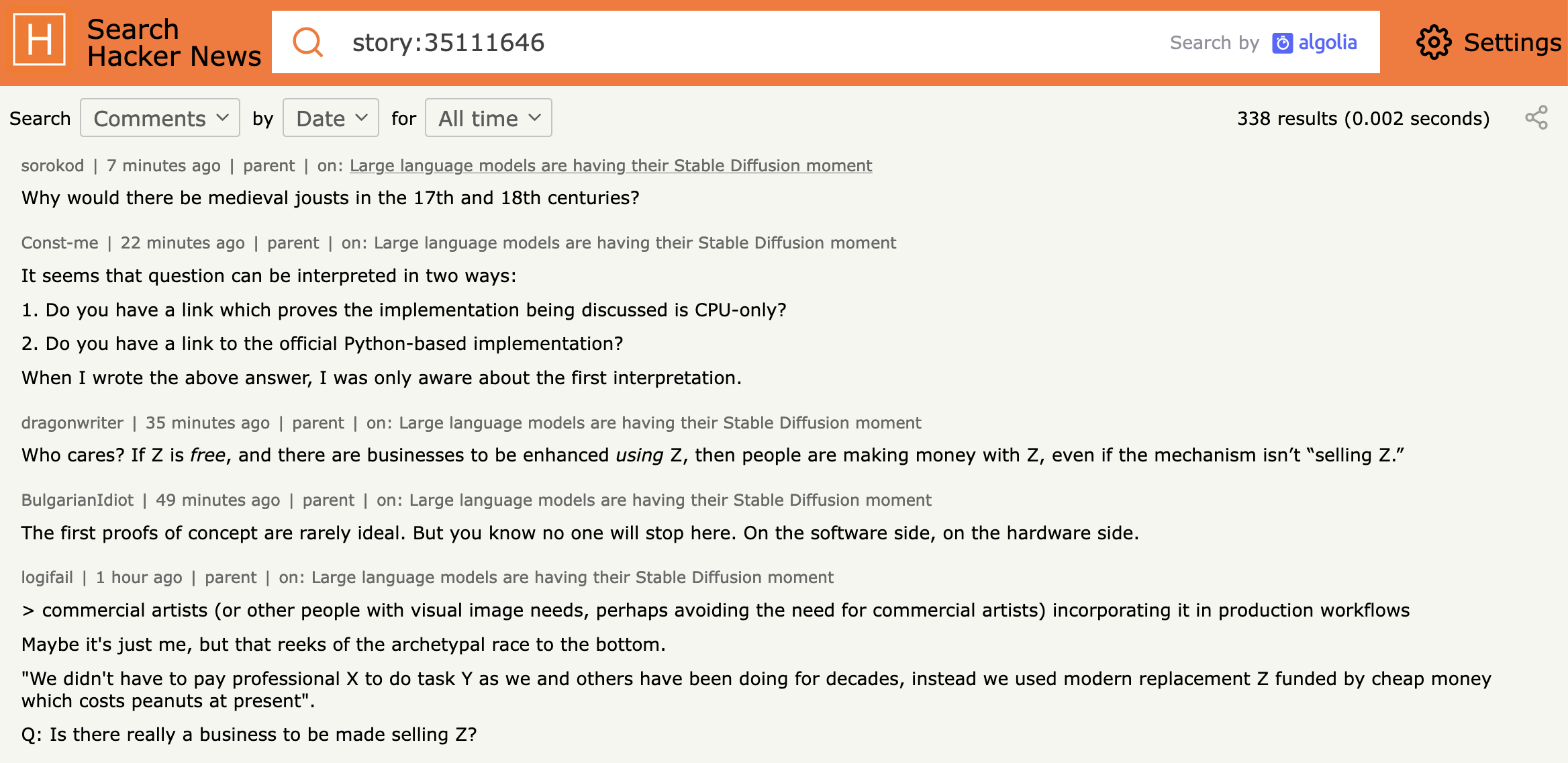

AI agents designed for automated editing have clashed with Wikipedia's community guidelines, leading to edit wars and content disputes. The Hacker News post drew 48 points and 50 comments, reflecting widespread concern. One example involved bots adding inaccurate information, which moderators struggled to revert because of the bots' speed and volume.

Bottom line: AI agents are generating edits at a scale that overwhelms human reviewers, with some bots processing changes in seconds compared to manual edits that take minutes.
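The speed gap described above suggests one simple heuristic a reviewer tool could apply: flag accounts whose edits arrive only seconds apart. The following is a minimal sketch of that idea, not Wikipedia's actual tooling. The function name `flag_rapid_editors`, the 10-second threshold, the minimum edit count, and the sample data are all assumptions chosen for illustration.

```python
from collections import defaultdict
from statistics import median


def flag_rapid_editors(edits, max_median_gap_s=10.0, min_edits=5):
    """Return {editor: median_gap_seconds} for editors who edit suspiciously fast.

    `edits` is an iterable of (editor_name, unix_timestamp_seconds) pairs.
    Both thresholds are illustrative assumptions, not Wikipedia policy.
    """
    # Group timestamps by editor.
    timestamps_by_editor = defaultdict(list)
    for editor, timestamp in edits:
        timestamps_by_editor[editor].append(timestamp)

    flagged = {}
    for editor, stamps in timestamps_by_editor.items():
        # Too few edits to say anything meaningful about pace.
        if len(stamps) < min_edits:
            continue
        stamps.sort()
        # Gaps between consecutive edits, in seconds.
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        typical_gap = median(gaps)
        if typical_gap <= max_median_gap_s:
            flagged[editor] = typical_gap
    return flagged


# Hypothetical sample: one account editing every 3 seconds, one every few minutes.
sample = [("agent_a", t) for t in (0, 3, 6, 9, 12, 15)]
sample += [("human_b", t) for t in (0, 240, 600, 900, 1500)]

print(flag_rapid_editors(sample))
```

The median is used rather than the mean so that a single long pause does not hide an otherwise rapid burst. A real system would likely combine pace with other signals, since fast editing alone does not prove an edit is automated or harmful.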

What the HN Community Says

The Hacker News discussion revealed mixed reactions, with users pointing to specific risks. Comments highlighted potential misinformation from unchecked AI edits, drawing parallels to past incidents like the 2023 ChatGPT Wikipedia bans. Key feedback included:

- Concerns over AI's lack of accountability, as bots operate without clear ownership.

- Suggestions for better verification tools, noting that current systems fail against rapid bot activity.

- Optimism about AI's role in scaling edits, but only if paired with human oversight.

This feedback fits a broader pattern: AI-related posts on HN tend to draw high engagement, and much of the discussion centers on ethical challenges.

Implications for AI and Online Platforms

Such incidents could accelerate stricter rules for AI bots on platforms like Wikipedia, which relies on volunteer moderators. Wikipedia's policies already limit bot edits, but advances in AI have made bots more sophisticated, which may lead to more conflicts. Compared to traditional spam, AI bots are 10-20 times faster at generating content, according to community estimates in the thread.

Bottom line: This event highlights the need for robust AI governance to prevent automated systems from undermining trusted information sources.

Technical context

AI agents often use large language models (LLMs) with billions of parameters to parse and edit content. Unlike simple rule-based scripts, these agents generate text with models trained on large corpora, which makes their output harder to identify as automated.

The growing prevalence of AI agents signals a shift toward more automated online interactions, with experts predicting similar disruptions on platforms like Reddit and other social media. This trend, echoed in the HN discussion, could drive demand for standardized AI behavior protocols to maintain digital integrity.
