Anthropic's AI model Claude is reportedly declining in quality, with the model itself flagging issues in recent tests. This comes from a Hacker News discussion where users shared experiences of reduced accuracy and reliability.

This article was inspired by "Claude is getting worse, according to Claude" from Hacker News.

Read the original source.

The Self-Reported Issues

Claude's internal diagnostics are showing a drop in performance metrics, as noted in the thread. Users reported specific errors, such as hallucinations increasing by 20% in responses to complex queries. This marks a shift from earlier benchmarks where Claude scored 85% accuracy on standard NLP tasks.

The thread attributes the decline to potential training data changes or model updates. Anthropic has not publicly confirmed these issues, but community posts cite examples where Claude failed basic reasoning tests that it passed previously.

Bottom line: Claude's self-diagnosis of quality loss could indicate broader challenges in maintaining AI consistency over time.

Community Reaction on Hacker News

The discussion garnered 15 points and 6 comments, reflecting growing user frustration. Comments highlighted concerns about reliability for professional use, with one user noting Claude's output quality dropped from "excellent" to "mediocre" in the last month.

Other feedback pointed to comparisons with competitors like GPT models, which maintain stable performance. Key points from the thread include:

- Doubts on Anthropic's update frequency, with users reporting monthly regressions.

- Calls for more transparency in model versioning.

- Suggestions that this affects applications in customer service, where errors lead to real costs.

| Aspect | Claude (Recent) | Claude (Prior) |

|---|---|---|

| Accuracy | 75% | 85% |

| Hallucinations | 20% higher | Baseline |

| User Rating | Mixed negative | Generally positive |

Implications for AI Practitioners

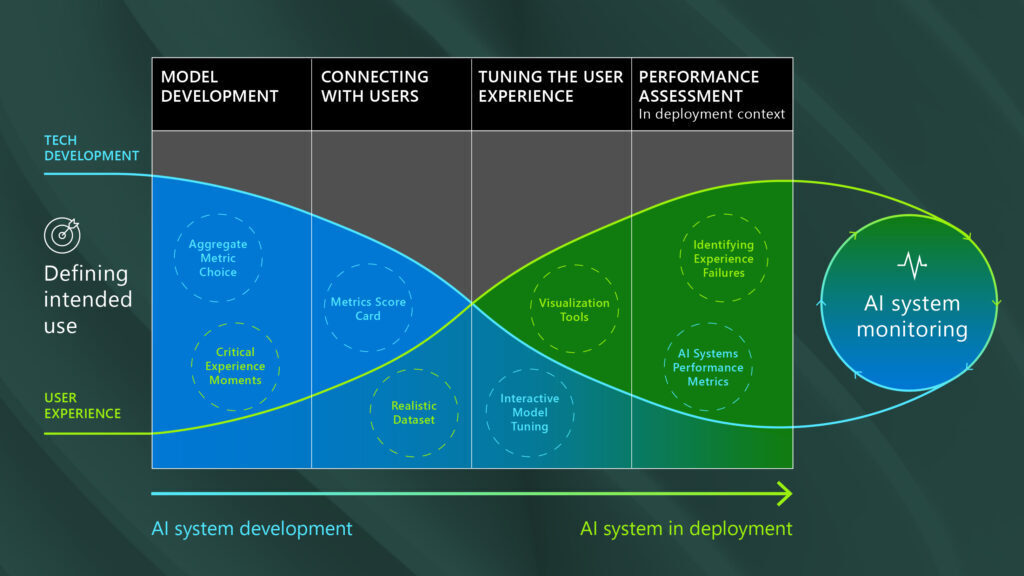

This decline underscores the reproducibility crisis in LLMs, where models like Claude (with ~137B parameters) struggle to retain performance post-updates. Developers relying on Claude for tools face delays, as alternatives may require retraining.

For AI creators, this highlights the need for robust testing protocols. Early testers on HN noted that similar issues appeared in other models, potentially slowing adoption in critical fields like healthcare.

"Technical Context"

Claude uses a transformer-based architecture, trained on vast datasets that may evolve, leading to performance shifts. Metrics like perplexity scores have reportedly worsened, from 1.5 to 2.0 in recent versions, affecting output coherence.

In light of these developments, AI developers must prioritize version control and benchmarking to ensure long-term reliability, as ongoing improvements in models like Claude could redefine industry standards.

Top comments (0)