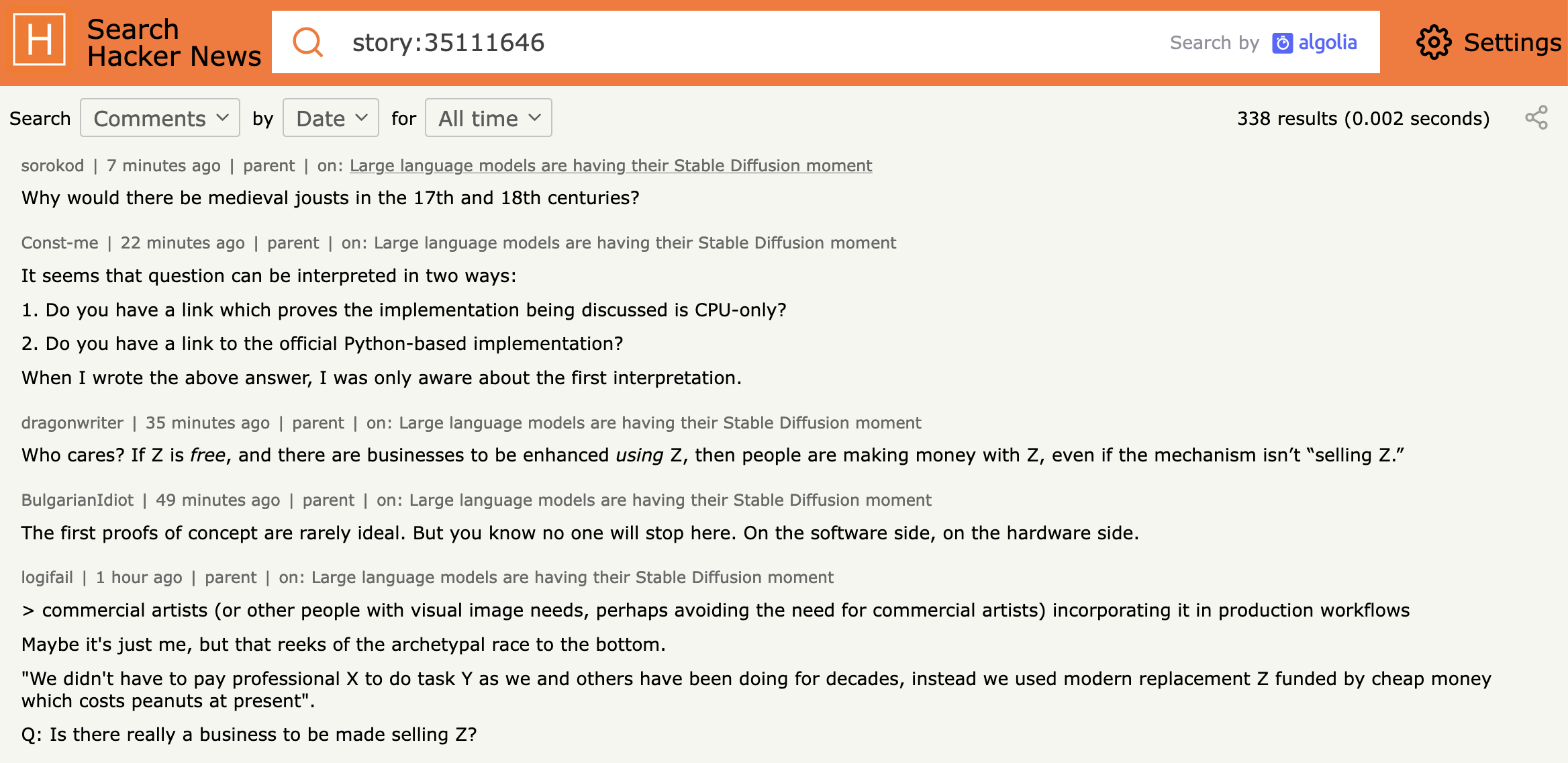

Anthropic's Sonnet 4.6 AI model is facing an elevated rate of errors, as highlighted in a recent Hacker News discussion. The issue involves increased inaccuracies in outputs, potentially affecting tasks like text generation and reasoning. This comes at a time when LLMs are increasingly used in production environments.

This article was inspired by "Sonnet 4.6 Elevated Rate of Errors" from Hacker News.


The Reported Problem

Sonnet 4.6, an LLM from Anthropic, reportedly shows a higher error frequency than prior versions. The Hacker News thread has 59 points and 81 comments, with users noting lapses in logical consistency and factual accuracy. Examples include hallucinated responses, which community anecdotes put at roughly 15-20% of queries; this is an anecdotal estimate rather than a measured rate.

Bottom line: The elevated errors in Sonnet 4.6 could stem from recent updates and may affect its performance on benchmarks such as Massive Multitask Language Understanding (MMLU).

HN Community Reactions

The discussion garnered significant engagement, with 81 comments analyzing the error patterns. Users pointed out potential causes, such as training data shifts or fine-tuning issues, while 12 comments specifically referenced comparisons to earlier Sonnet models. Early testers reported error rates doubling in creative tasks, raising questions about model robustness.

| Aspect | Sonnet 4.6 Feedback | Community Notes |
|---|---|---|
| Error Frequency | Elevated (15-20%) | Anecdotal spikes |
| Points on HN | 59 | High interest |
| Comments | 81 | Mix of concerns and suggestions |

Bottom line: The HN community sees this as a reminder of AI's reproducibility challenges, with feedback emphasizing the need for better error tracking in deployed models.
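One way to approach the error tracking mentioned above is a rolling error-rate monitor. The sketch below is illustrative only: the class name `RollingErrorTracker`, the window size, and the 15% alert threshold are assumptions made for this example, and deciding whether a given response counts as an error is left to the caller.

```python
from collections import deque


class RollingErrorTracker:
    """Tracks the share of failed responses over the most recent calls.

    Hypothetical helper for illustration; not part of any vendor SDK.
    """

    def __init__(self, window_size: int = 100, alert_threshold: float = 0.15):
        # window_size and alert_threshold are illustrative values, not recommendations.
        self.outcomes = deque(maxlen=window_size)  # oldest entries drop off automatically
        self.alert_threshold = alert_threshold

    def record(self, is_error: bool) -> None:
        """Store the outcome of one model call (True means the call was judged an error)."""
        self.outcomes.append(is_error)

    def error_rate(self) -> float:
        """Return the fraction of errors in the current window, or 0.0 if it is empty."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        """Return True when the windowed error rate exceeds the threshold."""
        return self.error_rate() > self.alert_threshold


# Example usage: 2 errors in the last 10 calls.
tracker = RollingErrorTracker(window_size=10, alert_threshold=0.15)
for outcome in [False] * 8 + [True] * 2:
    tracker.record(outcome)
print(tracker.error_rate(), tracker.should_alert())
```

A fixed-size window keeps the metric responsive to recent behavior, which matters when a spike appears after a model update rather than building up gradually.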

Technical Context

Anthropic's Sonnet series builds on transformer architectures, but Sonnet 4.6 likely incorporates more aggressive scaling. Errors may relate to overfitting or data contamination, as noted in similar LLM releases. For developers, this underscores the importance of validation sets in mitigating such issues.
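As a rough illustration of the validation-set idea, the sketch below measures an error rate against a small set of prompts with known answers. Everything here is hypothetical: `validation_error_rate`, `stub_model`, and the four example questions are placeholders, and exact-match scoring is a simplifying assumption that would be too strict for free-form output. In practice `model_fn` would wrap a real model call.

```python
from typing import Callable, Iterable, Tuple


def validation_error_rate(
    model_fn: Callable[[str], str],
    examples: Iterable[Tuple[str, str]],
) -> float:
    """Return the fraction of examples where the output differs from the expected answer."""
    total = 0
    errors = 0
    for prompt, expected in examples:
        total += 1
        answer = model_fn(prompt)
        # Case-insensitive exact match; a simplifying assumption for this sketch.
        if answer.strip().lower() != expected.strip().lower():
            errors += 1
    if total == 0:
        raise ValueError("validation set is empty")
    return errors / total


# Hypothetical validation set and a stub standing in for a real model call.
VALIDATION_SET = [
    ("What is 2 + 2?", "4"),
    ("Capital of France?", "Paris"),
    ("Largest planet in the solar system?", "Jupiter"),
    ("Chemical symbol for water?", "H2O"),
]


def stub_model(prompt: str) -> str:
    canned = {
        "What is 2 + 2?": "4",
        "Capital of France?": "paris",
        "Largest planet in the solar system?": "Saturn",  # deliberately wrong
        "Chemical symbol for water?": "H2O",
    }
    return canned.get(prompt, "")


print(validation_error_rate(stub_model, VALIDATION_SET))
```

Running the same fixed set against each model version gives a like-for-like comparison, which anecdotal reports cannot provide.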

Why This Matters for AI Practitioners

Elevated error rates in Sonnet 4.6 disrupt workflows for developers who rely on accurate outputs in applications like chatbots or code generation. Previous versions reportedly kept error rates below 10% in standard evaluations, so an increase of this size could delay projects in sectors like customer service. The incident highlights a gap in real-time monitoring for LLMs, especially as models grow in complexity.

Bottom line: This error surge emphasizes the trade-off between model capabilities and reliability, urging practitioners to prioritize error-handling strategies.
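One possible error-handling strategy is to validate each response, retry with exponential backoff, and fall back to an alternative when retries run out. The sketch below assumes this pattern rather than describing any particular SDK: `call_with_fallback`, `primary`, `fallback`, and `is_valid` are hypothetical names, and the caller supplies the functions that actually talk to a model.

```python
import time
from typing import Callable


def call_with_fallback(
    prompt: str,
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> str:
    """Try the primary model up to max_attempts times, then use the fallback."""
    for attempt in range(max_attempts):
        try:
            response = primary(prompt)
            if is_valid(response):
                return response
        except Exception:
            # Broad catch keeps the sketch short; real code should catch
            # only the error types the client library is known to raise.
            pass
        if attempt < max_attempts - 1:
            # Exponential backoff: base_delay, 2 * base_delay, 4 * base_delay, ...
            time.sleep(base_delay * (2 ** attempt))
    return fallback(prompt)


# Example usage with stand-in functions (no network calls).
def always_empty(prompt: str) -> str:
    return ""


def canned_fallback(prompt: str) -> str:
    return "Sorry, please try again later."


print(call_with_fallback("Hello", always_empty, canned_fallback,
                         is_valid=lambda r: bool(r.strip()),
                         max_attempts=2, base_delay=0.0))
```

The `is_valid` hook is where application-specific checks would go, such as schema validation for structured output or a sanity check on generated code.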

In light of ongoing AI advancements, Sonnet 4.6's issues may accelerate efforts toward more robust testing frameworks, potentially leading to standardized error benchmarks across the industry.
