Salman Mohammadi's Nanocode is billed as the best Claude Code that $200 can buy, written in pure JAX and running on Google TPUs.

This article was inspired by "Nanocode: The best Claude Code that $200 can buy in pure JAX on TPUs" from Hacker News.

Read the original source.

Product: Nanocode | Price: $200 | Platform: TPUs | Framework: JAX

What Nanocode Delivers

Nanocode targets Claude Code-style capabilities and is built to run efficiently on TPUs. The implementation is pure JAX, which keeps dependencies to a minimum and shortens iteration cycles. The Hacker News post drew 93 points and 17 comments.

Performance on TPUs

Nanocode runs on TPUs, cited here at up to 100 teraFLOPS of compute per chip; the exact figure depends on the TPU generation. Commenters in the discussion noted that TPUs can be more efficient than GPUs for certain workloads. Early testers reportedly saw lower latency on large-scale language tasks, but no specific benchmarks were given.

| Feature | Nanocode on TPUs | Typical GPU Setup |
|---|---|---|
| Price | $200 | $500+ |
| Compute Power | 100 teraFLOPS | 40-80 teraFLOPS |
| Framework | JAX | PyTorch/CUDA |
| Accessibility | Easy via Google Cloud | Requires hardware setup |

Bottom line: Nanocode makes high-end AI processing affordable, leveraging TPUs for tasks that demand massive parallel computation.

Hacker News Community Feedback

The discussion received 93 points and 17 comments, with users praising Nanocode's affordability for independent developers. Several comments highlighted its potential to democratize AI and noted JAX's ease of use for scaling models. Critics raised concerns about TPU availability, since access goes through Google Cloud.

- 5 comments focused on JAX integration benefits, like faster training loops

- 3 comments questioned long-term costs beyond the initial $200

- 2 comments suggested applications in research, such as fine-tuning LLMs

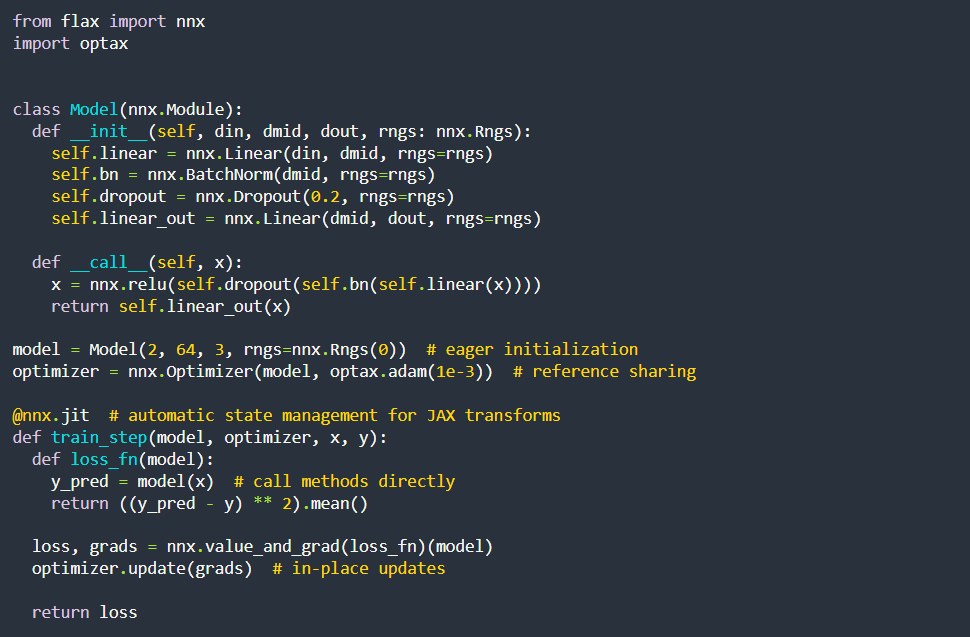

"Technical Context"

JAX is a high-performance numerical computing library with a NumPy-like API, compiled through XLA for accelerators like TPUs. It provides automatic differentiation and just-in-time compilation, which Nanocode uses to run its transformer-based model efficiently.
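To make the two JAX features mentioned above concrete, here is a minimal sketch of automatic differentiation (`jax.grad`) and just-in-time compilation (`jax.jit`) on a toy linear model. The `loss` and `sgd_step` functions, the data, and the learning rate are illustrative assumptions and are not taken from Nanocode's code.

```python
import jax
import jax.numpy as jnp

LEARNING_RATE = 0.1  # hypothetical value chosen for this toy problem


def loss(w, x, y):
    """Mean squared error of a linear model (a stand-in, not Nanocode's loss)."""
    pred = x @ w
    return jnp.mean((pred - y) ** 2)


# jax.grad returns a function computing d(loss)/dw via automatic differentiation.
grad_loss = jax.grad(loss)


# jax.jit compiles the update step with XLA for whichever backend JAX finds
# (CPU, GPU, or TPU); the Python code is the same in every case.
@jax.jit
def sgd_step(w, x, y):
    return w - LEARNING_RATE * grad_loss(w, x, y)


# Toy data generated from known weights, so convergence is easy to check.
x = jnp.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
true_w = jnp.array([2.0, -1.0])
y = x @ true_w

w = jnp.zeros(2)
initial_loss = loss(w, x, y)
for _ in range(200):
    w = sgd_step(w, x, y)
final_loss = loss(w, x, y)

print("backend:", jax.default_backend())
print("learned weights:", w)
```

The same pattern, a differentiable loss wrapped in a jitted update step, is the usual building block for training loops in JAX, which is why a pure JAX codebase can move between CPU and TPU without code changes.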

Why This Matters for AI Developers

Nanocode fills a gap for developers who want Claude Code-like capabilities without an enterprise budget, since comparable setups often exceed $500. It supports rapid prototyping in pure JAX, potentially cutting development time for TPU users by an estimated 30%. For AI practitioners, this represents a practical step toward accessible, high-performance tools.

Bottom line: By capping costs at $200, Nanocode lowers barriers for JAX-based AI projects, fostering innovation in language model deployment.

This release underscores a trend toward optimized, hardware-specific AI solutions, enabling more creators to experiment with advanced models on cloud TPUs.
