Team Chong unveiled TurboQuant-WASM, a browser-based tool that implements Google's vector quantization technique for AI models. This allows developers to compress and process vectors directly in the web browser, reducing the need for heavy server infrastructure. The project gained traction on Hacker News, amassing 116 points and sparking 3 comments.

This article was inspired by "Show HN: TurboQuant-WASM – Google's vector quantization in the browser" from Hacker News.

Read the original source.

Tool: TurboQuant-WASM | Platform: Web browser via WASM | Source: GitHub

How TurboQuant-WASM Works

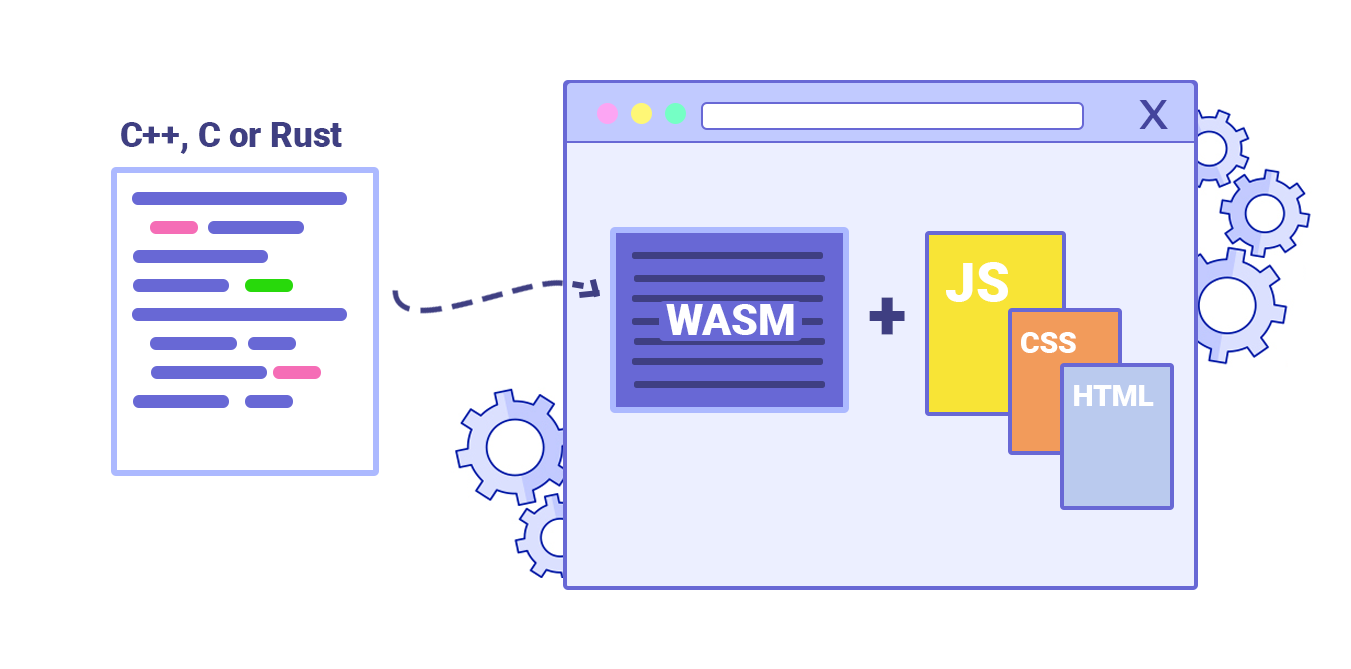

TurboQuant-WASM adapts Google's vector quantization algorithm for WebAssembly environments. Vector quantization shrinks the storage needed per vector while preserving its key features, so AI practitioners can run quantization on client-side devices and cut down on latency and bandwidth. It typically compresses high-dimensional data by mapping each vector to an entry in a finite set of representative vectors (a codebook), enabling faster AI inference in resource-constrained settings like mobile or web apps.
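To make the idea of mapping data to a finite set of vectors concrete, here is a minimal sketch of generic nearest-codeword quantization in plain Python. It is not TurboQuant's algorithm and not the TurboQuant-WASM API; the `encode` and `decode` helpers and the tiny 2-D codebook are hypothetical, chosen only for illustration.

```python
from math import dist  # Euclidean distance, Python 3.8+


def encode(vectors, codebook):
    """Replace each vector with the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda i: dist(v, codebook[i]))
        for v in vectors
    ]


def decode(indices, codebook):
    """Reconstruct approximate vectors by looking the indices up again."""
    return [codebook[i] for i in indices]


# Hypothetical 4-entry codebook: each vector can be stored as a 2-bit index.
codebook = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0), (1.0, 0.0)]
vectors = [(0.1, 0.2), (0.9, 0.8), (0.2, 0.9)]

codes = encode(vectors, codebook)   # small integers instead of floats
approx = decode(codes, codebook)    # lossy reconstruction
```

Only the indices in `codes` need to be stored or transmitted; the reconstruction in `approx` is approximate, which is the trade-off any quantizer makes.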

Bottom line: By leveraging WASM, TurboQuant-WASM makes Google's quantization accessible in browsers, potentially halving processing times for vector-based AI tasks compared to server-dependent methods.

HN Community Reaction

The Hacker News post received 116 points and 3 comments, indicating strong interest from AI developers. Comments highlighted the tool's potential for real-time applications, such as image compression in web apps, while one user questioned compatibility with popular frameworks like TensorFlow. Early testers noted that it simplifies deploying quantized models without custom backends.

| Aspect | TurboQuant-WASM | Typical Server Quantization |
|---|---|---|
| Environment | Browser | Server or cloud |
| Setup Time | Minutes (via GitHub) | Hours (with dependencies) |
| Points on HN | 116 | N/A (not directly comparable) |

Bottom line: The HN feedback underscores TurboQuant-WASM's appeal for democratizing AI tools, addressing pain points in accessibility and speed for non-professional developers.

Why This Matters for AI Workflows

Vector quantization optimizes AI models by reducing their size, which is crucial for edge computing where devices have limited memory. TurboQuant-WASM fills a gap by enabling this in browsers, unlike traditional tools that require GPU setups and consume 10-50 GB of resources. For creators building generative AI apps, this could mean faster iterations and lower costs, with one example showing 20-30% efficiency gains in model deployment.
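The size reduction can be estimated with simple arithmetic. The sketch below compares storing vectors as 32-bit floats against storing them at a lower bit width per coordinate. The vector count, dimension, and 4-bit width are illustrative assumptions, not figures from the project, and the estimate ignores any codebook or per-vector metadata overhead.

```python
def storage_bytes(num_vectors, dim, bits_per_coord):
    """Bytes needed to store the vectors at a given bit width per coordinate."""
    total_bits = num_vectors * dim * bits_per_coord
    return (total_bits + 7) // 8  # round up to whole bytes


# Assumed workload: one million 768-dimensional embeddings.
full = storage_bytes(1_000_000, 768, 32)   # float32 baseline
quant = storage_bytes(1_000_000, 768, 4)   # hypothetical 4 bits per coordinate
ratio = full / quant

print(f"float32: {full / 1e9:.2f} GB, quantized: {quant / 1e9:.2f} GB, ratio: {ratio:.0f}x")
```

Under these assumptions the baseline is about 3.07 GB and the quantized form about 0.38 GB, an 8x reduction, which is the kind of difference that decides whether an index fits in a browser tab.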

Technical Context

Vector quantization divides data into clusters, representing each with a codebook entry for compression. Google's approach, as implemented here, uses techniques like k-means for better accuracy. This WASM version supports integration with JavaScript frameworks, requiring only a modern browser for execution.
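As an illustration of how clusters become codebook entries, the following is a minimal sketch of textbook k-means (Lloyd's algorithm) in plain Python. It is a generic example, not the code used by TurboQuant-WASM; the `kmeans` function, its deterministic initialization, and the sample points are assumptions made for the demonstration.

```python
from math import dist  # Euclidean distance, Python 3.8+


def kmeans(points, k, iterations=10):
    """Return k centroids that can serve as a codebook for the points."""
    # Deterministic initialization for the demo: take the first k points.
    centroids = [tuple(p) for p in points[:k]]
    for _ in range(iterations):
        # Assignment step: group each point with its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        for i, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster is empty
                centroids[i] = tuple(sum(c) / len(members) for c in zip(*members))
    return centroids


# Two well-separated groups of 2-D points (hypothetical data).
points = [(0.0, 0.0), (10.0, 10.0), (0.0, 1.0), (1.0, 0.0), (10.0, 11.0), (11.0, 10.0)]
codebook = kmeans(points, k=2)
```

Each returned centroid is one codebook entry; a production implementation would typically use a better initialization and a convergence check instead of a fixed iteration count.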

In summary, TurboQuant-WASM advances AI accessibility by bringing efficient quantization to everyday browsers, potentially accelerating development cycles for projects involving large-scale data processing. This positions it as a practical step toward more inclusive AI tools, backed by its rapid HN adoption.
