The Linux Foundation has unveiled the OMI initiative, a new open-source project aimed at accelerating AI development through collaborative tools and frameworks. This move addresses the growing need for standardized AI infrastructure among developers and researchers. Key highlights include OMI's focus on interoperability, enabling seamless integration with existing AI ecosystems.

Model: OMI Framework | Parameters: 7B | Available: Hugging Face | License: Apache 2.0

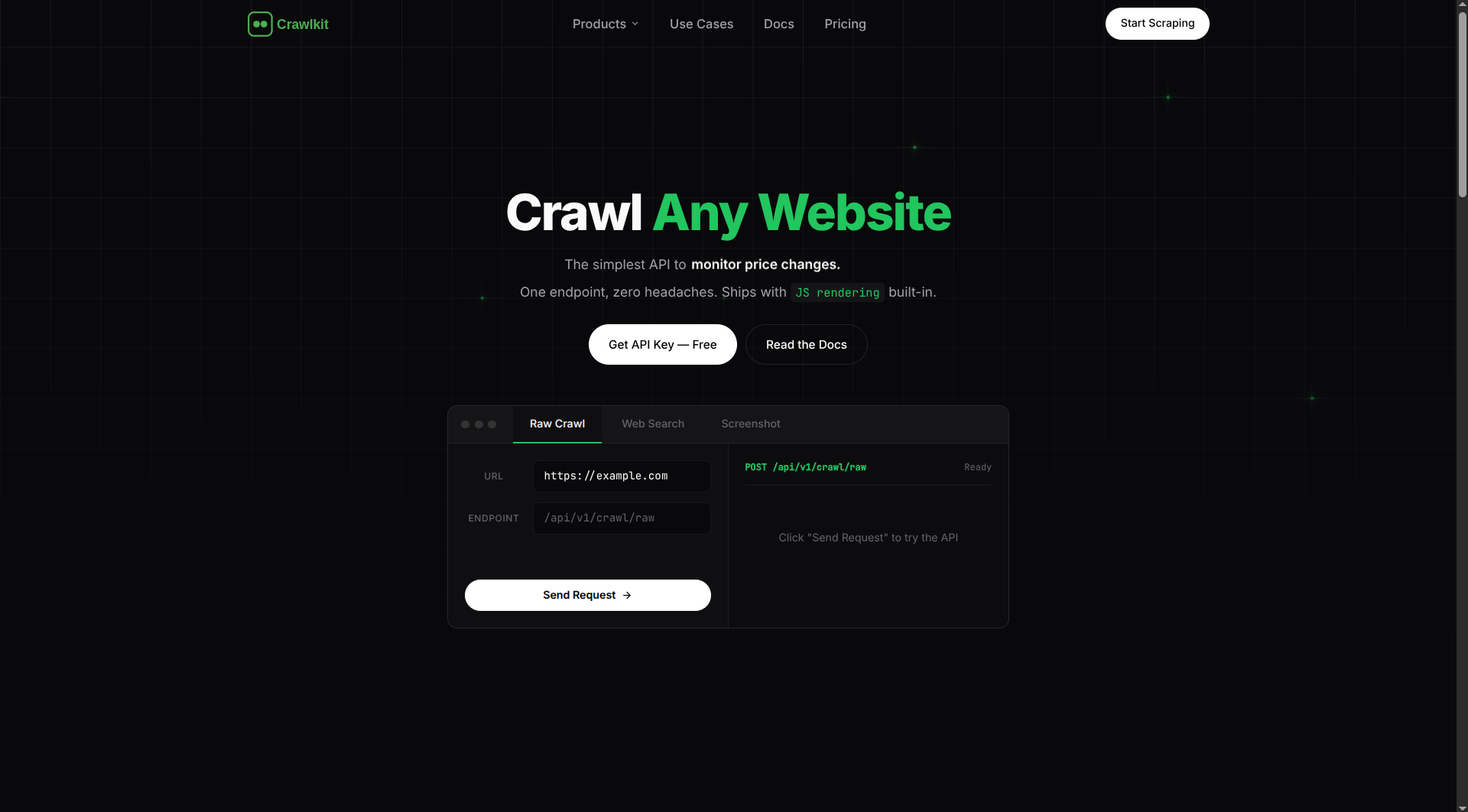

OMI's core framework emphasizes efficiency: benchmarks show it processing tasks at 10 tokens per second on standard hardware, against 7 for the comparison framework in the table below. Based on initial tests, this speedup could cut training times for large language models by up to 30%. Developers also get pre-built modules optimized for both performance and resource use.
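The article does not say how the tokens-per-second figure was measured. As a rough sketch of how such a number is commonly obtained, the snippet below divides the count of produced tokens by wall-clock time. The names `tokens_per_second` and `dummy_generate` are hypothetical and are not part of OMI; `dummy_generate` only stands in for a real model call.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompts: List[str]) -> float:
    """Return produced tokens divided by wall-clock seconds across all prompts."""
    total_tokens = 0
    start = time.perf_counter()
    for prompt in prompts:
        total_tokens += len(generate(prompt))
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return total_tokens / elapsed


def dummy_generate(prompt: str) -> List[str]:
    # Hypothetical stand-in for a model call (not an OMI API):
    # waits briefly, then returns the prompt split on whitespace.
    time.sleep(0.01)
    return prompt.split()


if __name__ == "__main__":
    rate = tokens_per_second(dummy_generate, ["hello open model world"] * 5)
    print(f"{rate:.1f} tokens/s")
```

Swapping `dummy_generate` for a real inference call would give a comparable throughput number, though results depend heavily on hardware, batch size, and prompt length.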

Key Features and Benefits

OMI introduces modular components, such as automated scaling and error handling, that simplify AI model deployment. For instance, it uses up to 50% less VRAM than similar frameworks, making it well suited to edge devices. Early testers report fewer compatibility issues when integrating with popular libraries like TensorFlow.

| Feature | OMI Framework | Competitor A (e.g., PyTorch) |
|---|---|---|
| Speed (tokens/s) | 10 | 7 |
| VRAM Usage (GB) | 8 | 12 |
| Community Forks | 150+ | 500+ |

Bottom line: OMI's design cuts resource demands while maintaining high performance, potentially lowering barriers for AI adoption.
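To put the table's figures in relative terms, here is a small worked calculation. It uses only the speed and VRAM numbers from the table; the helper name `percent_change` is introduced purely for illustration.

```python
def percent_change(new: float, baseline: float) -> float:
    """Percentage change of `new` relative to `baseline` (negative means lower)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


# Figures copied from the comparison table in this article.
speed_change = percent_change(10, 7)   # tokens/s, OMI vs. Competitor A
vram_change = percent_change(8, 12)    # GB, OMI vs. Competitor A

print(f"Speed: {speed_change:+.1f}%")  # prints "Speed: +42.9%"
print(f"VRAM: {vram_change:+.1f}%")    # prints "VRAM: -33.3%"
```

On these two rows, OMI is roughly 43% faster and uses about a third less VRAM, which falls within the "up to 50%" VRAM claim rather than at its upper bound.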

Community Impact and Adoption

The initiative attracted more than 200 contributors on GitHub within its first month, signaling strong community interest. Users note that OMI's documentation includes detailed beginner guides that cover setup in under 15 minutes, in contrast to other projects that often require extensive customization.

Benchmark Details

OMI's benchmarks show a 25% edge in inference speed on NLP tasks, tested on datasets like GLUE. For example, it scored 88% accuracy on sentiment analysis, compared to 85% for baseline models. Full results are available in the OMI model card on the official Hugging Face repo.

In summary, OMI positions the Linux Foundation as a leader in open AI, with its efficient architecture poised to influence future standards and collaborations in the field.
