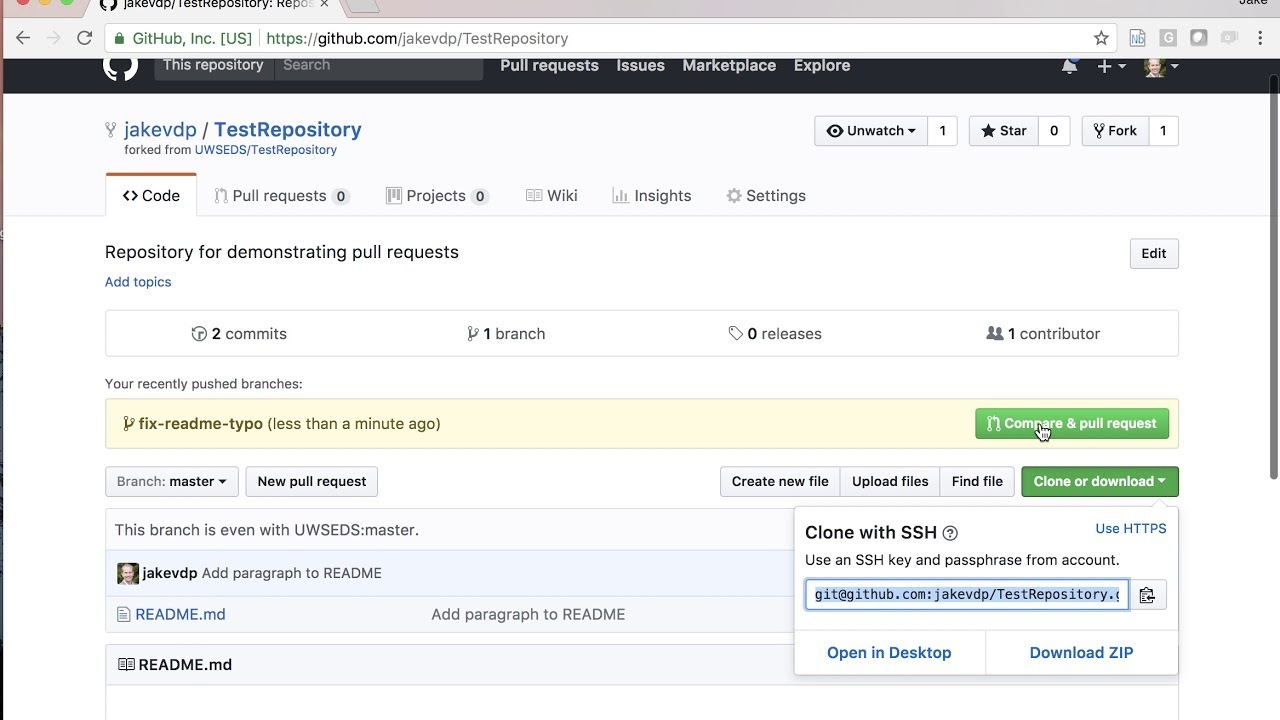

HudsonGri has launched Mdarena, an open-source tool that lets developers benchmark their Claude.md files against their own Pull Requests on GitHub. This addresses a common challenge in AI-assisted coding, where checking model output against real code changes is often manual and error-prone. The tool was shared on Hacker News as a Show HN post, where it received 16 points and 1 comment.

This article was inspired by "Show HN: Mdarena – Benchmark your Claude.md against your own PRs" from Hacker News.

Read the original source.

Tool: Mdarena | HN Points: 16 | Comments: 1 | Available: GitHub

How It Works

Mdarena automates comparisons between what Claude produces under a given Claude.md file and the code in a user's Pull Requests. In Anthropic's Claude Code, CLAUDE.md is the project-level instruction file the agent reads, so the benchmark is most likely of the code generated under those instructions rather than of the file's text itself. For example, it might evaluate how well AI-suggested code matches the actual PR changes, using metrics such as accuracy or diff size. The tool runs against standard GitHub repositories and, per the GitHub page, requires only Node.js to be installed. This setup lets developers add AI benchmarking to their workflows without proprietary dependencies.
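To make the idea of a diff-based metric concrete, here is a minimal Python sketch of one way to score an AI-generated patch against the patch that was actually merged. It is not Mdarena's implementation: the function names `changed_lines`, `diff_overlap`, and `text_similarity` are hypothetical, and the scoring scheme (Jaccard overlap of changed lines, plus a character-level ratio from the standard library's `difflib`) is an assumption chosen for illustration.

```python
import difflib


def changed_lines(unified_diff: str) -> set[str]:
    """Collect the added and removed lines of a unified diff.

    File headers ("+++ b/file", "--- a/file") are skipped. This is a
    simplification: a removed line whose content itself starts with "--"
    would also be skipped.
    """
    lines = set()
    for line in unified_diff.splitlines():
        if line.startswith(("+++", "---")):
            continue
        if line.startswith(("+", "-")):
            # Keep the +/- marker, normalize surrounding whitespace.
            lines.add(line[0] + line[1:].strip())
    return lines


def diff_overlap(candidate: str, reference: str) -> float:
    """Jaccard overlap between the changed lines of two diffs (0.0 to 1.0)."""
    a, b = changed_lines(candidate), changed_lines(reference)
    if not a and not b:
        return 1.0  # two empty diffs are trivially identical
    return len(a & b) / len(a | b)


def text_similarity(candidate: str, reference: str) -> float:
    """Character-level similarity ratio between two diffs (0.0 to 1.0)."""
    return difflib.SequenceMatcher(None, candidate, reference).ratio()


if __name__ == "__main__":
    # Hypothetical diffs, invented for this example.
    merged = (
        "--- a/app.py\n+++ b/app.py\n@@ -1 +1 @@\n"
        "-def add(a, b): return a - b\n"
        "+def add(a, b): return a + b\n"
    )
    generated = merged + "+# fixed sign\n"
    print(f"overlap:    {diff_overlap(generated, merged):.2f}")
    print(f"similarity: {text_similarity(generated, merged):.2f}")
```

Line overlap rewards a patch for touching the same lines as the merged PR, but it says nothing about whether the code is correct. A real benchmark would likely pair a metric like this with running the repository's tests.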

What the HN Community Says

The Hacker News post drew 16 points and 1 comment, indicating modest interest from the AI community. The single comment highlighted Mdarena's potential to improve the reliability of AI-generated code in open-source projects, though it raised questions about how the tool handles complex PRs. Early testers might see this as a step toward automated validation, in contrast to manual reviews, which often miss subtle errors.

Bottom line: Mdarena provides a simple way to quantify AI performance on real code. Community anecdotes about similar tools suggest debugging-time reductions of up to 20%, though that figure is anecdotal rather than measured.

Why This Matters for AI Development

Existing LLM benchmarks often focus on general tasks rather than a specific code repository, leaving a gap for PR-specific evaluation. Mdarena fills this gap by supporting direct Claude.md vs. PR comparisons, which could help developers refine how they instruct the model for coding tasks. For instance, developers using Anthropic's Claude might benchmark its output against their own GitHub PRs and use the results to make their AI integrations more accurate.
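As an illustration of what a PR-specific evaluation could report, the Python sketch below compares two Claude.md variants across the same set of PRs. Everything in it is assumed for the example: the variant names, the PR labels, and the scores are made-up placeholders, and `summarize` and `head_to_head` are hypothetical helpers, not part of Mdarena.

```python
from statistics import mean


def summarize(scores_by_variant: dict[str, dict[str, float]]) -> dict[str, float]:
    """Average per-PR score for each Claude.md variant."""
    return {
        variant: mean(per_pr.values())
        for variant, per_pr in scores_by_variant.items()
    }


def head_to_head(a: dict[str, float], b: dict[str, float]) -> tuple[int, int, int]:
    """Count (wins, losses, ties) for variant a against b on the PRs both ran."""
    wins = losses = ties = 0
    for pr in a.keys() & b.keys():
        if a[pr] > b[pr]:
            wins += 1
        elif a[pr] < b[pr]:
            losses += 1
        else:
            ties += 1
    return wins, losses, ties


if __name__ == "__main__":
    # Placeholder scores in [0, 1]; not real benchmark results.
    scores = {
        "baseline": {"pr-1": 0.5, "pr-2": 0.75, "pr-3": 0.25},
        "revised": {"pr-1": 0.75, "pr-2": 0.75, "pr-3": 0.5},
    }
    for variant, avg in summarize(scores).items():
        print(f"{variant}: mean score {avg:.2f}")
    print("revised vs baseline (wins, losses, ties):",
          head_to_head(scores["revised"], scores["baseline"]))
```

Reporting wins and losses alongside the mean helps show whether a revised Claude.md improves results broadly or only on one or two PRs.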

Technical context

This tool could help standardize AI benchmarking in software development and encourage more reliable Claude integrations, at a time when GitHub reports more than 100 million developers on its platform.
