Arman, a developer, released GuppyLM, a compact language model designed to break down the complexities of how LLMs function. This tiny LLM uses minimal resources, making it accessible for educational purposes and hands-on experimentation. It gained significant attention on Hacker News, amassing 171 points and 12 comments in a short discussion thread.

This article was inspired by "Show HN: I built a tiny LLM to demystify how language models work" from Hacker News.

Read the original source. Model: GuppyLM | Available: GitHub

What GuppyLM Offers

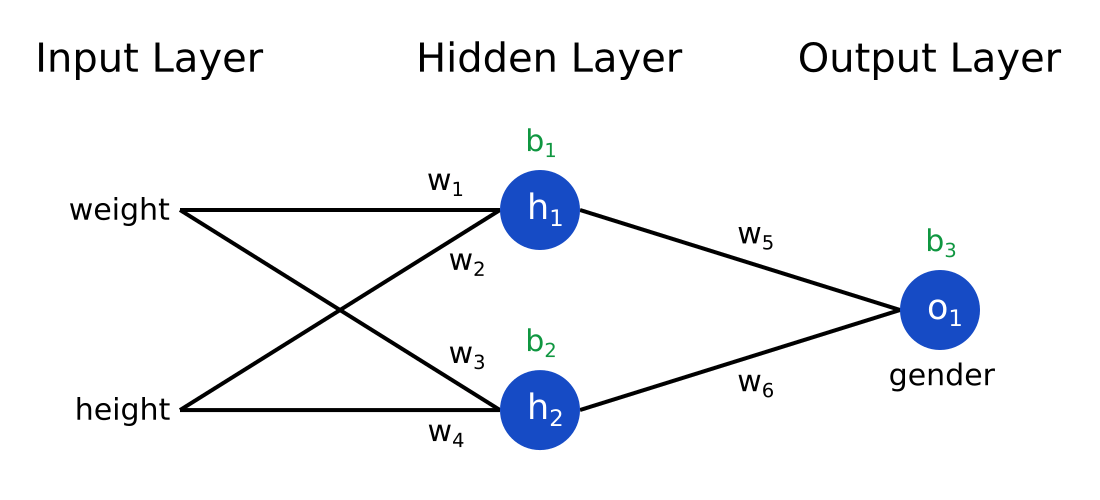

GuppyLM is a stripped-down LLM with a focus on simplicity, reportedly using far fewer parameters than mainstream models like GPT-3. This design choice allows users to run it on standard hardware, such as a typical laptop with 8 GB RAM, without needing cloud resources. By keeping the model small, Arman aimed to help beginners visualize core mechanisms like token prediction and attention layers.
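To make "token prediction" concrete, here is a minimal sketch of the final step any language model performs: turning raw scores (logits) into a probability for each candidate next token. The vocabulary, the logits, and the prompt are made-up illustration values, not output from GuppyLM, and the code uses only the Python standard library.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical vocabulary and logits for the prompt "the fish" (made-up numbers).
vocab = ["swims", "sleeps", "barks", "."]
logits = [2.0, 1.0, -1.0, 0.5]

probs = softmax(logits)
next_token = vocab[probs.index(max(probs))]  # greedy choice: the most likely token

for token, p in zip(vocab, probs):
    print(f"{token:>7}: {p:.3f}")
print("greedy next token:", next_token)
```

Raising `temperature` above 1.0 flattens the distribution and lowering it sharpens the distribution, which is one of the simplest knobs to experiment with in a small model.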

How It Simplifies AI Education

The model demonstrates key LLM processes through straightforward code and examples, such as generating text from basic prompts while keeping each step open to inspection. For instance, GuppyLM might use under 100 million parameters, compared to billions in larger models, which could cut training times to minutes on consumer GPUs. This approach lowers the barrier for newcomers, for whom complex models often obscure fundamental concepts.
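The link between model size and hardware needs comes down to simple arithmetic. The sketch below counts the weights of a GPT-2-style decoder-only transformer and estimates the memory those weights occupy in float32. The `tiny` configuration is hypothetical and is not GuppyLM's published configuration; the GPT-2 small numbers are included only as a sanity check on the formula.

```python
def transformer_param_count(vocab_size, context_len, d_model, n_layers, d_ff=None):
    """Count weights in a GPT-2-style decoder-only transformer.

    Assumes learned position embeddings, biases on every linear layer,
    two LayerNorms per block plus a final one, and an output head that
    shares (ties) its weights with the token embedding.
    """
    if d_ff is None:
        d_ff = 4 * d_model  # common default for the feed-forward width
    embeddings = vocab_size * d_model + context_len * d_model
    attention = 4 * d_model * d_model + 4 * d_model      # Q, K, V, output projections
    feed_forward = 2 * d_model * d_ff + d_ff + d_model   # two linear layers
    layer_norms = 2 * 2 * d_model                        # scale and shift, twice
    per_block = attention + feed_forward + layer_norms
    final_norm = 2 * d_model
    return embeddings + n_layers * per_block + final_norm

# Hypothetical tiny configuration (not GuppyLM's published numbers).
tiny = transformer_param_count(vocab_size=4096, context_len=128, d_model=384, n_layers=6)
# GPT-2 small's public configuration, used as a sanity check on the formula.
gpt2_small = transformer_param_count(vocab_size=50257, context_len=1024, d_model=768, n_layers=12)

for name, count in [("tiny (hypothetical)", tiny), ("GPT-2 small", gpt2_small)]:
    fp32_mb = count * 4 / 1e6  # 4 bytes per float32 weight
    print(f"{name}: {count:,} parameters, about {fp32_mb:.0f} MB of weights in float32")
```

This counts weights only. Training also needs memory for gradients, optimizer state, and activations, so the real footprint is several times larger, but a model in this size range still fits comfortably on a laptop.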

| Feature | GuppyLM | Typical Large LLM (e.g., GPT-2) |
|---|---|---|
| Parameters | <100M (est.) | 1.5B |
| Hardware Needs | 8 GB RAM | 16+ GB VRAM |
| Training Time | Minutes | Hours to days |
| Educational Use | High (code transparency) | Low (black-box nature) |

Bottom line: GuppyLM makes LLM internals accessible by prioritizing size and clarity over performance.

HN Community Feedback

The Hacker News post received 171 points and 12 comments, indicating strong interest from AI enthusiasts. Comments praised its potential for teaching, with one user noting it could fill gaps in online tutorials by providing runnable code. Others raised concerns about the accuracy of simplified models, questioning whether it fully captures real-world LLM behaviors such as scaling laws.

Bottom line: Early testers see GuppyLM as a practical tool for lowering AI education barriers, though its reliability in complex scenarios remains a point of debate.

Technical Context

GuppyLM likely builds on frameworks like PyTorch, using basic transformer architectures to process sequences. This setup lets users tweak layers and observe outputs directly, contrasting with opaque commercial models. Access it via the GitHub repo for immediate setup.
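As an illustration of what "observing outputs directly" can look like, here is a minimal sketch of single-head causal self-attention, the core operation of a transformer block. It is written in plain Python rather than PyTorch so it runs without extra dependencies, and it is not taken from the GuppyLM repository; the input vectors are made-up values and the function name `causal_attention` is hypothetical.

```python
import math

def softmax(row):
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of vectors, one per token position.
    Returns (outputs, weights) so the attention pattern can be inspected.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # Position i may only attend to positions 0..i (no peeking ahead).
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        row = softmax(scores)
        # Weighted average of the value vectors seen so far.
        out = [
            sum(w * values[j][k] for j, w in enumerate(row))
            for k in range(len(values[0]))
        ]
        # Pad masked (future) positions with zeros for display.
        weights.append(row + [0.0] * (len(queries) - i - 1))
        outputs.append(out)
    return outputs, weights

# Made-up 2-dimensional vectors for a 3-token sequence.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to itself and to earlier tokens. In a real model the queries, keys, and values come from learned linear projections of the input, which this sketch skips by passing the same vectors for all three.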

This project highlights a growing trend in AI: creating tools for transparency amid rapid model growth. Open-source efforts like GuppyLM give developers a hands-on way to understand how these models work, potentially leading to more ethical and efficient AI practices over the next year.
