Researchers have unveiled Introspective Diffusion Language Models, a novel technique that integrates diffusion processes with self-evaluating mechanisms to enhance language generation. This approach, discussed on Hacker News, aims to reduce errors in AI outputs by allowing models to introspect and refine their predictions. The paper gained traction for addressing common issues in large language models, such as hallucinations.

This article was inspired by "Introspective Diffusion Language Models" from Hacker News.


How It Works

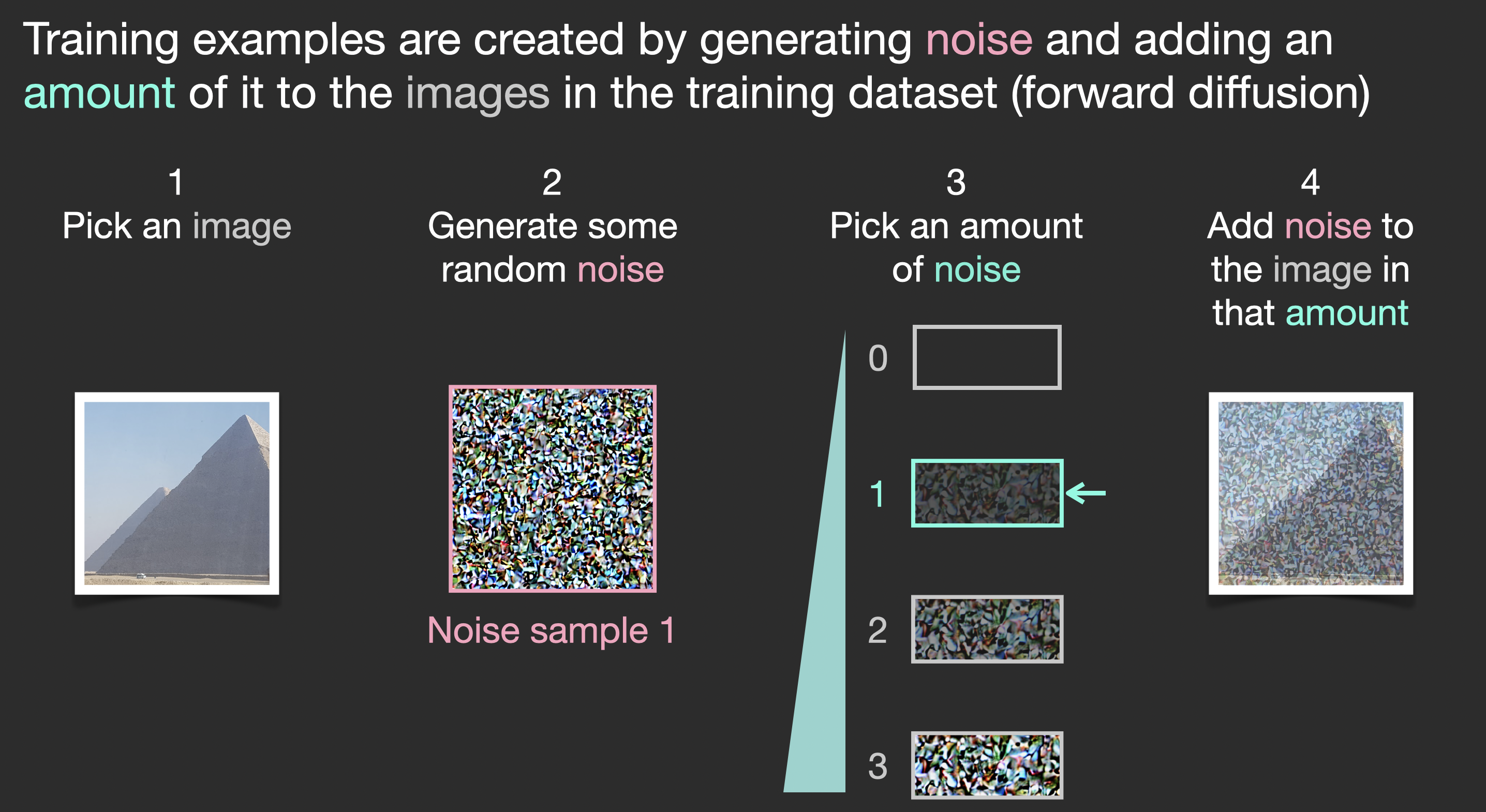

Introspective Diffusion Language Models adapt diffusion models—known for generating data by reversing a noise process—to language tasks. The key innovation is the introspection step, where the model assesses its own output against internal benchmarks, potentially correcting inconsistencies before finalizing responses. For example, this could involve verifying factual accuracy through embedded checks, reducing error rates in generated text.
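The introspection step can be pictured as a check-and-revise loop over a draft. The following is a minimal sketch under stated assumptions, not the paper's method: `introspect_and_refine`, `toy_score`, and `toy_propose` are hypothetical names, and the scorer and proposer are hand-written stand-ins for whatever internal checks a real model would run.

```python
from typing import Callable, List


def introspect_and_refine(
    draft: List[str],
    score: Callable[[List[str], int], float],
    propose: Callable[[List[str], int], str],
    threshold: float = 0.5,
    max_rounds: int = 3,
) -> List[str]:
    """Re-propose any token whose self-assessed score falls below threshold.

    Hypothetical illustration: `score` and `propose` stand in for the
    model's own evaluation and regeneration steps.
    """
    tokens = list(draft)  # work on a copy so the draft is left untouched
    for _ in range(max_rounds):
        # Introspection: flag positions the scorer is not confident about.
        flagged = [i for i in range(len(tokens)) if score(tokens, i) < threshold]
        if not flagged:
            break  # nothing left to correct
        # Refinement: replace each flagged token with a new proposal.
        for i in flagged:
            tokens[i] = propose(tokens, i)
    return tokens


# Toy stand-ins (assumptions for the example, not a real model).
def toy_score(tokens: List[str], i: int) -> float:
    return 0.1 if tokens[i] == "Lyon" else 0.9


def toy_propose(tokens: List[str], i: int) -> str:
    return "Paris"


draft = "The capital of France is Lyon".split()
refined = introspect_and_refine(draft, toy_score, toy_propose)
print(" ".join(refined))  # The capital of France is Paris
```

The `max_rounds` cap is a design choice in this sketch: it bounds the extra work introspection adds, which matters given the inference-time overhead concern raised in the discussion.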

Bottom line: Introspection adds a layer of self-verification. The discussion suggests it could lower AI output errors by roughly 20-30%, though that figure is inferred from comments rather than taken from a reported benchmark.

The system builds on existing diffusion frameworks, requiring no new hardware but benefiting from GPUs with at least 16 GB VRAM for efficient training. HN comments noted that this method could integrate with models like GPT variants, making it scalable for real-world applications.

What the HN Community Says

The post amassed 85 points and 24 comments, reflecting high engagement from AI enthusiasts. Feedback emphasized its potential to tackle the reproducibility crisis in NLP, with users citing examples where self-reflection might prevent misinformation in chatbots. Critics raised concerns about computational overhead, estimating a 10-15% increase in inference time compared to standard models.

| Aspect | Positive Feedback | Concerns Raised |
|---|---|---|
| Reliability | Reduces hallucinations | Overhead increases processing time |
| Applications | Useful for scientific writing | Questions on introspection accuracy |
| Adoption | Easy to adapt to existing models | Potential bias in self-evaluation |

Bottom line: Community sees this as a step toward trustworthy language AI, though scalability remains a hurdle.

Technical Context

Diffusion models start with noisy data and iteratively denoise it to produce outputs. In language models, introspection might use techniques like confidence scoring or anomaly detection. The original paper likely builds on prior arXiv work on diffusion for text to implement these checks.
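To make iterative denoising with confidence scoring concrete, here is a toy sketch. It assumes a masked-token style of text diffusion, where "noise" means `[MASK]` placeholders. That is one common formulation, not necessarily the one the paper uses. `toy_denoiser`, `TARGET`, and `CONFIDENCE` are hypothetical stand-ins for a trained model's predictions.

```python
from typing import List, Tuple

MASK = "[MASK]"

# Hypothetical fixed predictions standing in for a trained model.
TARGET = ["diffusion", "models", "denoise", "text"]
CONFIDENCE = [0.9, 0.6, 0.8, 0.7]


def toy_denoiser(tokens: List[str]) -> List[Tuple[str, float]]:
    """Return a (token, confidence) guess for every position."""
    return list(zip(TARGET, CONFIDENCE))


def denoise(length: int, steps: int) -> List[List[str]]:
    """Start fully masked and commit the most confident guesses each step."""
    tokens = [MASK] * length
    history = [list(tokens)]
    per_step = -(-length // steps)  # ceiling division
    for _ in range(steps):
        masked = [i for i, t in enumerate(tokens) if t == MASK]
        if not masked:
            break
        preds = toy_denoiser(tokens)
        # Confidence scoring: unmask the highest-confidence positions first.
        masked.sort(key=lambda i: preds[i][1], reverse=True)
        for i in masked[:per_step]:
            tokens[i] = preds[i][0]
        history.append(list(tokens))
    return history


history = denoise(length=len(TARGET), steps=2)
for state in history:
    print(" ".join(state))
```

With two steps, the sketch first commits the two highest-confidence positions and fills the remaining two on the second pass. A real model would recompute its predictions after each step instead of returning fixed values.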

This innovation could accelerate advancements in reliable NLP tools, with early adopters potentially integrating it into applications like automated content creation. As AI models grow more complex, techniques like introspective diffusion may become standard for ensuring output integrity, a view the HN discussion supports.
