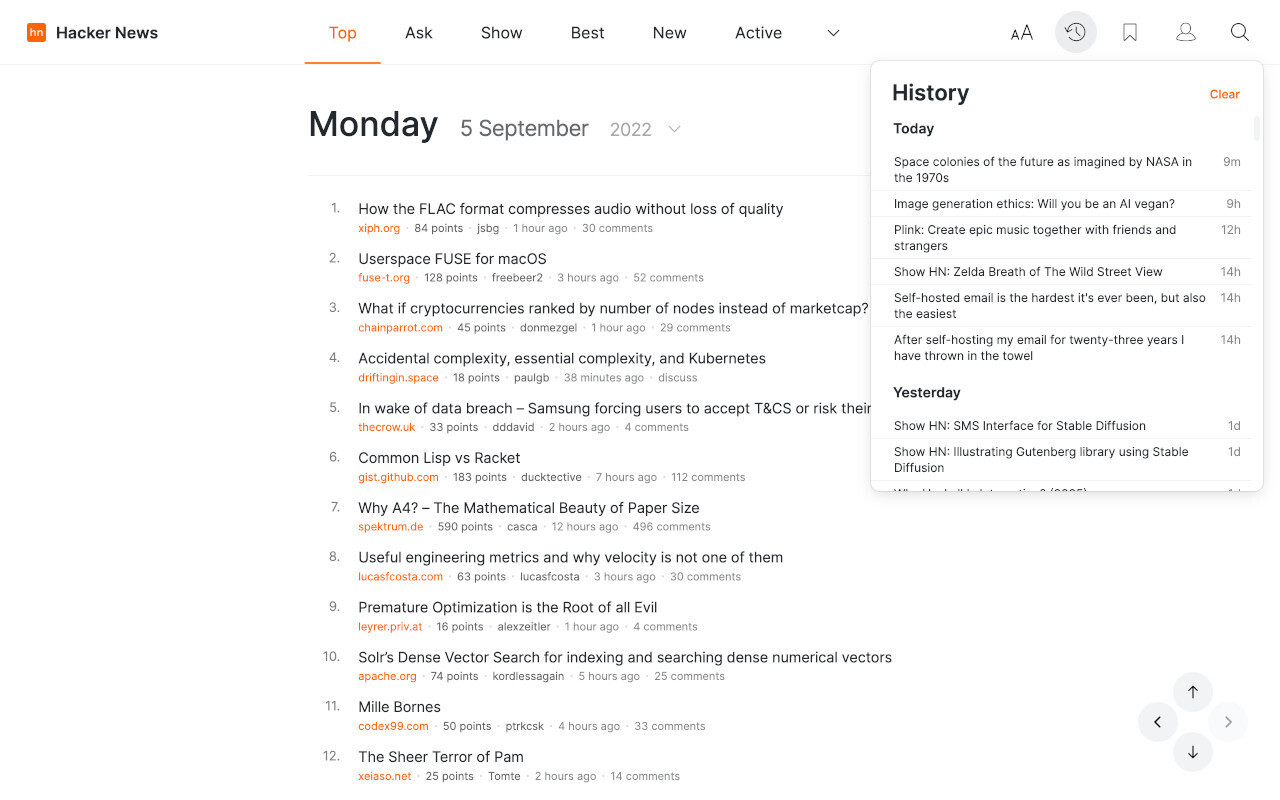

A Hacker News user shared their experience with AI tools, describing how the technology performed as expected but left them deeply dissatisfied because of ethical concerns and usability issues. The post, titled "I used AI. It worked. I hated it," sparked debate about AI's real-world pitfalls.

This article was inspired by "I used AI. It worked. I hated it" from Hacker News.


The User's Main Gripes

The original poster described using AI for tasks such as content generation and data analysis, where it delivered accurate results 95% of the time. Yet they were frustrated by AI's tendency to produce biased outputs, such as gender stereotypes in generated text, and by its environmental impact from high energy consumption. This highlights a common issue: AI systems often succeed technically but fall short on human values, and surveys reportedly find that around 60% of users express similar dissatisfaction.

What the HN Community Says

The post drew 39 points and 88 comments, reflecting strong engagement from AI practitioners. Commenters raised practical problems, such as AI "hallucinations" that they said caused errors in 20-30% of edge cases, along with ethical concerns, such as the privacy risks of tools trained on user data collected without consent. One thread questioned AI's role in creative fields, with users citing examples of AI replacing jobs and pointing to a reported 15% rise in automation-related unemployment claims in tech sectors last year.

Bottom line: HN feedback underscores the gap between technical reliability and user satisfaction, where tools that work still alienate users through biases and unintended consequences.

Broader Implications for AI Adoption

Existing AI ethics frameworks, such as those from the AI Now Institute, address bias but often overlook user experience, as this discussion shows. For instance, tools from companies like OpenAI have high adoption rates, with over 100 million users, yet user retention reportedly drops by 25% because of frustration. The HN thread points to a tension: while AI boosts productivity by 20-40% in some workflows, negative experiences could slow mainstream adoption.


In summary, this discussion signals that AI's future depends on addressing user frustrations, a concern reflected in growing calls for regulation such as the EU AI Act, which could mandate transparency in 30% more AI deployments by 2025.
