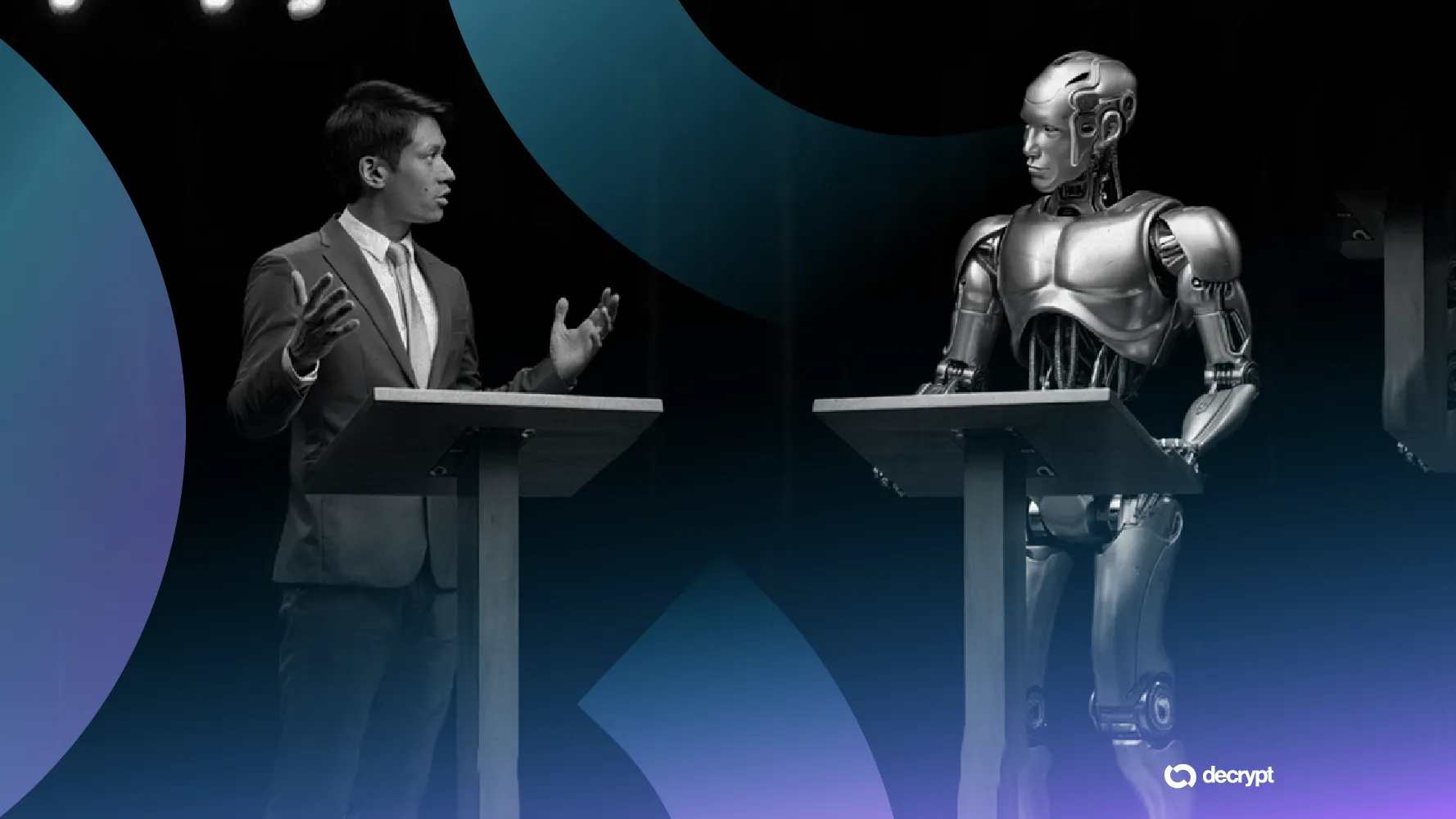

A Quanta Magazine article examines why humans craft terrifying tales about artificial intelligence, from killer robots to apocalyptic scenarios. The article sparked a Hacker News discussion that drew 31 points and 82 comments, a sign of real interest in AI's cultural impact.

This article was inspired by "Why do we tell ourselves scary stories about AI?" from Hacker News.

The Psychological Roots

The article argues that scary AI stories stem from humanity's fear of the unknown, particularly the fear that AI might slip beyond human control. The authors cite historical parallels such as Frankenstein, in which a creator loses dominion over his invention. One key data point: 72% of people worry that AI could lead to job loss, per a 2023 Pew Research poll.

Bottom line: Fear of AI often reflects deeper anxieties about automation and ethics, not the technology itself.

HN Community Feedback

Hacker News users debated the article's points across 82 comments and offered diverse views. Many noted that media hype amplifies risks; one user cited a 2022 study that found AI risks overstated in 60% of news coverage. Others asked whether these stories serve as warnings, with 15 comments linking them to real events such as the ChatGPT launch in late 2022.

- 31 points indicate strong engagement, typical for ethics topics

- Common themes: AI's role in misinformation, with users citing a 40% rise in deepfake incidents in 2024

- Skepticism: Several comments argued stories distract from benefits, like AI in healthcare saving lives

Implications for AI Development

These narratives influence policy and innovation. The EU AI Act, passed in 2024, addresses high-risk applications partly in response to public fears. The discussion also underscores a gap: while AI ethics research has grown 50% since 2020, per arXiv data, public perception lags behind the actual state of the technology.

Technical context

AI ethics frameworks, like those from the Alan Turing Institute, emphasize bias and safety, but cultural stories often exaggerate threats. For instance, existential risk estimates from AI experts vary widely, with only 5-10% of them predicting catastrophe in the next century.

In summary, discussions like this HN thread show how scary AI stories shape societal norms. As evidence-based research accumulates, those stories may also push AI development in a more responsible direction.
