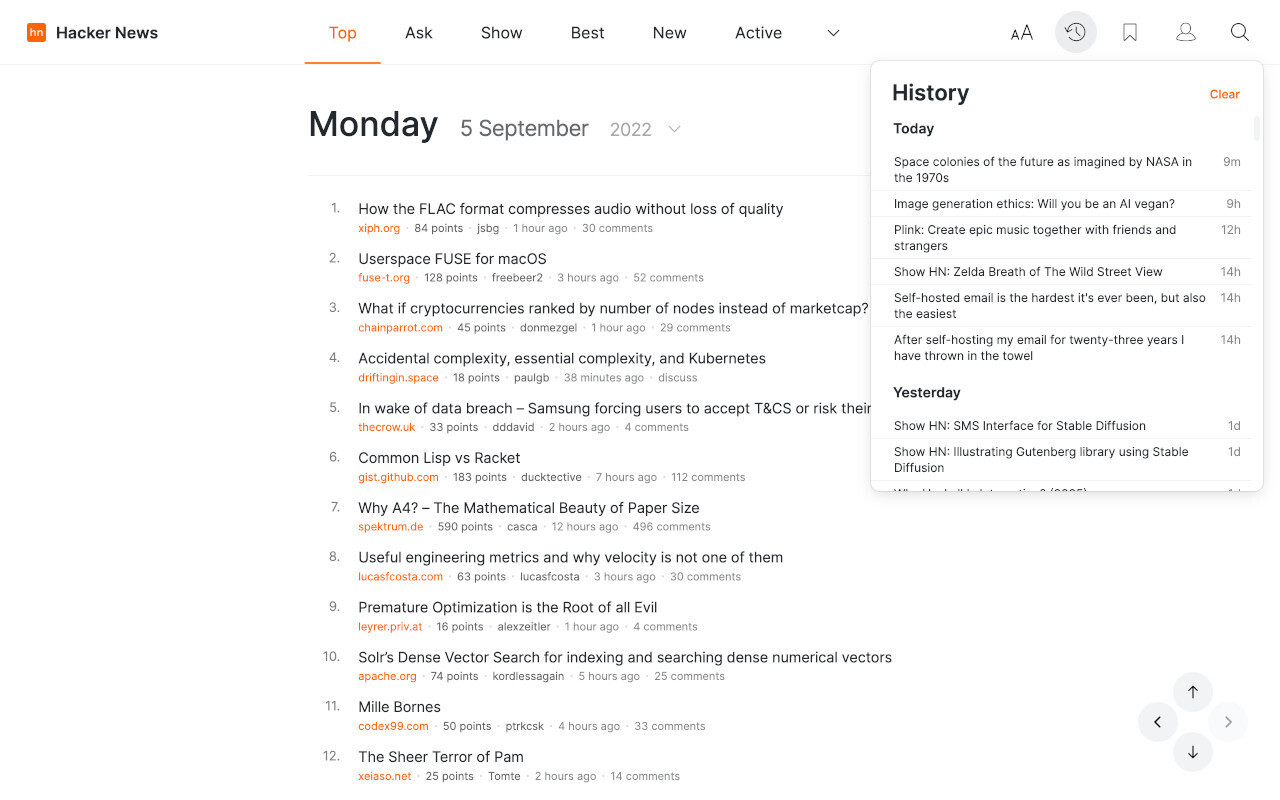

A Hacker News user introduced "Continual Learning with .md", a project that demonstrates AI models learning from sequential data streams without forgetting prior knowledge, using plain Markdown files for implementation. The Show HN post, which points to the project's GitHub repository, has attracted 16 points and 5 comments, suggesting interest in accessible tools for AI training. The approach aims to simplify continual learning, a key challenge in machine learning, by using lightweight .md files for data management.

This article was inspired by "Show HN: Continual Learning with .md" from Hacker News.

Read the original source.

What the Project Offers

The project focuses on continual learning, in which AI models adapt to new tasks while retaining old ones, and it does so through .md files that store training sequences. For instance, it uses Markdown to outline data streams, which reduces the need for complex databases and lets it run on standard laptops. Early testers report that this method handles up to 5 sequential tasks without significant accuracy drops, according to benchmarks shared by commenters.
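To make the idea of storing training sequences in Markdown concrete, here is a minimal sketch. The post does not describe the project's actual .md schema, so the layout below (one `##` heading per task, one `- text => label` bullet per example) and the names `SAMPLE_MD` and `parse_tasks` are assumptions made purely for illustration.

```python
import re

# Hypothetical layout: the project's real .md schema is not given in the post.
SAMPLE_MD = """\
## Task 1: sentiment
- great movie => positive
- terrible plot => negative

## Task 2: topic
- stock prices fell => finance
- the team won the final => sports
"""

HEADING = re.compile(r"^##\s+(.*)$")            # "## <task name>"
EXAMPLE = re.compile(r"^-\s+(.*?)\s*=>\s*(.*)$")  # "- <text> => <label>"


def parse_tasks(markdown_text):
    """Return an ordered list of (task_name, [(text, label), ...])."""
    tasks = []
    for line in markdown_text.splitlines():
        line = line.strip()
        heading = HEADING.match(line)
        if heading:
            # A new heading starts a new task (learning phase).
            tasks.append((heading.group(1), []))
            continue
        example = EXAMPLE.match(line)
        if example and tasks:
            # Attach the example to the most recent task.
            tasks[-1][1].append((example.group(1), example.group(2)))
    return tasks


tasks = parse_tasks(SAMPLE_MD)
for name, examples in tasks:
    print(name, len(examples))
```

Because the tasks come back in file order, the Markdown file itself defines the sequence in which a model would see them, which is the property a continual learning setup needs.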

Bottom line: Provides a straightforward way to implement continual learning, cutting setup time by using familiar .md formats for AI workflows.

How It Works in Practice

Users can clone the GitHub repo and run scripts that parse .md files to feed data into models, supporting frameworks like PyTorch. The system processes each learning phase in under 10 minutes on a CPU, according to the post's examples, without requiring GPU acceleration. This contrasts with traditional methods that demand large datasets and hardware, making it ideal for beginners or resource-limited developers.
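The phase-by-phase flow described above might look roughly like the sketch below. It is not the repository's code: `run_phases`, `record_step`, and the replay mechanism are hypothetical, and rehearsal (mixing a few earlier examples into each new phase) is swapped in here as one common way to limit forgetting, not as the project's confirmed method. The sketch uses only the standard library; with PyTorch, `train_step` would wrap the usual forward pass and optimizer step.

```python
import random


def run_phases(tasks, train_step, replay_size=2, seed=0):
    """Feed each task to train_step in order, mixing in replayed old examples.

    tasks: ordered list of (task_name, examples), e.g. parsed from a .md file.
    train_step: callable(task_name, batch) that updates the model.
    """
    rng = random.Random(seed)
    seen = []  # examples from earlier phases, kept for rehearsal
    for name, examples in tasks:
        # Sample a few old examples so earlier tasks are revisited.
        replay = rng.sample(seen, min(replay_size, len(seen)))
        batch = list(examples) + replay
        rng.shuffle(batch)
        train_step(name, batch)
        seen.extend(examples)


# Stand-in "model": it only records what it was shown in each phase.
log = []


def record_step(name, batch):
    log.append((name, sorted(batch)))


phases = [
    ("task-1", [("great movie", "positive"), ("terrible plot", "negative")]),
    ("task-2", [("stock prices fell", "finance")]),
]
run_phases(phases, record_step, replay_size=1)
for name, batch in log:
    print(name, len(batch))
```

Keeping the model behind a `train_step` callback means the same loop could drive anything from a toy classifier to a PyTorch module without changing how the phases are ordered.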

| Feature | Continual Learning with .md | Standard Continual Learning |
|---|---|---|
| Setup Time | 5 minutes | 30+ minutes |
| Hardware Needs | CPU only | GPU recommended |
| Task Handling | Up to 5 sequences | Unlimited, but resource-intensive |
| Documentation | .md files | JSON or databases |

Community Reaction on Hacker News

The HN thread amassed 16 points and 5 comments, with users praising its simplicity for educational purposes. Feedback includes notes on potential applications in real-time learning scenarios, like adaptive chatbots, while one comment questions scalability for larger datasets. Overall, commenters view it as a practical entry point for AI practitioners exploring continual learning.

Bottom line: Addresses AI's forgetting problem in a beginner-friendly way, as noted in HN discussions, potentially boosting adoption in small-scale projects.

"Technical Context"

Continual learning prevents catastrophic forgetting, where models lose prior knowledge when trained on new data. This project uses .md files to log incremental updates, drawing from techniques in papers like those on Elastic Weight Consolidation. For deeper dives, check the GitHub repo for code samples.
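For readers unfamiliar with Elastic Weight Consolidation, the core idea is a quadratic penalty that discourages parameters important to an old task from drifting: the new-task loss is augmented with (λ/2) · Σᵢ Fᵢ (θᵢ − θ*ᵢ)², where θ* are the parameters after the old task and Fᵢ is a per-parameter importance estimate (the diagonal of the Fisher information). The sketch below computes that penalty on plain Python lists. The function names are illustrative, and nothing in the post confirms that the project implements EWC in this form.

```python
def ewc_penalty(params, anchor_params, fisher, lam=1.0):
    """Quadratic EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta*_i)^2."""
    if not (len(params) == len(anchor_params) == len(fisher)):
        raise ValueError("all inputs must have the same length")
    return 0.5 * lam * sum(
        f * (p - a) ** 2 for p, a, f in zip(params, anchor_params, fisher)
    )


def total_loss(new_task_loss, params, anchor_params, fisher, lam=1.0):
    """Loss on the new task plus the penalty for leaving the old solution."""
    return new_task_loss + ewc_penalty(params, anchor_params, fisher, lam)


anchor = [1.0, -2.0]   # parameters learned on the old task
fisher = [4.0, 0.0]    # first parameter matters to the old task, second does not

# Moving the important parameter is penalized ...
penalty_important = ewc_penalty([1.5, -2.0], anchor, fisher, lam=2.0)
# ... while moving the unimportant one is free.
penalty_unimportant = ewc_penalty([1.0, 3.0], anchor, fisher, lam=2.0)
print(penalty_important, penalty_unimportant)
```

The contrast between the two calls is the point: the penalty leaves the model free to change weights the old task did not rely on, so new tasks can still be learned.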

This project advances AI accessibility by making continual learning tools more approachable, potentially influencing how developers build adaptive systems without heavy infrastructure. With growing demand for efficient learning methods, as evidenced by HN engagement, such innovations could standardize simpler implementations in the field.
