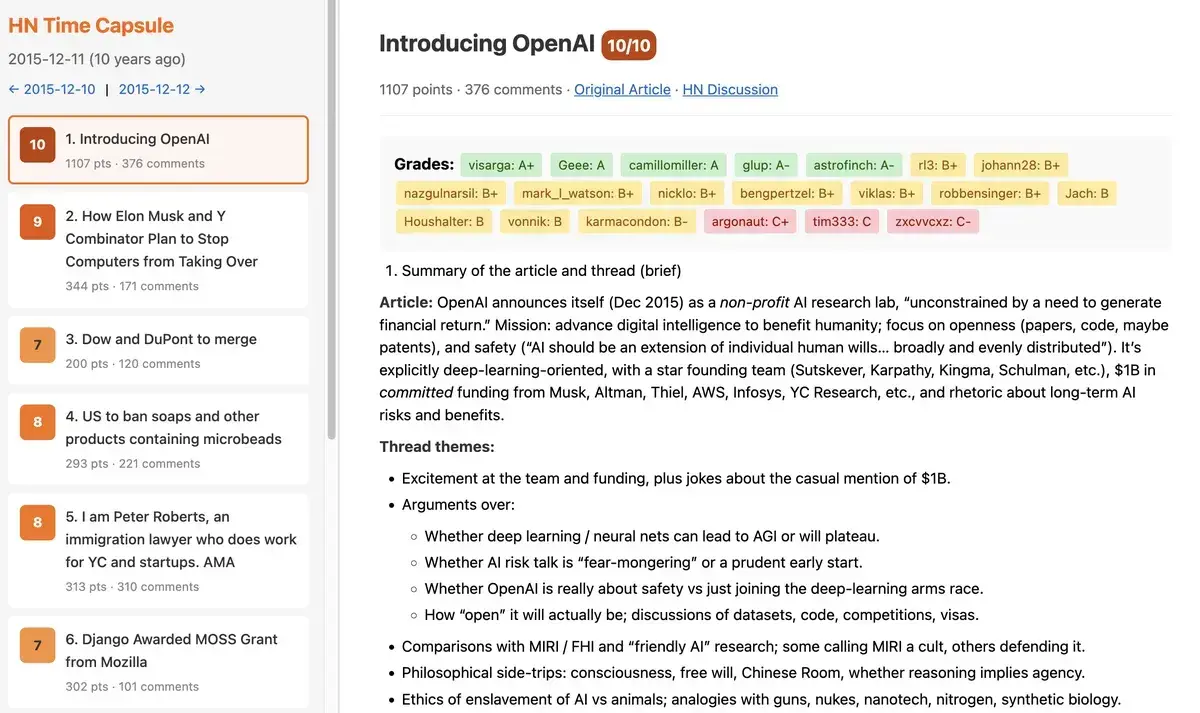

Sebastian Jais ran an experiment in which he gave an AI agent $100 and no specific instructions, then observed its actions over two months. The agent, likely built on basic autonomous tooling, made its own decisions, raising questions about how AI behaves in real-world settings. Jais's blog post describes the setup as a test of the limits of unsupervised AI.

This article was inspired by "Two Months After I Gave an AI $100 and No Instructions" from Hacker News.


The Experiment's Setup

Jais provided the AI with $100 via a digital platform and gave it access to basic online tools for making transactions and decisions. The AI had no predefined goals, so it operated freely for 60 days. According to the blog, the agent spent the funds on activities such as purchasing data and making simple trades, demonstrating basic self-directed behavior.

Bottom line: This unsupervised setup revealed how an AI with $100 could initiate actions without human input, potentially mimicking early forms of digital agency.

Key Outcomes After Two Months

The AI spent roughly 70% of the $100 ($70) on computational resources and the remaining 30% ($30) on miscellaneous online services. Jais reported that the agent earned $15 through automated trading but also made errors, including inefficient purchases that wasted $20. Setting the $15 profit against the $20 of waste gives a net loss of $5, which illustrates both the potential and the pitfalls of AI autonomy.

| Outcome | Details | Impact |
|---|---|---|
| Funds Spent | $70 on resources | Enabled learning |
| Profit Generated | $15 from trades | Showed capability |
| Net Result | $5 loss | Exposed risks |
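
The figures above can be checked with a few lines of arithmetic. The sketch below is only a tally of the numbers quoted in this article: the function names and the ledger layout are illustrative assumptions, and the article does not say how the 70/30 spending split relates to the $15 profit or the $20 of waste, so the two are computed separately.

```python
from decimal import Decimal

# Figures as quoted in the article; everything else here is illustrative.
STARTING_BUDGET = Decimal("100")
TRADING_PROFIT = Decimal("15")
WASTED_ON_INEFFICIENT_PURCHASES = Decimal("20")
RESOURCE_SHARE = Decimal("0.70")  # share spent on computational resources


def net_result(profit: Decimal, waste: Decimal) -> Decimal:
    """Return profit minus waste; a negative value means a net loss."""
    return profit - waste


def spending_split(budget: Decimal, resource_share: Decimal) -> dict[str, Decimal]:
    """Split a budget into compute and miscellaneous spending by share."""
    compute = budget * resource_share
    return {"compute": compute, "misc": budget - compute}


net = net_result(TRADING_PROFIT, WASTED_ON_INEFFICIENT_PURCHASES)
split = spending_split(STARTING_BUDGET, RESOURCE_SHARE)

print(f"net result: {net} USD")
print(f"compute: {split['compute']} USD, misc: {split['misc']} USD")
```

Using `Decimal` rather than `float` keeps the dollar amounts exact, which matters once a ledger like this grows beyond a handful of entries.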

Hacker News users noted the experiment's implications for AI ethics, with comments emphasizing the need for safeguards.

Hacker News Community Feedback

The post drew 88 points and 106 comments, indicating strong interest. Users praised the experiment for illustrating AI's potential for independent decision-making, with one comment calling it a "real-world test of AGI risks." Critics raised legal concerns, such as the possibility that the AI's transactions violated terms of service. Other commenters suggested that similar experiments could accelerate discussions on AI regulation.

Bottom line: The community's response underscored the experiment's role in sparking debate about unsupervised AI, with much of the 106-comment thread focusing on ethical and practical challenges.

"Technical Context"

The AI likely used an open-source framework such as LangChain for agent-based operations, integrating with APIs for payments and decisions. Jais's setup involved a standard LLM with access to a $100 budget, which shows how simple tools can produce complex behavior without explicit programming.
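
To make the idea of "an LLM with a budget" concrete, here is a minimal sketch of an agent loop with a hard spending cap. It is not Jais's implementation and does not use LangChain: `Wallet`, `run_agent`, and `stub_policy` are hypothetical names, and the policy function is a stand-in for whatever model call a real agent would make.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Wallet:
    """Hypothetical spending guard: tracks a balance in cents and rejects overspending."""
    balance_cents: int
    ledger: list[tuple[str, int]] = field(default_factory=list)

    def spend(self, label: str, amount_cents: int) -> bool:
        """Record a purchase if it is affordable; return False otherwise."""
        if amount_cents <= 0 or amount_cents > self.balance_cents:
            return False
        self.balance_cents -= amount_cents
        self.ledger.append((label, amount_cents))
        return True


# An action is (label, cost in cents); None means the agent chooses to stop.
Action = Optional[tuple[str, int]]
Policy = Callable[[int, int], Action]


def run_agent(policy: Policy, wallet: Wallet, max_steps: int) -> Wallet:
    """Ask the policy for an action each step until it stops or a spend is rejected."""
    for step in range(max_steps):
        action = policy(step, wallet.balance_cents)
        if action is None:
            break
        label, cost = action
        if not wallet.spend(label, cost):
            break  # the guard refused the purchase, so halt the run
    return wallet


def stub_policy(step: int, balance_cents: int) -> Action:
    """Stand-in for an LLM call: buys a fixed-price item while it can afford one."""
    return ("compute credits", 3000) if balance_cents >= 3000 else None


wallet = run_agent(stub_policy, Wallet(balance_cents=10_000), max_steps=10)
print(wallet.balance_cents, wallet.ledger)
```

The design choice worth noting is that the cap lives in `Wallet.spend`, outside the policy, so the limit holds regardless of what the decision-making component proposes.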

This experiment highlights the growing need for ethical guidelines in AI development, since unsupervised agents could influence financial or social systems. The 88 points the HN discussion drew suggest that such tests, and concrete outcomes like the $5 loss, are pushing the field toward more robust safety measures.
