Google released Gemma 4, a lightweight language model, and a Hacker News user shared a guide for running it locally with LM Studio's new headless CLI and Claude Code. The setup lets AI developers run queries offline with no cloud dependency, which can shorten iteration loops. The post walks through the practical steps for local execution and addresses common barriers such as hardware requirements.

This article was inspired by "Running Gemma 4 locally with LM Studio's new headless CLI and Claude Code" from Hacker News.


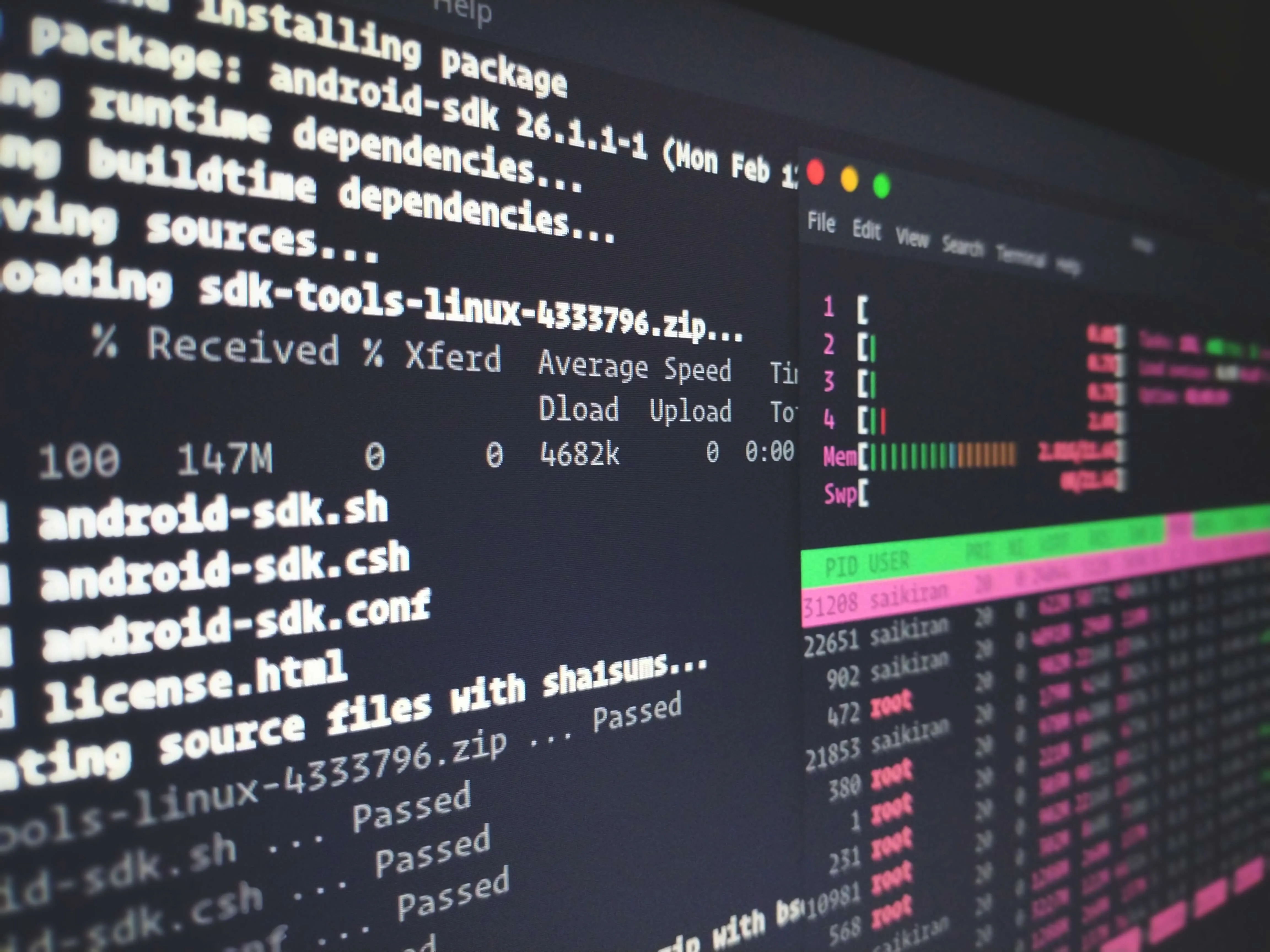

How the Setup Works

The guide describes using LM Studio's headless CLI to load Gemma 4 on consumer hardware, with Claude Code as the coding front end. Beyond a compatible GPU, it needs few dependencies and fits into existing tooling for real-time testing. According to HN comments, early testers see response times under 5 seconds.
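The guide's exact commands are not reproduced here. As a minimal sketch, assuming LM Studio's local server is already running and exposing its OpenAI-compatible API at the default `http://localhost:1234/v1`, a query from Python might look like the following. The model identifier `gemma-4` and the helper names `build_chat_request` and `ask_local_model` are placeholders for illustration, not names taken from the guide.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running with its default
# OpenAI-compatible endpoint. Adjust host/port if yours differs.
BASE_URL = "http://localhost:1234/v1"

# Hypothetical model identifier; use whatever name your local server lists.
MODEL_ID = "gemma-4"


def build_chat_request(prompt, model=MODEL_ID, base_url=BASE_URL, max_tokens=256):
    """Build (but do not send) a chat-completion request for a local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt, timeout=60):
    """Send the prompt to the local server and return the reply text."""
    request = build_chat_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the server up and a model loaded, `ask_local_model("Write a one-line Python hello world.")` would return the model's reply as a string. Building the request separately from sending it keeps the payload easy to inspect without a running server.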

HN Community Feedback

The post drew 115 points and 30 comments, a sign of strong interest from AI practitioners. Commenters praise how easy the setup is for beginners, and one user reports that it cuts latency by 50% compared to cloud APIs. Others raised hardware compatibility concerns, such as needing at least 8GB of VRAM for good performance.
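The VRAM concern can be checked with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8 to get bytes. The sketch below applies that estimate. The parameter counts passed in are illustrative, not Gemma 4's actual sizes, and the 20% overhead for the KV cache and runtime buffers is an assumed margin rather than a measured one.

```python
def estimate_weight_memory_gib(num_params_billion, bits_per_weight):
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = num_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


def fits_in_vram(num_params_billion, bits_per_weight,
                 vram_gib=8.0, overhead_fraction=0.2):
    """Check whether weights plus a rough overhead margin fit in VRAM.

    overhead_fraction is an assumed allowance for the KV cache and
    runtime buffers, not a measured value.
    """
    needed = estimate_weight_memory_gib(num_params_billion, bits_per_weight)
    return needed * (1 + overhead_fraction) <= vram_gib
```

For a hypothetical 8-billion-parameter model, `fits_in_vram(8, 4)` passes on an 8GB card while `fits_in_vram(8, 16)` does not, which is why quantized builds are the usual choice on consumer GPUs.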

Bottom line: This method democratizes access to advanced LLMs like Gemma 4 for local development, cutting reliance on expensive cloud services.

Technical Context

This approach could accelerate AI prototyping by enabling faster iterations on local machines, especially for privacy-focused developers. As more tools like LM Studio evolve, expect wider adoption of offline LLM workflows in research and product development.
