Anthropic's Claude, a widely used large language model, may consume "invisible" tokens that count against user limits without appearing in the output. For developers who rely on Claude for coding tasks, this could mean higher costs, because these tokens draw down quotas unnoticed. According to the Hacker News discussion, the effect matters most to users who track their spending closely.

This article was inspired by "Claude Code may be burning your limits with invisible tokens" from Hacker News.


The Invisible Tokens Problem

Invisible tokens in Claude are internal processing elements that are not displayed in the final response but still count toward usage limits. The source material notes that, based on user reports, these tokens can inflate costs by 20-30% in some coding scenarios. Developers might therefore exhaust their monthly limits faster than expected; in one example, a simple code generation task consumed 150 extra tokens invisibly.

Bottom line: Invisible tokens add hidden overhead, potentially increasing Claude's effective cost per query by 25% or more for frequent users.
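To make the overhead figure concrete, here is a minimal sketch of the arithmetic. The 150 hidden tokens come from the example above; the 600 visible tokens are a hypothetical value chosen only so the numbers are easy to follow, and the function name is illustrative rather than part of any Claude tooling.

```python
def hidden_overhead(visible_tokens: int, hidden_tokens: int) -> float:
    """Return hidden tokens as a fraction of the visible tokens."""
    if visible_tokens <= 0:
        raise ValueError("visible_tokens must be positive")
    return hidden_tokens / visible_tokens


# 150 hidden tokens is the reported example; 600 visible tokens is an assumption.
overhead = hidden_overhead(visible_tokens=600, hidden_tokens=150)
print(f"Hidden overhead: {overhead:.0%}")  # Hidden overhead: 25%
```

Under that assumption, a task of this size would land in the middle of the reported 20-30% range. A larger visible response with the same 150 hidden tokens would show a proportionally smaller overhead.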

HN Community Reactions

The Hacker News post received 25 points and 4 comments, indicating moderate interest. Comments highlighted concerns about transparency, with one user pointing out that this could erode trust in AI billing practices. Another noted potential workarounds, like monitoring API logs for discrepancies, though no specific fixes were proposed.

| Aspect | User Feedback |
|---|---|
| Impact | Increases costs by ~25% |
| Awareness | Low, as tokens are invisible |
| Suggestions | Monitor API usage |

Bottom line: The community sees this as a transparency issue, with early testers urging Anthropic to reveal token counts more clearly.

Why This Matters for AI Users

For developers and researchers using Claude, invisible tokens make budgeting harder in AI workflows, where token limits often cap usage at 1 million tokens per month for basic plans. Compared with competitors such as GPT-4, which provides visible token breakdowns, Claude's approach leaves a gap in cost predictability. If it is not addressed, this could push users toward alternative models, especially in resource-constrained environments.
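A rough way to see the budget impact is to estimate how many requests fit into a monthly quota with and without hidden overhead. The sketch below uses the 1 million token cap and the roughly 25% overhead mentioned in this article; the 600 visible tokens per request and the function name are hypothetical values for illustration.

```python
import math


def requests_per_month(monthly_quota: int, visible_tokens: int, overhead: float) -> int:
    """Estimate how many requests fit in a quota when each request
    is billed for its visible tokens plus a fractional hidden overhead."""
    billed_per_request = math.ceil(visible_tokens * (1 + overhead))
    return monthly_quota // billed_per_request


QUOTA = 1_000_000  # monthly cap cited in the article
VISIBLE = 600      # hypothetical visible tokens per request

expected = requests_per_month(QUOTA, VISIBLE, overhead=0.0)
actual = requests_per_month(QUOTA, VISIBLE, overhead=0.25)
print(expected, actual)  # 1666 1333
```

With these assumed numbers, a 25% overhead removes about a fifth of the requests a user would expect to get from the same quota.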

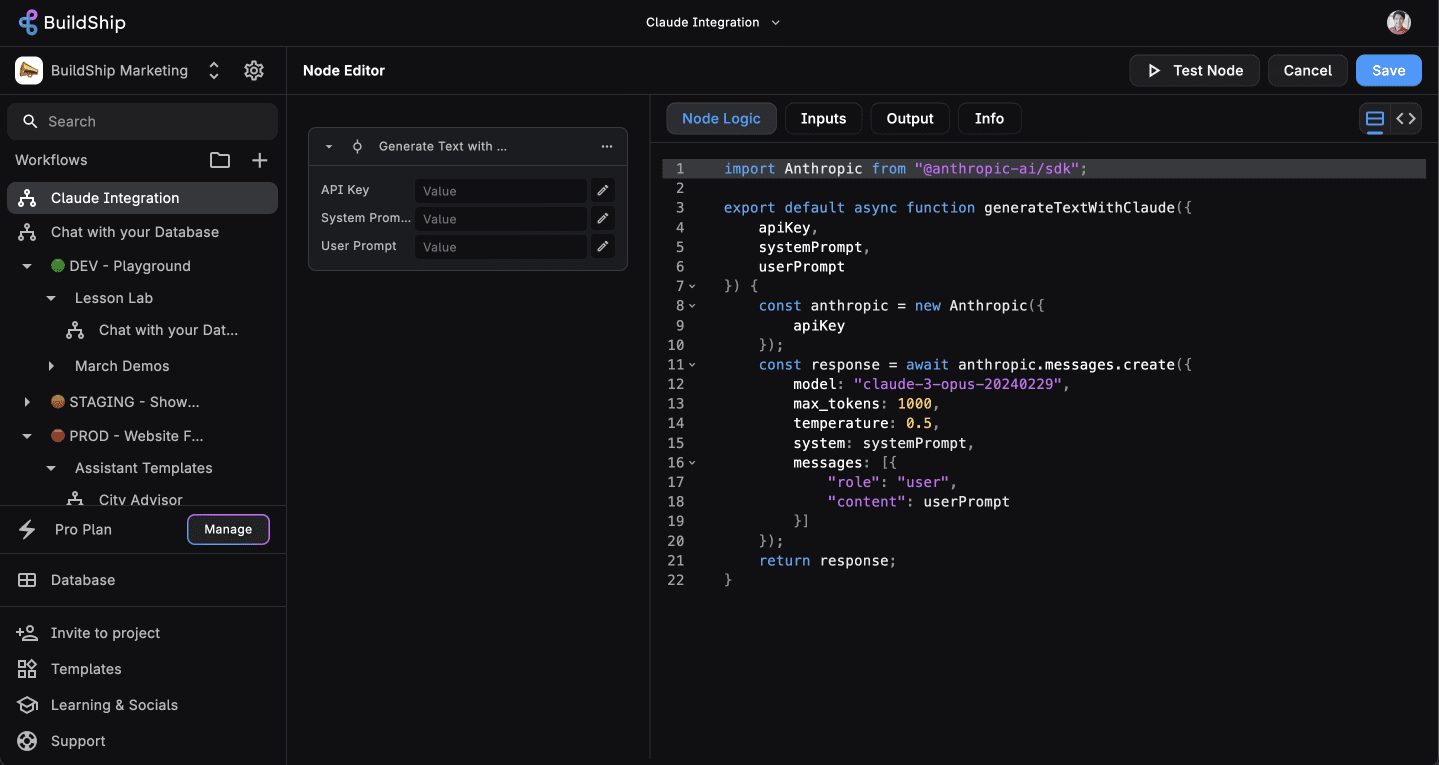

Technical Context

Invisible tokens likely stem from Claude's internal mechanisms for handling code generation, such as intermediate steps in prompt processing. Users can partially audit this by enabling detailed API logging, which might reveal token usage patterns.
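The audit suggested above can be sketched as a comparison between the output tokens a request was billed for and the tokens you can account for in the visible response. The record layout, field names, and 10% threshold below are assumptions for illustration, not a real log format. In practice the billed count would come from whatever usage figures your logs capture for each API response, and the visible count from your own tokenization of the returned text.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    request_id: str             # hypothetical identifier from your own logs
    billed_output_tokens: int   # output tokens the provider reported for the request
    visible_output_tokens: int  # your own count of tokens in the visible response


def flag_discrepancies(records, threshold=0.10):
    """Return (request_id, extra_tokens, ratio) for every record whose
    billed output exceeds the visible output by more than `threshold`."""
    flagged = []
    for record in records:
        if record.visible_output_tokens <= 0:
            continue  # cannot compute a ratio without visible tokens
        extra = record.billed_output_tokens - record.visible_output_tokens
        ratio = extra / record.visible_output_tokens
        if ratio > threshold:
            flagged.append((record.request_id, extra, ratio))
    return flagged


# Made-up sample data: one request with 150 unexplained tokens, one near-exact match.
logs = [
    UsageRecord("req-a", billed_output_tokens=750, visible_output_tokens=600),
    UsageRecord("req-b", billed_output_tokens=410, visible_output_tokens=400),
]
for request_id, extra, ratio in flag_discrepancies(logs):
    print(f"{request_id}: {extra} unexplained tokens ({ratio:.0%})")
```

A check like this only surfaces the gap; it does not explain where the extra tokens come from, and a local token count may itself differ slightly from the provider's.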

In summary, this revelation underscores the need for AI providers like Anthropic to prioritize billing transparency, potentially leading to updated APIs that display all tokens used. As AI adoption grows, such issues could drive industry standards for clearer usage tracking.
