A new tool called Grainulator restricts AI responses to claims backed by verifiable citations, aiming to curb misinformation in AI outputs. A recent Hacker News discussion highlighted the approach as one that could change how much we trust AI-generated content.

This article was inspired by "The tool that won't let AI say anything it can't cite" from Hacker News.

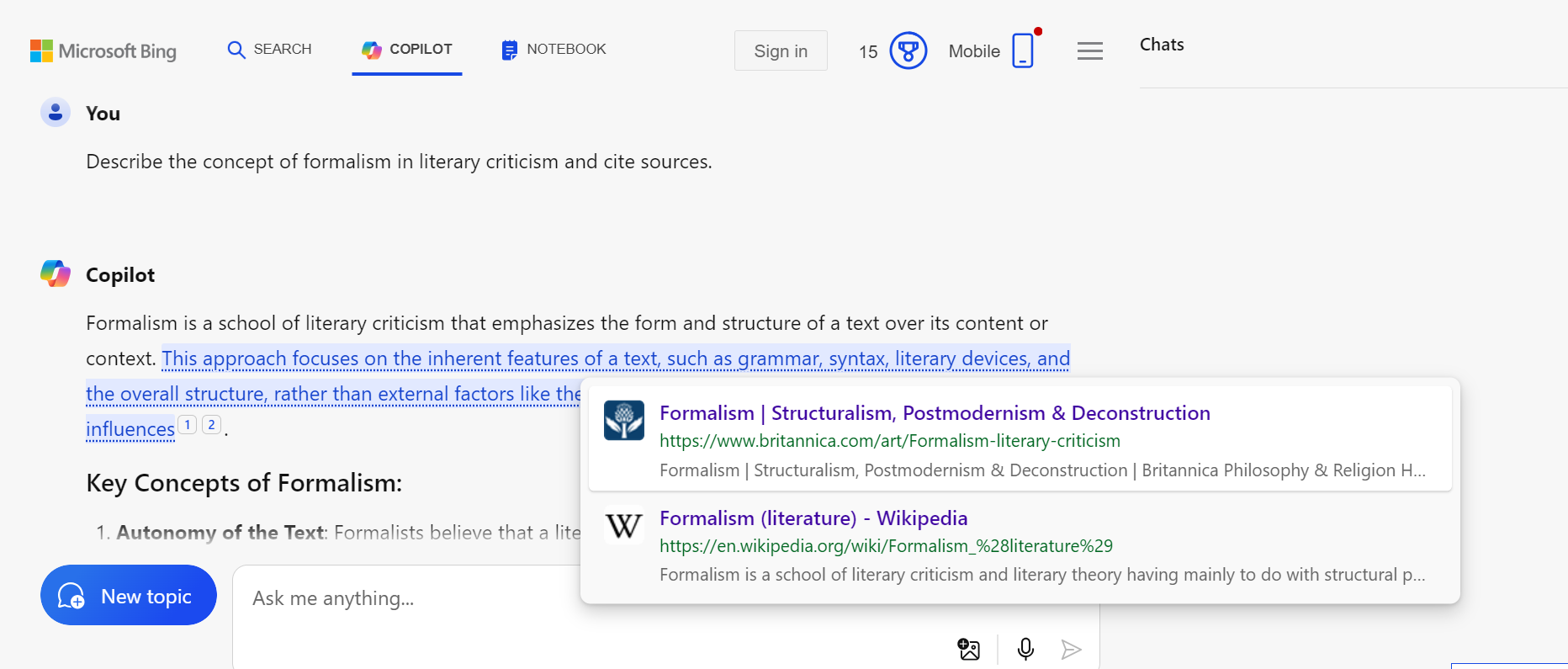

How It Works

Grainulator integrates with AI models to verify that every factual statement includes a source. For instance, it might block or flag responses lacking citations, using automated checks against databases or references. The HN post notes this as a step toward reliable AI, with the tool available via its GitHub repository.
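To make the idea concrete, here is a minimal sketch of a citation gate. It is not Grainulator's actual implementation, which the HN post does not detail. The names `check_citations` and `enforce`, the citation pattern (a bracketed number such as `[1]` or a bare URL), and the naive sentence splitter are all assumptions made for illustration. A real tool would also need to confirm that each cited source supports the claim, which this sketch does not attempt.

```python
import re
from dataclasses import dataclass
from typing import List

# Assumption: a "citation" is a bracketed number like [1] or a bare URL.
CITATION_PATTERN = re.compile(r"\[\d+\]|https?://\S+")


@dataclass
class CheckedSentence:
    text: str
    cited: bool


def check_citations(response: str) -> List[CheckedSentence]:
    """Split a response into sentences and mark which ones carry a citation."""
    # Naive split: sentence-ending punctuation followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", response)
    sentences = [p.strip() for p in parts if p.strip()]
    return [CheckedSentence(s, bool(CITATION_PATTERN.search(s))) for s in sentences]


def enforce(response: str) -> str:
    """Keep only cited sentences; an empty string means nothing passed the gate."""
    return " ".join(c.text for c in check_citations(response) if c.cited)


if __name__ == "__main__":
    draft = "Water boils at 100 C at sea level [1]. The moon is made of cheese."
    for sentence in check_citations(draft):
        status = "ok" if sentence.cited else "FLAGGED"
        print(f"{status}: {sentence.text}")
    print(enforce(draft))
```

Here `check_citations` corresponds to the "flag" behavior and `enforce` to the "block" behavior described above. The sketch only checks that a citation marker is present, so a sentence with a made-up reference would still pass.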

Community Reactions on Hacker News

The discussion garnered 34 points and 14 comments, indicating moderate interest. Some commenters praised the tool for tackling AI accuracy problems, such as hallucinations in chatbots, while others worried that citation checks could slow response times. One user suggested applications in education, where cited AI output could aid learning.

Bottom line: Grainulator makes AI outputs more trustworthy by mandating citations, potentially setting a new standard for ethical AI tools.

Why This Matters for AI Ethics

Tools like this fill a gap in AI development, where models often generate unverified information. AI ethics frameworks commonly emphasize source verification, and Grainulator offers a practical implementation of that principle; its HN traction suggests developers are interested. For developers, this could mean easier integration of citation checks, reducing risk in applications like news summarization.

Technical Context

This innovation could accelerate adoption of verifiable AI, especially in fields like journalism, by embedding citation requirements into core workflows. As AI tools evolve, those prioritizing evidence-based outputs will likely gain prominence in professional settings.
