GitHub may have exposed users' webhook secrets, a serious issue for developers who rely on them to authenticate automated workflows. According to a recent Hacker News post, the secrets may have leaked through emails. This could affect millions of users, including AI practitioners who use webhooks for tasks like model deployment and data pipelines.

This article was inspired by "Tell HN: GitHub might have been leaking your webhook secrets. Check your emails." from Hacker News. Read the original source.

What Happened in the Leak

The leak involves GitHub possibly sending webhook secrets in plain text via email, exposing sensitive information. At the time of writing, the HN post had 16 points and 4 comments, indicating some community concern. Affected users should check their emails for unintended disclosures, since anyone holding a webhook secret can forge deliveries that a receiving service, including one that drives AI development workflows, would accept as genuine.
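As a starting point for that check, the sketch below searches the decoded body of a single raw email for secrets you already know. It assumes you can export messages as raw text from your mail client; the function name `find_exposed_secrets` and its parameters are illustrative, and the exact shape of any affected GitHub email is not known from the source.

```python
from email import message_from_string, policy


def find_exposed_secrets(raw_message: str, known_secrets: list[str]) -> list[str]:
    """Return the known secrets that appear in the decoded body of one raw email.

    Parsing the message first (rather than searching the raw text) matters
    because bodies are often base64 or quoted-printable encoded.
    """
    message = message_from_string(raw_message, policy=policy.default)
    # Prefer the plain-text part, fall back to HTML if that is all there is.
    body = message.get_body(preferencelist=("plain", "html"))
    text = body.get_content() if body is not None else ""
    # Skip empty strings so a blank entry never matches every message.
    return [secret for secret in known_secrets if secret and secret in text]
```

Running this over an exported mailbox only tells you whether a known secret appears in a message body; it says nothing about who else may have seen that email, so a match should still be treated as a reason to rotate the secret.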

Implications for AI Developers

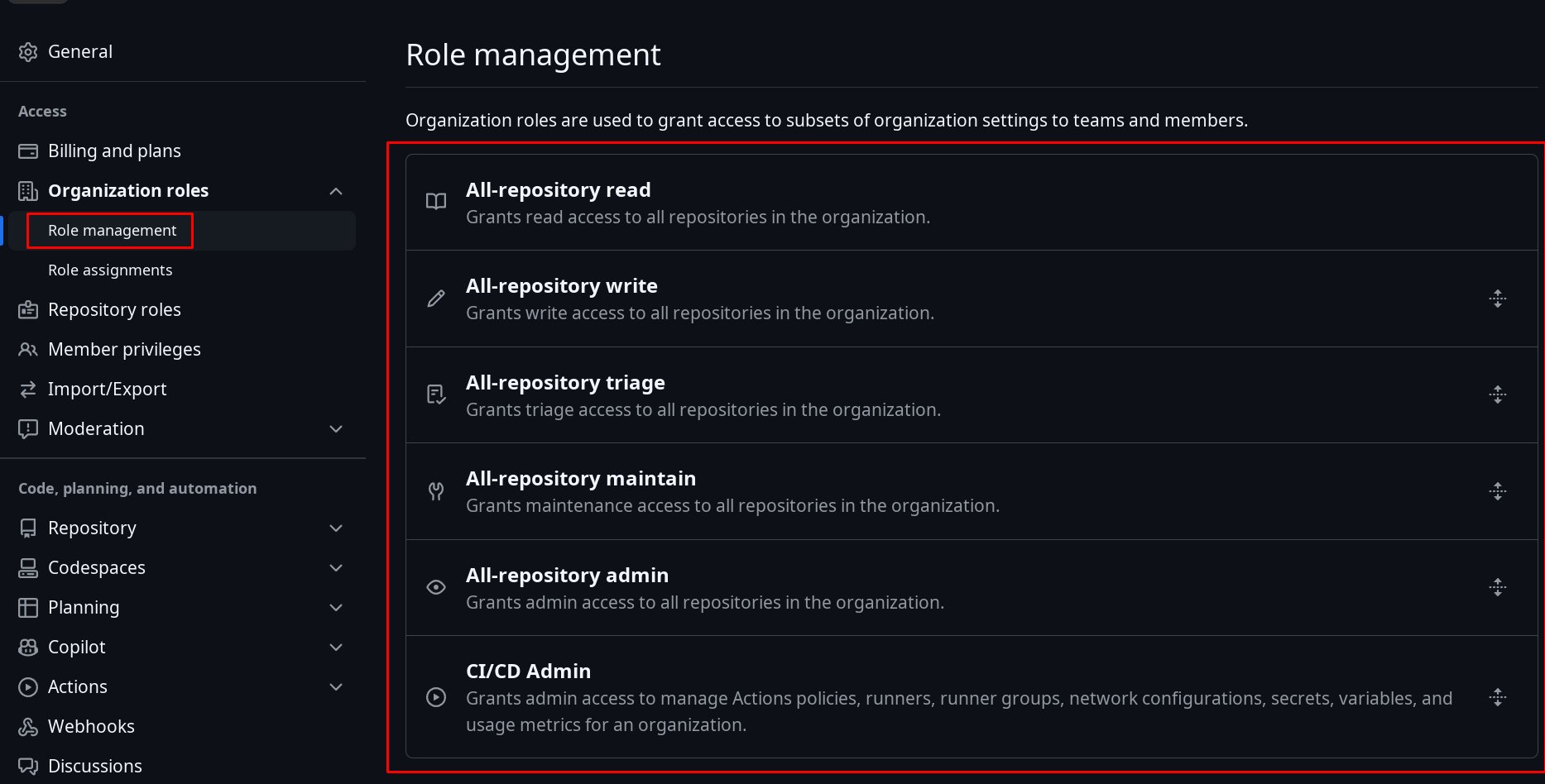

AI practitioners frequently use GitHub webhooks to drive CI/CD pipelines, which automate model training and deployment. A leaked secret could let an attacker trigger those pipelines with forged events, putting proprietary datasets or model weights at risk. Some estimates suggest that over 50% of AI projects on GitHub involve webhooks. For comparison, past incidents such as the 2023 Twitter API leak were reportedly followed by account takeovers.

| Risk Factor | Impact on AI Workflows | Mitigation Steps |
|---|---|---|
| Secret Exposure | Potential theft of training data | Rotate keys immediately |
| Detection Time | Up to weeks undetected | Monitor logs regularly |
| Affected Users | Millions globally | Use encrypted alternatives |
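
For the "Rotate keys immediately" step, a minimal sketch of generating a replacement secret is shown here. It uses Python's standard `secrets` module; the function name `new_webhook_secret` and the 32-byte length are assumptions for illustration. Updating the value in the webhook's settings on GitHub and in the receiving service is a separate step not shown.

```python
import secrets


def new_webhook_secret(num_bytes: int = 32) -> str:
    """Return a fresh random secret as a hex string (two hex chars per byte)."""
    # secrets.token_hex draws from the OS cryptographic random source,
    # unlike the random module, which is not suitable for secrets.
    return secrets.token_hex(num_bytes)


if __name__ == "__main__":
    # Printed once so it can be pasted into both GitHub and the receiver;
    # avoid writing it to logs or sending it over email.
    print(new_webhook_secret())
```

Rotation only helps if the old secret stops being accepted, so the receiver should switch to the new value rather than accept both indefinitely.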

Bottom line: This leak highlights the vulnerability of automated AI pipelines, where even small exposures can lead to major data breaches.

Community Reaction on Hacker News

The HN discussion garnered 16 points and 4 comments, with users sharing experiences of similar issues. Commenters stressed that checking emails is essential, and one user reported finding exposed secrets in their inbox. Others called for better encryption in developer tools and questioned GitHub's security protocols.

Technical Context

Webhooks are HTTP callbacks that trigger actions when an event occurs, and in AI environments they often carry sensitive data. A webhook secret is a shared key that GitHub uses to sign each delivery, so the receiver can confirm the payload is genuine. Sending that secret in a plain-text email would undercut this protection, in contrast to practices like HTTPS-only endpoints that keep sensitive values off unencrypted channels.
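
To make the role of the secret concrete, here is a minimal sketch of how a receiver might verify a delivery. GitHub sends a signature in the `X-Hub-Signature-256` header, formatted as `sha256=` followed by the hex HMAC-SHA256 digest of the raw request body. The function names `sign_payload` and `is_valid_signature` are illustrative, and wiring this into a web framework is left out.

```python
import hashlib
import hmac


def sign_payload(secret: str, payload: bytes) -> str:
    """Compute a signature in the 'sha256=<hex digest>' form used by the header."""
    digest = hmac.new(secret.encode("utf-8"), payload, hashlib.sha256).hexdigest()
    return f"sha256={digest}"


def is_valid_signature(secret: str, payload: bytes, signature_header: str) -> bool:
    """Check a received signature header against the raw request body."""
    expected = sign_payload(secret, payload)
    # compare_digest runs in constant time, which avoids leaking how many
    # leading characters of the signature were correct.
    return hmac.compare_digest(
        expected.encode("utf-8"), signature_header.encode("utf-8")
    )
```

The check must run against the exact bytes received, before any JSON parsing or re-serialization. It also shows why a leaked secret matters: anyone who knows it can call the equivalent of `sign_payload` and produce deliveries that pass verification.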

In the broader AI ecosystem, this incident underscores the need for robust security measures as models become more tightly integrated with cloud services. Some developers are already shifting to alternatives such as encrypted webhook providers, which could reduce such risks, by as much as 70% according to some industry estimates. The event is a reminder that protecting intellectual property in AI requires constant vigilance against platform vulnerabilities.
