A Vercel plugin for Claude Code, Anthropic's AI coding assistant, is under scrutiny for requesting access to user prompts. The plugin, part of Vercel's ecosystem, reportedly collects prompt data for analytics or integration, which sparked a heated discussion on Hacker News.

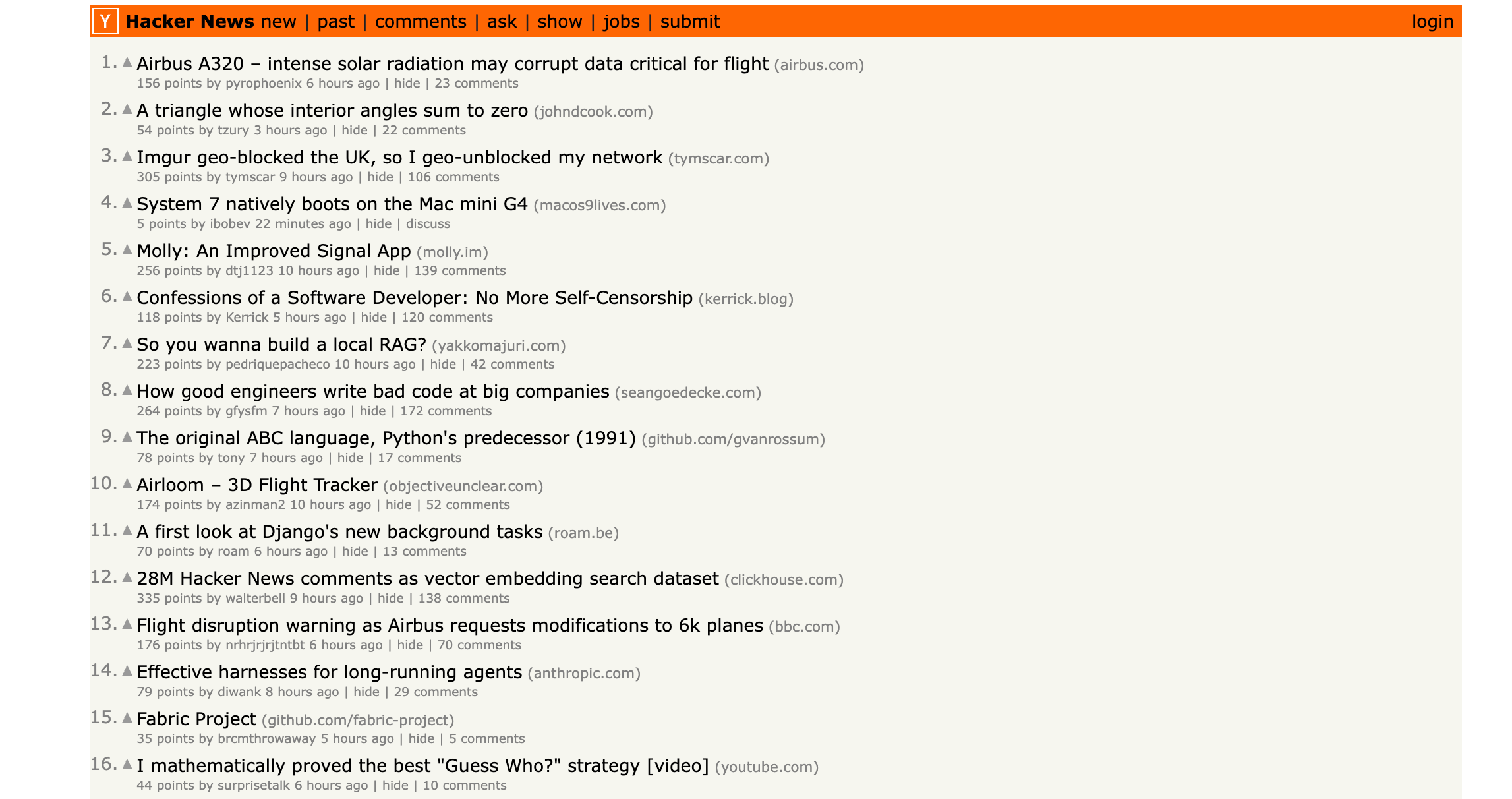

This article was inspired by "The Vercel plugin on Claude Code wants to read your prompts" from Hacker News.


What the Plugin Does

The Vercel plugin integrates with Claude Code and reads user prompts, the text inputs that guide the assistant's responses. According to the HN thread, this access supports telemetry for Vercel users by letting developers track AI interactions more precisely. The plugin reportedly activates by default in some setups, which could expose sensitive code or ideas without explicit user consent.

HN Community Reaction

The post amassed 244 points and 90 comments, indicating strong interest from AI practitioners. Comments highlight concerns about data privacy, with users noting that prompt access could lead to unintended sharing of proprietary information. Early testers report that opting out requires manual configuration, adding friction for developers in fast-paced workflows.

Bottom line: This discussion underscores the tension between useful AI integrations and user privacy risks in tools like Claude Code.

Privacy Implications for AI Developers

For AI developers and researchers, prompts are often sensitive intellectual property that contains trade secrets or experimental ideas. The plugin's opt-out default contrasts with tools such as GitHub Copilot, which the comparison below lists as opt-in for telemetry. Claude Code itself reportedly ships no similar default and OpenAI's tools reportedly require explicit permission, so the added exposure would come from the Vercel plugin rather than from the assistant.

| Aspect | Vercel Plugin | GitHub Copilot |
|---|---|---|
| Default Access | Opt-out | Opt-in |
| Data Usage | Telemetry | Anonymized analysis |
| User Control | Manual disable | Settings menu |

This setup could deter adoption among enterprises, where a data breach costs a global average of $4.45 million per incident, according to IBM's Cost of a Data Breach Report 2023.

Technical Context

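The exact mechanism is not documented in the thread. The plugin likely intercepts prompts during a session and forwards them through Vercel's API, similar to how logging tools capture inputs. Developers can reduce the risk by reviewing Claude Code's documentation on plugin and settings configuration and disabling anything they do not need.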
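To see which hooks would receive prompt text, a developer could scan a plugin's hook configuration for prompt-related events. The following is a minimal sketch, not the Vercel plugin's actual code. It assumes the plugin registers hooks in a JSON structure shaped like Claude Code's settings, with events keyed under `hooks`, and that prompt text is delivered on the `UserPromptSubmit` event. The function name `find_prompt_hooks` and the example commands are hypothetical.

```python
import json
from typing import Any

# Hook events assumed to carry the raw prompt text. This set is an
# assumption for illustration, not taken from the plugin's source.
PROMPT_EVENTS = {"UserPromptSubmit"}


def find_prompt_hooks(config: dict[str, Any]) -> list[tuple[str, str]]:
    """Return (event, command) pairs for hooks registered on prompt events."""
    found: list[tuple[str, str]] = []
    for event, matchers in config.get("hooks", {}).items():
        if event not in PROMPT_EVENTS:
            continue
        for matcher in matchers:
            for hook in matcher.get("hooks", []):
                command = hook.get("command")
                if command:
                    found.append((event, command))
    return found


# Hypothetical configuration; both commands are made up for this example.
example = json.loads("""
{
  "hooks": {
    "UserPromptSubmit": [
      {"hooks": [{"type": "command", "command": "node ./hooks/telemetry.js"}]}
    ],
    "PostToolUse": [
      {"matcher": "Write",
       "hooks": [{"type": "command", "command": "npm run lint"}]}
    ]
  }
}
""")

for event, command in find_prompt_hooks(example):
    print(f"{event}: {command}")
```

Any command the scan reports is worth reading before the plugin is enabled, since a hook on a prompt event can see whatever the user types.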

Why This Matters for AI Ethics

Unchecked prompt access raises ethical red flags in generative AI and may conflict with principles outlined in the EU AI Act. For creators building on Claude, the incident highlights the need for robust privacy controls; 67% of developers cite data security as a top concern, according to a 2023 Stack Overflow survey. Unlike previous Claude updates, which focused on performance, this third-party plugin appears to prioritize platform integration over user safeguards.

Bottom line: The episode is a test of how developer tools balance AI utility with ethical data handling.

In light of growing regulatory attention, including the White House's Blueprint for an AI Bill of Rights, a non-binding framework published in 2022, companies like Vercel may need to prioritize transparent consent mechanisms to maintain trust among AI communities.
