Why Qwen3-Next?

Traditional language models often struggle to maintain context over long documents. Qwen3-Next targets this problem: it natively supports a 262K-token context that can be extended to 1 million tokens, so extensive content can be processed within a single context window.

What It Is

Qwen3-Next is a large language model built on a hybrid attention mechanism that combines Gated DeltaNet (linear attention) with Gated Attention (traditional attention with gating). It also uses a high-sparsity Mixture-of-Experts (MoE) architecture that activates only a small subset of experts per token, which keeps per-token compute low relative to the total parameter count.
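To make "activating only a small subset of experts per token" concrete, here is a minimal sketch of generic top-k MoE routing in plain Python. It is not Qwen3-Next's actual router: the function names (`route_top_k`, `moe_forward`), the random router scores, and the toy "experts" are all hypothetical, and only the 512-expert, 10-active configuration comes from this article.

```python
import math
import random


def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and normalize their weights.

    Returns (expert_index, weight) pairs, highest score first.
    The weights sum to 1.
    """
    if not 0 < k <= len(router_logits):
        raise ValueError("k must be between 1 and the number of experts")
    # Indices of the k largest logits, highest first.
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Softmax over the selected logits only (subtract the max for stability).
    peak = router_logits[top[0]]
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of the selected experts only."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


NUM_EXPERTS = 512  # from the article
TOP_K = 10         # from the article

rng = random.Random(0)
# Hypothetical stand-in for a learned router: random scores for one token.
router_logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_top_k(router_logits, TOP_K)
print(f"{len(selected)} of {NUM_EXPERTS} experts run for this token")
```

The point of the sketch is that the other 502 experts are never evaluated for this token, which is where the compute savings of a sparse MoE layer come from.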

Key Features

- Hybrid attention combining linear and traditional attention

- High-sparsity MoE architecture with 512 experts and only 10 activated per token

- Native support for 262K tokens, extendable to 1M tokens via YaRN scaling

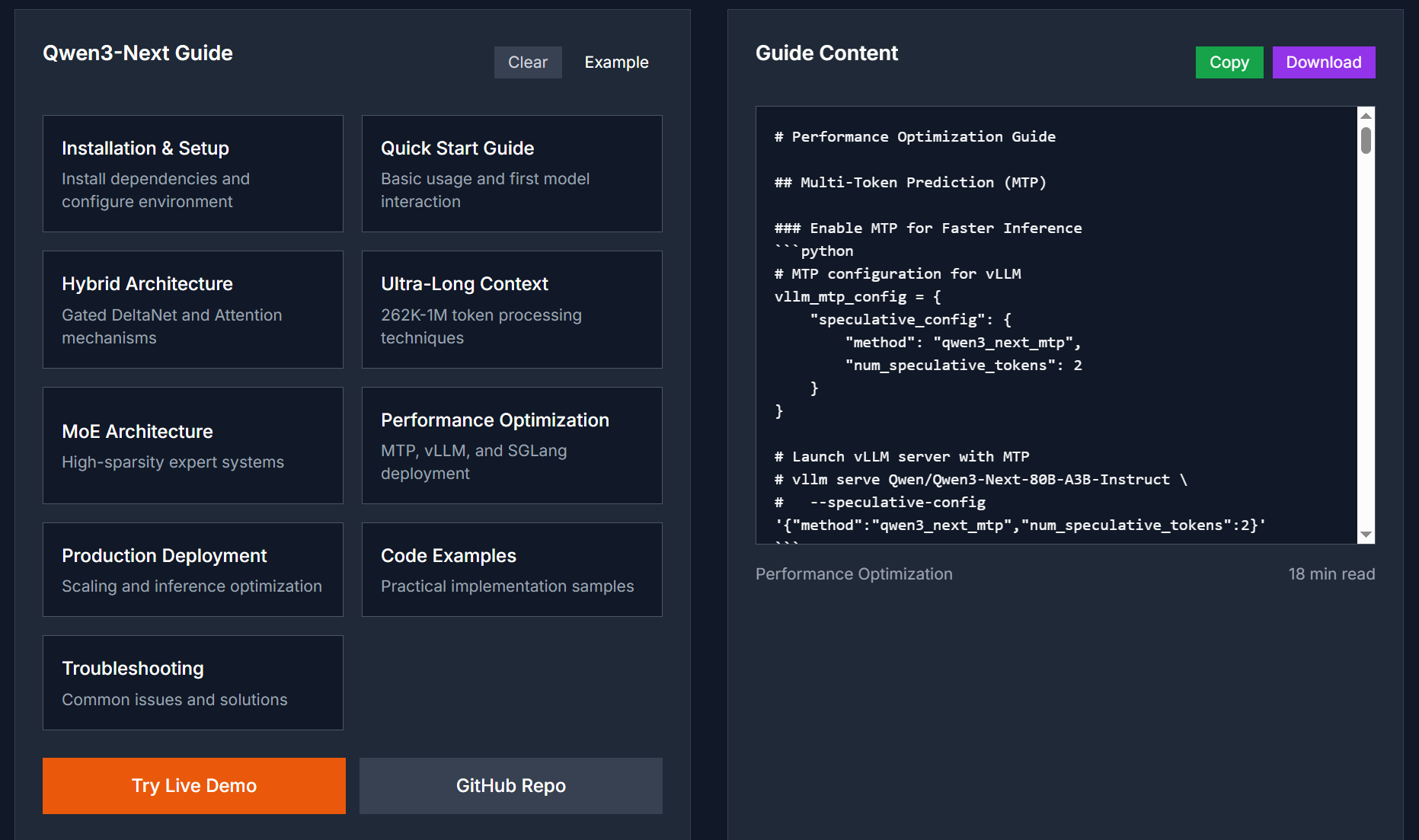

- Multi-Token Prediction (MTP) for faster inference

- Open-source under Apache-2.0 license
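The jump from the 262K native context to 1M tokens in the list above implies a YaRN scaling factor of roughly 4. The sketch below works that out. It assumes "262K" means 262,144 (2^18) tokens, and the `rope_scaling` dictionary only mirrors the key names commonly seen in Hugging Face-style model configs; treat those keys as an assumption and check the model card before relying on them.

```python
import math

NATIVE_CONTEXT = 262_144    # "262K" in the article, assumed here to be 2**18
TARGET_CONTEXT = 1_000_000  # "1M" in the article


def yarn_factor(target_tokens, native_tokens):
    """Smallest whole-number scaling factor that covers target_tokens."""
    return max(1, math.ceil(target_tokens / native_tokens))


factor = yarn_factor(TARGET_CONTEXT, NATIVE_CONTEXT)

# Hypothetical config fragment; key names are an assumption, not a
# confirmed Qwen3-Next setting.
rope_scaling = {
    "rope_type": "yarn",
    "factor": float(factor),
    "original_max_position_embeddings": NATIVE_CONTEXT,
}

print(rope_scaling)
print("positions covered:", factor * NATIVE_CONTEXT)
```

With these assumptions the factor comes out to 4, which covers 1,048,576 positions, slightly more than the advertised 1M.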

Performance

Qwen3-Next has 80 billion parameters in total but activates only about 3 billion of them per token, so its per-token compute is closer to that of a much smaller dense model. It achieves state-of-the-art performance on various benchmarks, including ultra-long document analysis and complex reasoning tasks.
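A quick back-of-the-envelope calculation shows how sparse this is. The snippet uses only the figures quoted in this article (80B total, 3B active, 512 experts, 10 active); the variable names are illustrative.

```python
TOTAL_PARAMS = 80e9    # from the article
ACTIVE_PARAMS = 3e9    # from the article
NUM_EXPERTS = 512      # from the article
ACTIVE_EXPERTS = 10    # from the article

# Share of all parameters that take part in processing one token.
active_param_share = ACTIVE_PARAMS / TOTAL_PARAMS
# Share of experts selected for one token.
active_expert_share = ACTIVE_EXPERTS / NUM_EXPERTS

print(f"active parameters: {active_param_share:.2%}")   # 3.75%
print(f"active experts:    {active_expert_share:.2%}")  # 1.95%
```

The parameter share (3.75%) is larger than the expert share (1.95%). A plausible reading, not confirmed by the article, is that non-expert components such as the attention layers and embeddings are used by every token regardless of routing.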

Use Cases

- Processing and analyzing documents up to 1 million tokens

- Code generation and refactoring across multiple programming languages

- Advanced reasoning tasks requiring long-term memory and context

- Building sophisticated chatbots and virtual assistants with long conversation memory

- Content summarization, translation services, and information extraction

Future Roadmap

- Enhanced fine-tuning capabilities for domain-specific applications

- Improved support for additional languages and dialects

- Expansion of multimodal interaction features

- Continuous performance optimization and benchmarking

Conclusion

Qwen3-Next is a strong option for applications that must process extensive content while keeping context intact. Its hybrid attention, sparse MoE design, and Apache-2.0 license make it a practical choice for developers, researchers, and businesses seeking advanced AI capabilities.
