A Fresh Alert from Hacker News

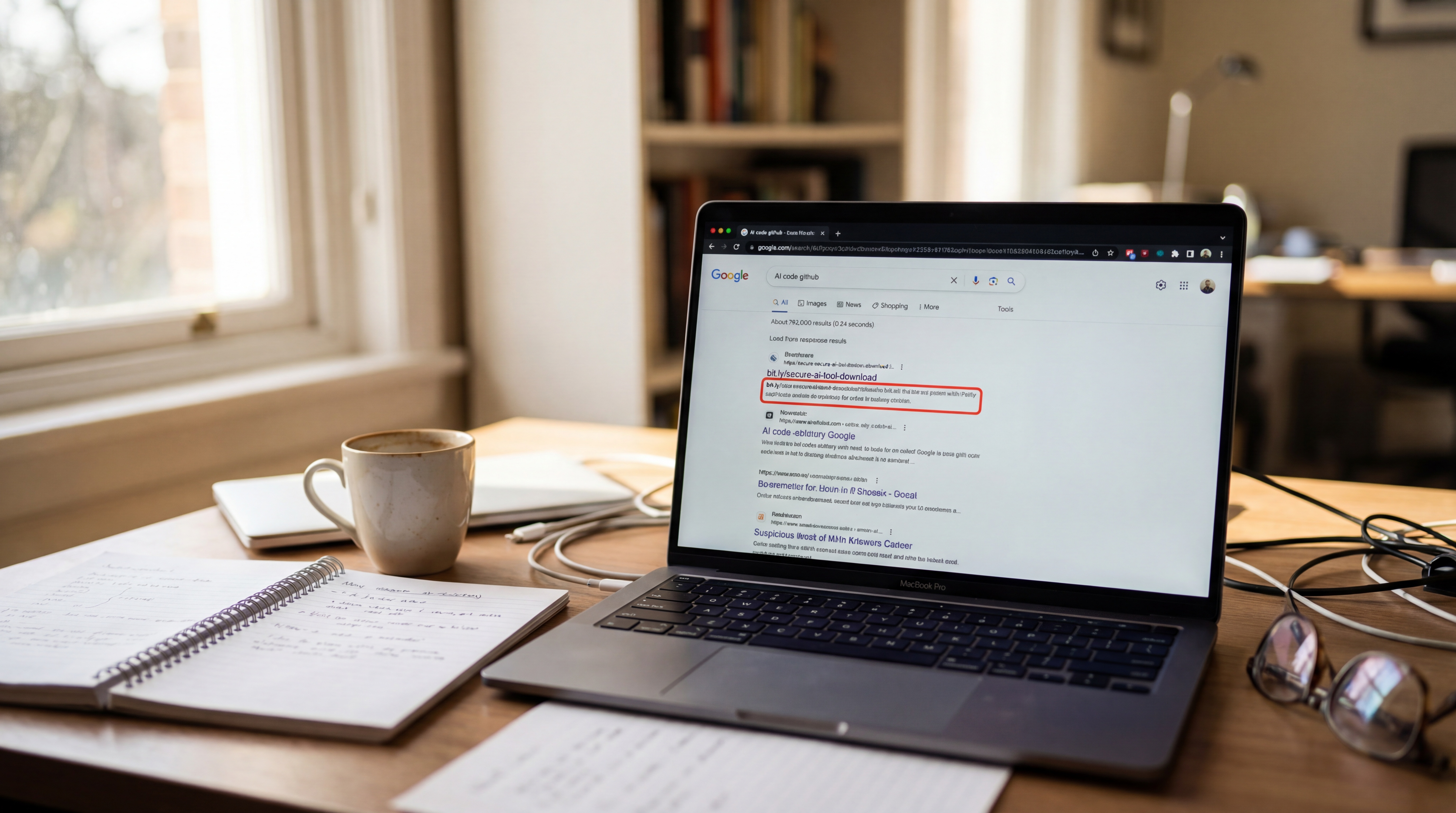

Hacker News users have flagged a serious security concern: the top Google search result for "Claude Code" reportedly leads to malicious content. Claude Code is Anthropic's AI coding tool, so developers often search for it by name, and according to the thread the top result serves malware or phishing content instead of the official site. Last year, similar issues surfaced with other AI tools, underscoring the ongoing risk of trusting search ranking alone.

This article was inspired by "PSA: Top Google Result for Claude Code Is Malicious" from Hacker News.


The Malicious Link Explained

The problematic result appears to be a deceptive website that mimics legitimate Claude resources and may host scripts that steal data or install unwanted software. According to the Hacker News thread, the site ranks first because of SEO manipulation (often called SEO poisoning), a common tactic for pushing malicious pages above genuine ones. Early reports indicate it targets developers looking for Claude code snippets and setup instructions, exploiting the tool's growing popularity.

Community Feedback on Hacker News

The Hacker News thread, which had 32 points and 10 comments, shows users expressing frustration and concern over search engine reliability. Commenters suggest the problem is not isolated, since similar malicious results have appeared for other AI tools such as ChatGPT. They recommend verifying a source before clicking and point out that incidents like this erode trust in online AI communities.

Risks for AI Developers

For AI practitioners, a poisoned search result turns a routine lookup into an attack vector, potentially leading to data breaches or compromised projects. The original post notes that clicking the link could expose personal information, and the stakes are higher for anyone handling sensitive code or credentials. Unlike broad phishing campaigns, this one targets a niche AI search term, which makes it a calculated threat aimed at developers in the generative AI space.

How to Stay Safe

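Users can reduce the risk by checking a URL before visiting it, or by using a search operator such as "site:" to restrict results to a domain they already trust. Scanners like VirusTotal can check a suspicious URL, and according to the article they have flagged similar sites. Community threads, for example on Reddit, sometimes collect links to verified Claude resources, though these should also be checked against the official site. The broader point is that cross-verification belongs in any AI workflow, especially as search rankings shift.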
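The manual URL check described above can be partly automated. The following is a minimal sketch, not a complete defense: it only compares a link's hostname against a short allowlist. The function name is_trusted_url and the contents of TRUSTED_DOMAINS are assumptions made for illustration; readers should fill the list with domains they have verified themselves.

```python
from urllib.parse import urlsplit

# Assumption: domains the reader has independently verified as official.
# This list is illustrative, not an authoritative inventory.
TRUSTED_DOMAINS = {"anthropic.com", "claude.ai"}


def is_trusted_url(url: str, trusted: set[str] = TRUSTED_DOMAINS) -> bool:
    """Return True only for HTTPS URLs whose host is a trusted domain
    or a subdomain of one."""
    try:
        parts = urlsplit(url)
    except ValueError:
        # Malformed URLs (for example a broken IPv6 literal) are rejected.
        return False
    if parts.scheme != "https":
        return False
    # hostname drops any "user@" prefix and port, and is lowercased.
    host = (parts.hostname or "").rstrip(".")
    # Match the exact domain or a dot-separated subdomain, so that
    # "anthropic.com.evil.example" does not pass.
    return any(host == d or host.endswith("." + d) for d in trusted)


if __name__ == "__main__":
    for candidate in [
        "https://docs.anthropic.com/",
        "https://anthropic.com.evil.example/claude-code",
        "https://anthropic.com@evil.example/",
    ]:
        print(candidate, is_trusted_url(candidate))
```

Matching on the parsed hostname instead of searching the raw string is the key design choice here, because lookalike links often place a trusted name in a subdomain prefix, path, or userinfo field. A check like this cannot tell whether a trusted site has itself been compromised, so it complements a scanner such as VirusTotal and does not replace one.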

In light of this incident, AI companies like Anthropic may need to make their official documentation easier to find, giving developers a safer route to it and reducing their reliance on general search engines.
