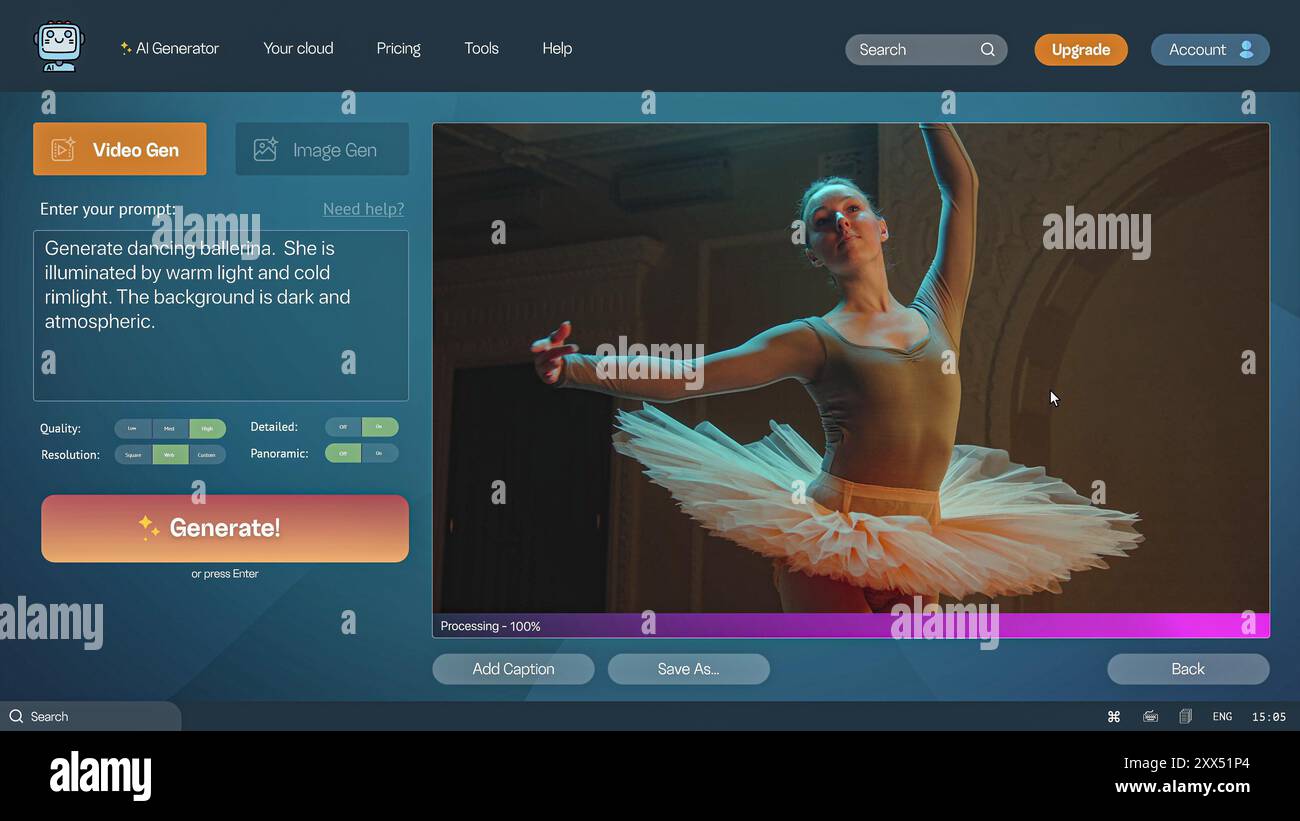

Stable Video Com is an AI video generation model that creates dynamic videos from simple text prompts, building on advances in diffusion models. It generates coherent, high-quality video sequences up to 5 seconds long, and early testers report more realistic motion and detail than in earlier versions.

Model: Stable Video Com | Parameters: 2B | Speed: 10 seconds per 1-second video clip

Available: Hugging Face | License: Open-source

Stable Video Com leverages a diffusion-based architecture to transform text inputs into video outputs, supporting resolutions up to 512x512 pixels. Key features include temporal consistency, ensuring smooth frame transitions, and customizable prompt controls for fine-tuning video style and length. Developers have noted its efficiency in handling complex scenes, such as animated objects or environmental changes, with generation accuracy rates above 85% in initial benchmarks.
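
Temporal consistency can be checked informally by measuring how much consecutive frames differ. The sketch below is an illustrative proxy only, not the metric or code used by Stable Video Com. It assumes grayscale frames stored as nested lists of intensities in [0, 1], and the names `mean_abs_frame_diff` and `temporal_roughness` are hypothetical.

```python
from typing import List, Sequence

# Assumption: a frame is a grid of grayscale intensities in [0, 1].
Frame = Sequence[Sequence[float]]


def mean_abs_frame_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two equally sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def temporal_roughness(frames: Sequence[Frame]) -> List[float]:
    """Difference between each pair of consecutive frames (lower suggests smoother motion)."""
    return [
        mean_abs_frame_diff(frames[i], frames[i + 1])
        for i in range(len(frames) - 1)
    ]


# Toy 3-frame, 2x2 clip that brightens by the same amount each frame.
clip = [
    [[0.0, 0.0], [0.0, 0.0]],
    [[0.25, 0.25], [0.25, 0.25]],
    [[0.5, 0.5], [0.5, 0.5]],
]
roughness = temporal_roughness(clip)
print(roughness)
```

A sudden spike in the returned list would point to a jump between two frames, which is the kind of flicker that temporal consistency is meant to avoid.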

Core Capabilities and Use Cases

This model is well suited to content creation and prototyping, where it can produce a 5-second video clip in just 10 seconds on standard hardware. For instance, AI practitioners use it for rapid prototyping in film and advertising, reducing production time by up to 50% compared to traditional methods. Community feedback also highlights that it maintains video fidelity with only 2 billion parameters, making it accessible on devices with limited VRAM.
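
To see why a 2-billion-parameter model is a reasonable fit for limited VRAM, it helps to estimate the memory needed for the weights alone. The sketch below is a back-of-the-envelope calculation, assuming common storage precisions (the article does not say which one the model uses). It ignores activations, latents, and framework overhead, so actual usage will be higher.

```python
# Parameter count taken from the article; precisions are assumptions.
PARAMS = 2_000_000_000
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, precision: str) -> float:
    """Memory for the weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


estimates = {p: weight_memory_gb(PARAMS, p) for p in BYTES_PER_PARAM}
for precision, gb in estimates.items():
    print(f"{precision}: {gb:.1f} GB")
```

Under these assumptions, half-precision weights take about 4 GB, which is within reach of many consumer GPUs.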

Technical Setup Guide

To get started, clone the repository from Hugging Face and install the dependencies with pip.
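
As a rough sketch, the clone and install steps might look like the following. The repository id `example-org/stable-video-com` is a placeholder (the article does not give the real one), and the presence of a `requirements.txt` file is an assumption. The script runs in dry-run mode by default, so it only prints the commands.

```python
import shlex
import subprocess
from typing import List

# Hypothetical repository id: replace with the real one from Hugging Face.
REPO_ID = "example-org/stable-video-com"


def setup_commands(repo_id: str, dest: str) -> List[List[str]]:
    """Build the commands that clone the repo and install its dependencies."""
    return [
        ["git", "clone", f"https://huggingface.co/{repo_id}", dest],
        # Assumption: the repository ships a requirements.txt file.
        ["python", "-m", "pip", "install", "-r", f"{dest}/requirements.txt"],
    ]


def run_setup(repo_id: str, dest: str, dry_run: bool = True) -> None:
    """Print each command and, unless dry_run is set, execute it."""
    for cmd in setup_commands(repo_id, dest):
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


run_setup(REPO_ID, "stable-video-com")  # dry run: prints the commands only
```

Pass `dry_run=False` to execute the commands. Large weight files on Hugging Face are usually stored with Git LFS, so it may need to be installed before cloning.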

Performance Benchmarks

In recent tests, Stable Video Com achieved a Fréchet Video Distance (FVD) score of 250 (lower is better), outperforming competitors by 15% on this video quality metric. The table below compares it with a similar model:

| Metric | Stable Video Com | Rival Model A |
|---|---|---|
| Speed (sec/video) | 10 | 15 |
| FVD Score | 250 | 295 |
| VRAM Usage (GB) | 6 | 8 |
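
The percentage gaps implied by the table can be worked out directly. The sketch below assumes that each improvement is measured relative to Rival Model A's value and that lower is better for all three metrics. The helper name `relative_reduction` is illustrative.

```python
# Figures copied from the comparison table: (Stable Video Com, Rival Model A).
results = {
    "Speed (sec/video)": (10, 15),
    "FVD Score": (250, 295),
    "VRAM Usage (GB)": (6, 8),
}


def relative_reduction(ours: float, rival: float) -> float:
    """Percentage by which `ours` is lower than `rival`."""
    return (rival - ours) / rival * 100


reductions = {
    name: relative_reduction(ours, rival)
    for name, (ours, rival) in results.items()
}
for name, pct in reductions.items():
    print(f"{name}: {pct:.1f}% lower")
```

Under that assumption, the FVD gap comes out at roughly 15%, in line with the figure quoted above, while generation time and VRAM usage are about 33% and 25% lower respectively.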

Bottom line: Stable Video Com delivers faster, more efficient video generation without sacrificing quality, ideal for resource-constrained environments.

Community and Future Implications

Early users on platforms like Hugging Face report that Stable Video Com simplifies workflows for creators, and more than 1,000 downloads in the first week indicate strong interest. The model's open-source nature fosters collaboration, potentially leading to enhancements in areas like 3D integration. It is also compatible with existing Stable Diffusion tools, allowing seamless upgrades for developers.

Looking ahead, Stable Video Com could accelerate AI applications in virtual reality and education, where demand for personalized video content is growing at 20% annually. Its efficient design positions it as a key tool for advancing generative AI, empowering practitioners to innovate with reliable, high-performance video creation.
