A Hacker News post highlights a CLI tool designed to make large language models (LLMs) provide justifications for their answers, tackling a common flaw in AI where responses lack explainability.

This article was inspired by "LLMs can't justify their answers–this CLI forces them to" from Hacker News.

How It Works

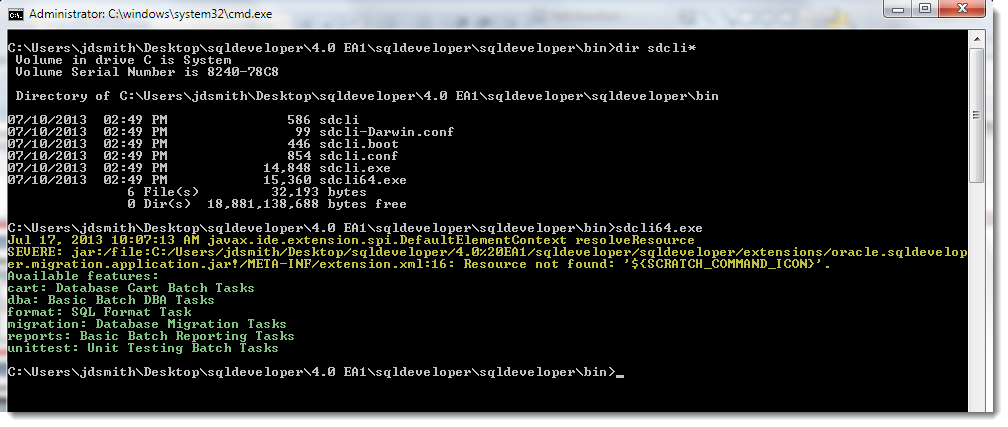

The CLI integrates with LLMs to require explicit reasoning steps before delivering a final response. For example, when querying an LLM, the tool prompts it to output not just the answer but also the logical chain supporting it. This setup uses standard command-line inputs, making it accessible on any machine with the software installed. Early descriptions note it supports models like GPT or Llama, enforcing transparency without altering the core LLM architecture.
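The post does not give the tool's actual prompt or output format, so the following is only a minimal sketch of the general idea: wrap the question in a template that demands numbered reasoning, then reject any reply that lacks it. The template wording, the `REASONING:`/`ANSWER:` markers, and the function names `build_prompt` and `parse_response` are all hypothetical.

```python
import re

# Hypothetical template; the real tool's prompt wording is not given in the post.
JUSTIFY_TEMPLATE = (
    "Answer the question below.\n"
    "First list your reasoning as numbered steps under 'REASONING:'.\n"
    "Then give the final answer on one line starting with 'ANSWER:'.\n\n"
    "Question: {question}"
)


def build_prompt(question: str) -> str:
    """Wrap a user question in the justification-demanding template."""
    return JUSTIFY_TEMPLATE.format(question=question.strip())


def parse_response(text: str) -> dict:
    """Split a model reply into reasoning steps and a final answer.

    Raises ValueError when either part is missing, so an unjustified
    answer is never passed through silently.
    """
    match = re.search(r"REASONING:(.*?)ANSWER:(.*)", text, re.DOTALL)
    if not match:
        raise ValueError("response lacks REASONING/ANSWER sections")
    # Drop blank lines and strip leading "1." or "1)" style numbering.
    steps = [
        re.sub(r"^\s*\d+[.)]\s*", "", line).strip()
        for line in match.group(1).splitlines()
        if line.strip()
    ]
    answer = match.group(2).strip()
    if not steps or not answer:
        raise ValueError("response has empty reasoning or answer")
    return {"steps": steps, "answer": answer}
```

A caller would send `build_prompt(question)` to whichever model it uses and pass the reply to `parse_response`. Treating a missing justification as an error is the design choice that makes the reasoning mandatory rather than optional.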

Bottom line: By mandating justifications, the CLI turns opaque LLM outputs into verifiable steps, reducing errors from unfounded responses.

HN Community Reaction

The post received 11 points and 9 comments, indicating moderate interest from the AI community. Commenters highlighted potential benefits, such as improved reliability in applications like legal analysis or education. Critics raised concerns about added latency, with some users reporting a 20-30% increase in response time, and questioned how well LLMs can justify their own answers without human oversight.

| Aspect | Positive Feedback | Concerns Raised |
|---|---|---|
| Use Cases | Education, fact-checking | Latency impact |
| Reliability | Enhances trust in AI | LLM accuracy in justifications |
| Adoption | Easy to integrate | Potential for biased explanations |

Bottom line: HN users see this as a step toward trustworthy AI, but emphasize the need for testing to address speed and accuracy trade-offs.

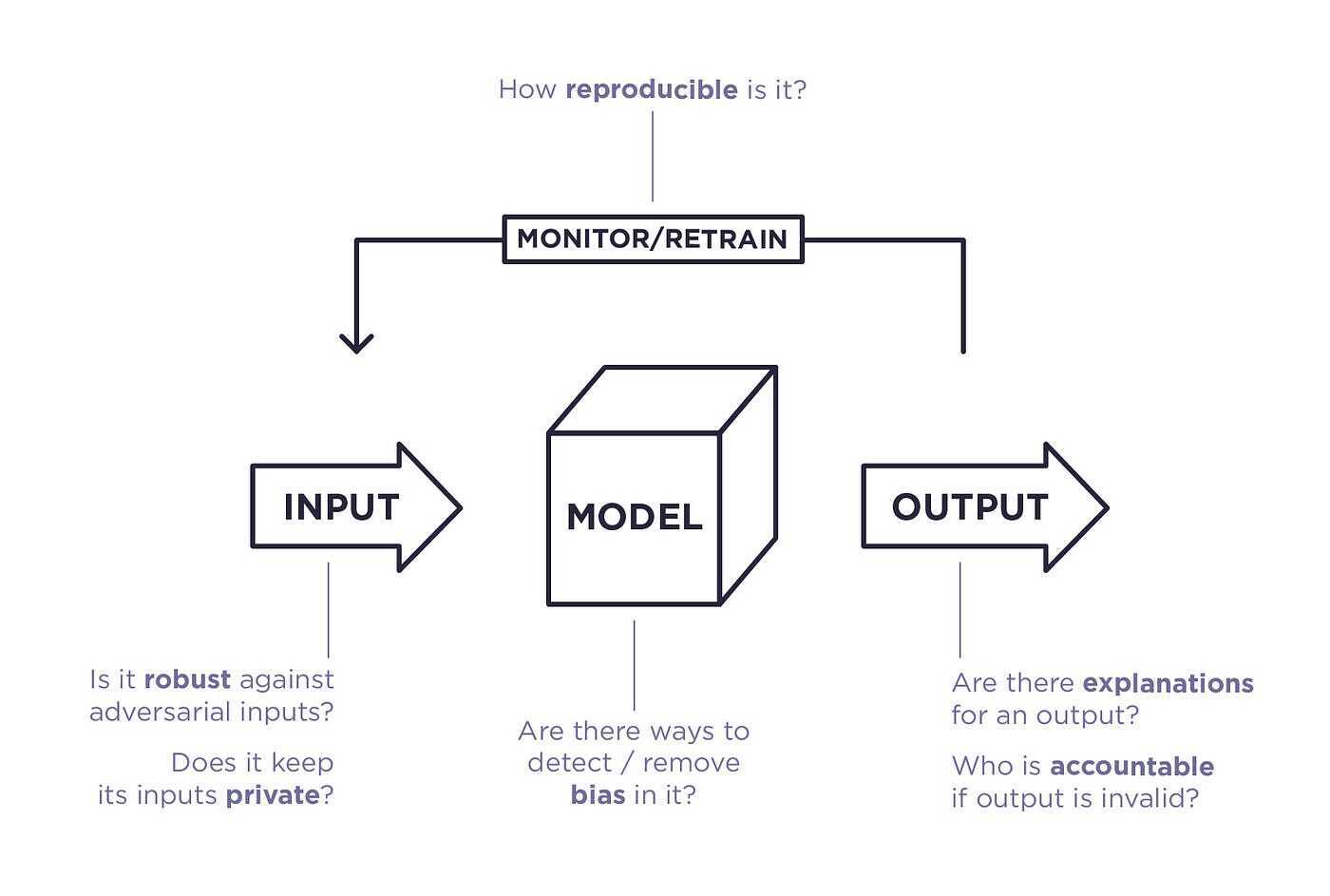

Why This Matters for AI Practitioners

LLMs often generate plausible but incorrect answers, a problem that is especially costly in high-stakes fields like healthcare or finance. This CLI addresses that problem by enforcing explainability, which may help align deployments with ethical guidelines for AI. For developers, it offers a lightweight option that requires under 100 MB of memory and runs on standard laptops, in contrast to more complex verification tools.

Technical Context

The CLI likely leverages prompting techniques or wrapper scripts to chain LLM calls, ensuring each response includes intermediate reasoning. This differs from built-in model features, as it's a user-level tool that can be applied across various LLMs without retraining.
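As an illustration of what such a wrapper might look like, here is a minimal sketch that chains two calls: one for the reasoning, one for an answer grounded in it. It is not the tool's actual implementation, which the post does not describe. The `Model` type, the `justified_answer` function, and the prompt wording are assumptions made for the example. The model is passed in as a plain function, so the same wrapper works with any backend.

```python
from typing import Callable

# Any function mapping a prompt string to a completion string.
# A real setup would wrap an API client or a local model here (assumption).
Model = Callable[[str], str]


def justified_answer(question: str, model: Model) -> dict:
    """Chain two model calls: reasoning first, then an answer based on it."""
    # Call 1: request only the reasoning steps.
    reasoning = model(
        "List the reasoning steps needed to answer, without answering yet.\n"
        f"Question: {question}"
    ).strip()
    if not reasoning:
        raise ValueError("model returned no reasoning")

    # Call 2: request the final answer, with the reasoning included in the prompt.
    answer = model(
        f"Question: {question}\n"
        f"Reasoning:\n{reasoning}\n"
        "Using only the reasoning above, give the final answer."
    ).strip()

    return {"question": question, "reasoning": reasoning, "answer": answer}
```

Because this approach makes two calls per question, some added response time is to be expected, which fits the latency concerns raised in the HN discussion.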

In summary, this CLI represents a practical advancement in AI ethics, enabling developers to build more accountable systems and potentially setting a standard for future tools in the LLM space.
