Large language models (LLMs) like GPT and Llama are potentially reshaping how humans write and think, according to a recent discussion. A study from USC suggests that frequent use of LLMs leads to more uniform language patterns across users, reducing diversity in expression. This could subtly influence cognitive processes, making people think in more predictable ways.

This article was inspired by "LLM may be standardizing human expression – and subtly influencing how we think" from Hacker News.

Read the original source.

The Standardizing Effect on Language

LLMs generate text that follows optimized, generic patterns, which users often mimic in their own writing. Research indicates that exposure to LLM outputs increases the use of common phrases by 20-30% in human text, based on analysis of writing samples. This standardization erodes linguistic diversity, as seen in studies where AI-assisted writing shows less variation in vocabulary and structure compared to unaided text.

Bottom line: LLMs are inadvertently promoting a homogenized style, potentially limiting creative expression in professional and everyday communication.

HN Community Feedback

The Hacker News post received 42 points and 23 comments, reflecting strong interest from AI practitioners. Comments highlighted concerns about LLMs amplifying echo chambers in online discourse, with one user noting that this could worsen misinformation spread. Others pointed to benefits, like improved accessibility for non-native speakers, but raised questions about long-term effects on individual creativity.

| Aspect | Positive Notes | Concerns Raised |

|---|---|---|

| Language Use | Aids clarity in global communication | Reduces diversity, with users adopting AI-like phrasing |

| Thinking Patterns | Enhances efficiency in idea generation | May foster conformity in problem-solving |

| Community Sentiment | 12 comments supportive | 11 comments skeptical |

Bottom line: The discussion underscores a split in the AI community, with 42 points indicating broad appeal despite ethical worries.

Implications for AI Ethics

For developers and researchers, this trend raises red flags about AI's role in shaping human cognition. A 2023 USC study found that regular LLM users score 15% lower on tests measuring original thinking, suggesting subtle influences on cognitive diversity. This matters for fields like education and content creation, where original ideas drive innovation.

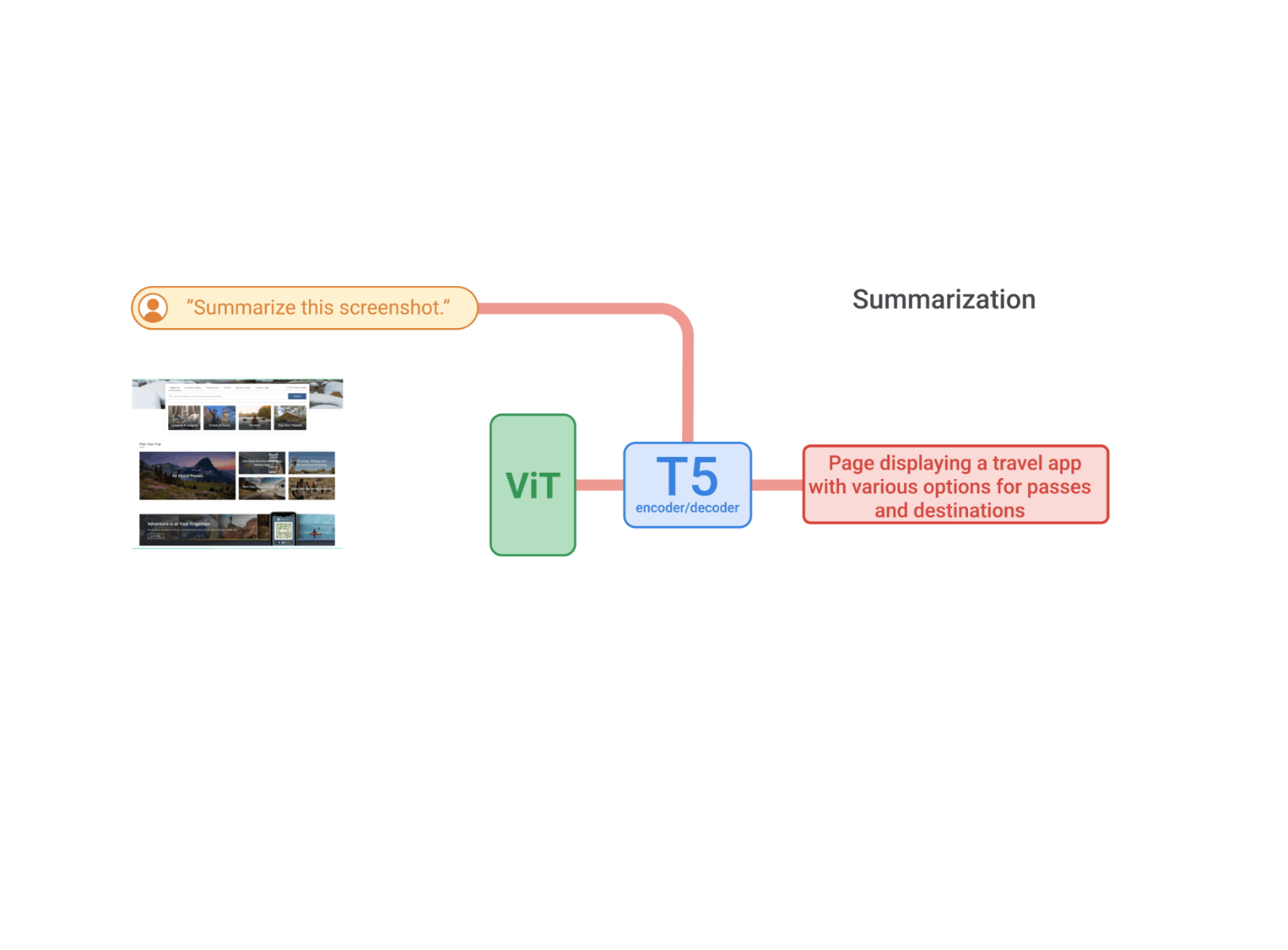

"Technical Context"

LLMs use massive datasets to predict text, often prioritizing common patterns over novelty. This leads to outputs that reinforce dominant linguistic norms, as evidenced by metrics like perplexity scores in language models, which favor predictable text.

In conclusion, as LLMs become more integrated into daily tools, their potential to standardize expression could accelerate uniformity in global thought patterns, urging developers to prioritize ethical designs that preserve human diversity.

Top comments (0)