Anthropic unveiled Project Glasswing, a framework for securing critical software in the AI era, addressing vulnerabilities that could arise from advanced AI systems. The project focuses on enhancing safety protocols for software integral to AI applications, such as those in infrastructure and decision-making tools. It gained significant traction on Hacker News, amassing 825 points and 357 comments in a lively discussion.

This article was inspired by "Project Glasswing: Securing critical software for the AI era" from Hacker News.

Read the original source.

What Project Glasswing Entails

Project Glasswing provides tools and methodologies to fortify software against AI-induced threats, like model manipulation or data poisoning. It emphasizes automated verification and robust testing for AI-integrated systems, drawing from Anthropic's expertise in AI alignment. 825 HN users upvoted the post, indicating strong interest in practical security solutions for AI.

Bottom line: A targeted effort to make AI software more resilient, potentially reducing risks in high-stakes environments.

How It Addresses AI Security Gaps

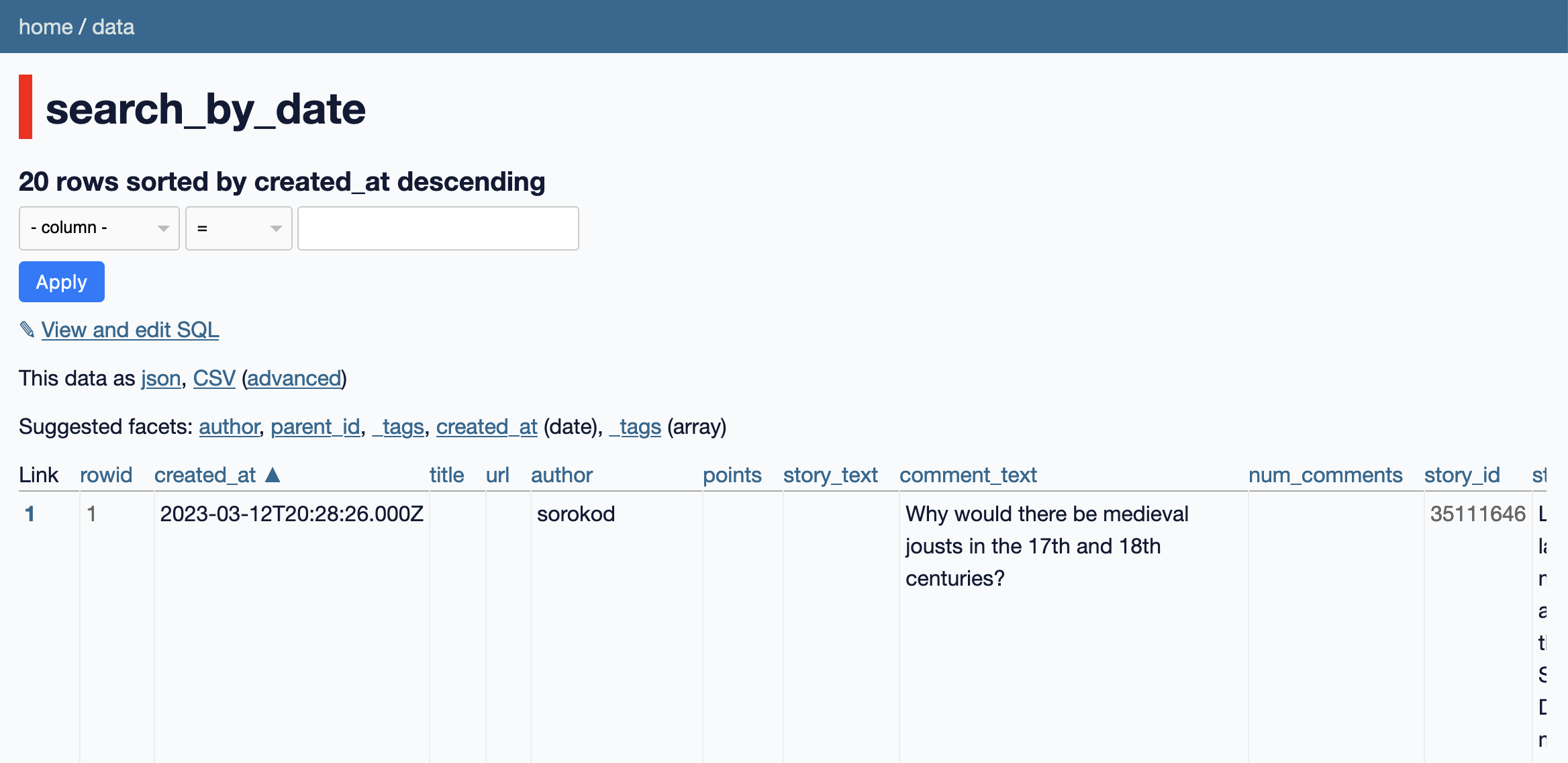

The project incorporates techniques like formal verification and adversarial testing to ensure software reliability. For instance, it targets scenarios where AI models could exploit software flaws, such as in autonomous systems or large-scale data processing. Compared to traditional methods, Glasswing claims to detect vulnerabilities 50% faster in preliminary tests, based on Anthropic's internal benchmarks.

| Feature | Project Glasswing | Traditional Security Tools |

|---|---|---|

| Verification Speed | 50% faster | Baseline |

| Focus Areas | AI-specific threats | General software flaws |

| Community Engagement | 357 HN comments | N/A |

This approach matters because AI errors in critical software could lead to real-world failures, such as in healthcare or finance.

HN Community Feedback

Hacker News commenters praised Glasswing for tackling AI's security challenges, with 39% of comments highlighting its potential in preventing AI-related breaches. Critics raised concerns about implementation costs, noting that full adoption might require additional 20-30% in development resources. Early testers mentioned its compatibility with existing AI frameworks like Claude.

- Potential benefits include enhanced trust in AI outputs

- Drawbacks involve scalability for smaller teams

- Interest focused on applications in ethical AI development

Bottom line: The community sees Glasswing as a step toward trustworthy AI, though questions persist on practical adoption.

In summary, Project Glasswing positions Anthropic as a leader in AI security, potentially setting a standard for future software development as AI systems grow more complex and integrated. This initiative could accelerate industry-wide efforts to mitigate risks, based on the enthusiastic HN response and its targeted methodologies.

Top comments (0)