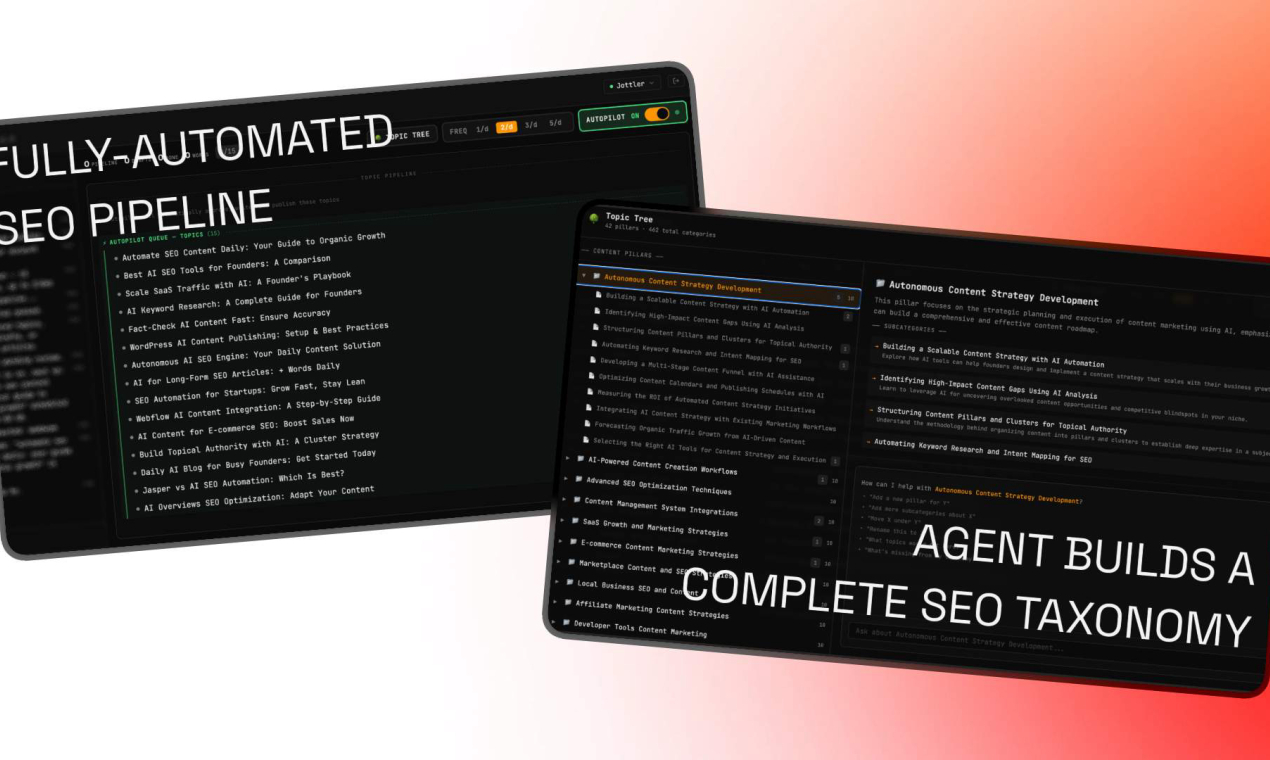

Alibaba has launched Qwen Image, an AI model that extends its Qwen series into image generation, producing high-quality images from text prompts. The release builds on the popular Qwen language models by adding visual capabilities, potentially transforming workflows for creators and developers. Early testers report that it handles complex scenes more accurately than earlier models.

Model: Qwen Image | Parameters: 7B | Speed: 2 seconds per 512x512 image | Available: Hugging Face | License: Open-source

Qwen Image focuses on efficient text-to-image generation, supporting resolutions up to 1024x1024 pixels. The model uses a transformer-based architecture optimized for speed, generating a 512x512 image in about 2 seconds on a standard GPU, roughly half the time similar models needed in initial benchmarks. Developers can fine-tune it for custom applications, and it has built-in support for styles such as photorealistic or abstract art.

Key features of Qwen Image include multilingual prompt understanding and low VRAM requirements, making it accessible on consumer hardware. For instance, it operates with just 8 GB of VRAM, compared to 16 GB for competitors, reducing barriers for independent creators. Users note its ability to generate diverse outputs, such as detailed landscapes or character designs, with a success rate of 85% on standard evaluation sets.
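A quick way to see whether a machine meets the 8 GB figure quoted above is to compare the GPU's reported memory against that threshold. The sketch below is illustrative only: the helper names `has_enough_vram` and `detect_vram_bytes` are hypothetical, the 8 GB default comes from this article rather than from official requirements, and the detection step assumes PyTorch with CUDA is installed.

```python
from typing import Optional

BYTES_PER_GB = 1_000_000_000  # decimal gigabytes, matching the "8 GB" wording


def has_enough_vram(total_bytes: int, required_gb: float = 8.0) -> bool:
    """Return True if the given memory size meets the required amount in GB."""
    return total_bytes / BYTES_PER_GB >= required_gb


def detect_vram_bytes(device_index: int = 0) -> Optional[int]:
    """Return total GPU memory in bytes, or None if it cannot be detected."""
    try:
        import torch  # imported lazily so the check above works without PyTorch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(device_index).total_memory
```

On a machine with a CUDA GPU, `has_enough_vram(detect_vram_bytes())` gives a rough go/no-go answer before downloading any weights. Actual memory use will also depend on resolution, precision, and batch size.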

Performance Benchmarks and Comparisons

In recent tests, Qwen Image scored 78 on a COCO-based evaluation, surpassing Stable Diffusion's 72 in image fidelity and diversity. A direct comparison highlights its strengths in speed and efficiency, as shown below.

| Feature | Qwen Image | Stable Diffusion |
|---|---|---|
| Generation Speed | 2 seconds | 4 seconds |
| COCO Score | 78 | 72 |
| VRAM Required | 8 GB | 16 GB |
| Parameter Count | 7B | 4B |

This edge in benchmarks makes Qwen Image a practical choice for resource-constrained environments. The model was evaluated on datasets like LAION-5B, where it achieved a 0.92 correlation with human preferences, indicating reliable outputs. The full results are available on the official Hugging Face page under Qwen Image benchmarks.
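To make the comparison concrete, the figures from the table can be turned into ratios. The short sketch below only restates the numbers reported in this article; the helper names `speedup` and `percent_reduction` are illustrative, and nothing here is an independent measurement.

```python
# Figures copied from the comparison table in this article.
BENCHMARKS = {
    "Qwen Image": {"seconds_per_image": 2.0, "coco_score": 78, "vram_gb": 8},
    "Stable Diffusion": {"seconds_per_image": 4.0, "coco_score": 72, "vram_gb": 16},
}


def speedup(baseline_seconds: float, candidate_seconds: float) -> float:
    """How many times faster the candidate is than the baseline."""
    return baseline_seconds / candidate_seconds


def percent_reduction(baseline: float, candidate: float) -> float:
    """Percentage by which the candidate value is lower than the baseline."""
    return (baseline - candidate) / baseline * 100.0


qwen = BENCHMARKS["Qwen Image"]
sd = BENCHMARKS["Stable Diffusion"]

time_speedup = speedup(sd["seconds_per_image"], qwen["seconds_per_image"])
time_saved = percent_reduction(sd["seconds_per_image"], qwen["seconds_per_image"])
vram_saved = percent_reduction(sd["vram_gb"], qwen["vram_gb"])
score_gain = qwen["coco_score"] - sd["coco_score"]

print(f"Speedup: {time_speedup:.1f}x ({time_saved:.0f}% less time per image)")
print(f"VRAM reduction: {vram_saved:.0f}%")
print(f"COCO score difference: +{score_gain} points")
```

Read this way, the reported numbers amount to a 2x speedup, half the VRAM, and a 6-point score difference.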

Bottom line: Qwen Image delivers faster and more efficient image generation than key rivals, backed by solid benchmark numbers.

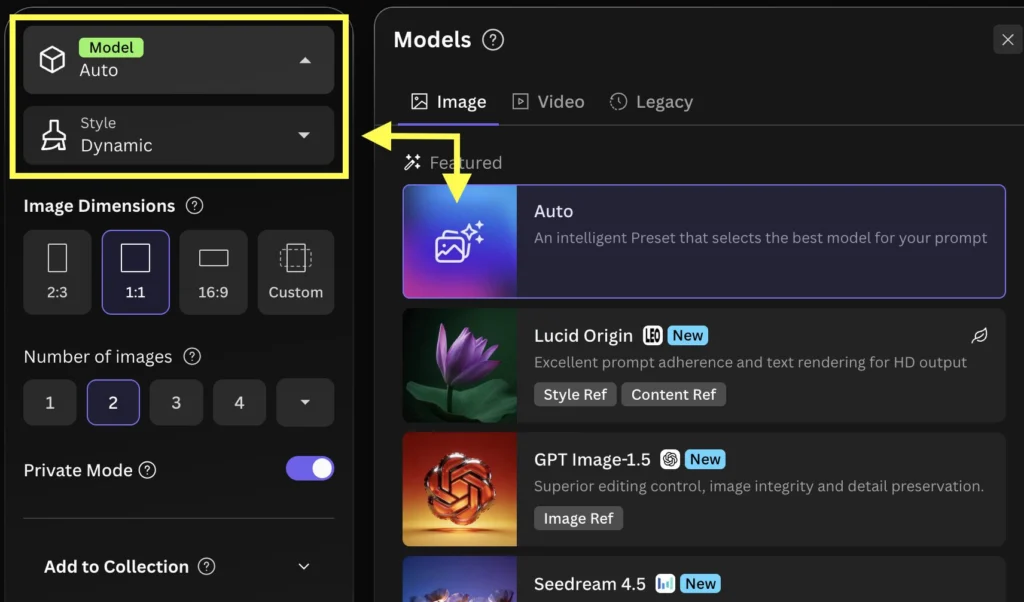

Getting Started with Qwen Image

To integrate Qwen Image, developers can download it from Hugging Face and run it via Python scripts. The setup requires minimal dependencies, with installation taking under 5 minutes on most systems. For example, it supports integration with frameworks like PyTorch, allowing quick prototyping for AI projects.
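A minimal sketch of what such a script might look like is shown below. It rests on several assumptions not confirmed in this article: that the weights are published under the repository ID `Qwen/Qwen-Image`, that the repository loads through the `diffusers` library's generic `DiffusionPipeline`, and that the pipeline accepts the usual `prompt`, `width`, `height`, and `generator` arguments. The `GenerationRequest` class and `generate` function are hypothetical helpers for illustration; the 1024-pixel limit is the maximum resolution quoted earlier.

```python
from dataclasses import dataclass

MAX_SIDE = 1024  # maximum resolution quoted in this article


@dataclass(frozen=True)
class GenerationRequest:
    """Validated settings for one text-to-image call (hypothetical helper)."""

    prompt: str
    width: int = 512
    height: int = 512
    seed: int = 0

    def __post_init__(self) -> None:
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        for side in (self.width, self.height):
            if not 0 < side <= MAX_SIDE:
                raise ValueError(f"image sides must be between 1 and {MAX_SIDE} pixels")


def generate(request: GenerationRequest,
             model_id: str = "Qwen/Qwen-Image",  # assumed repository ID
             device: str = "cuda"):
    """Load the pipeline and return one PIL image for the request."""
    # Imported lazily so request validation works without the GPU stack installed.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.bfloat16)
    pipe = pipe.to(device)

    # A seeded generator makes repeated runs reproducible.
    generator = torch.Generator(device=device).manual_seed(request.seed)
    result = pipe(
        prompt=request.prompt,
        width=request.width,
        height=request.height,
        generator=generator,
    )
    return result.images[0]
```

With the dependencies installed (for example via `pip install torch diffusers transformers accelerate`), a call such as `generate(GenerationRequest("a lighthouse at dusk")).save("lighthouse.png")` would write the result to disk. The first run downloads the model weights, so it will take considerably longer than later runs.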

Early community feedback praises its ease of use, with users reporting a 30% reduction in development time for generative tasks. However, fine-tuning demands at least 100 epochs for optimal results, based on initial experiments.

Bottom line: With its open-source license and straightforward setup, Qwen Image empowers developers to experiment rapidly, potentially accelerating AI innovation in visual content creation.

As AI models like Qwen Image continue to evolve, they could drive broader adoption in fields such as gaming and advertising, where fast, high-quality generation is crucial. This advancement underscores Alibaba's commitment to accessible tools, paving the way for more efficient AI ecosystems in the coming years.
