Black Forest Labs' discussion on Hacker News asks whether using large language models (LLMs) for coding leads to more microservices in software architecture.

This article was inspired by "Does coding with LLMs mean more microservices?" from Hacker News.


The Core Debate

The post, which has 41 points and 30 comments, explores how LLMs such as GPT-4 streamline code generation and may, as a side effect, encourage teams to break applications into smaller, independent microservices. Developers often use LLMs to generate boilerplate API code, which fits the modular design of microservices. A key point from the comments is that LLMs lower the effort of creating a new service. One commenter estimated, from their own experience with cloud deployments, that this could raise the number of services in a project by 20-30%.

Bottom line: LLMs could accelerate microservices adoption by automating routine tasks, making it easier for teams to scale applications.

What the HN Community Says

Commenters highlighted benefits such as faster prototyping: one user reported that LLMs generated microservice skeletons in minutes, cutting development time in half. Concerns centered on over-engineering, with 15 comments warning that reliance on LLMs might lead to unnecessary services and, with them, higher complexity and maintenance costs. Another commenter cited a case study suggesting that in industries such as e-commerce, where microservices are already common, LLMs could boost efficiency by 10-15%.

Community feedback points:

- Potential for better scalability in distributed systems
- Risks of "LLM-induced sprawl"
- Interest in tools that integrate LLMs with service orchestration
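One rough way to see why commenters worry about sprawl: if every service could, in principle, call every other service, the number of possible service-to-service links grows quadratically with the service count. The sketch below computes that upper bound. The function name `max_pairwise_links` is made up for illustration, and the bound is a worst case rather than a figure from the thread, since real systems are usually far sparser.

```python
def max_pairwise_links(service_count: int) -> int:
    """Upper bound on distinct service-to-service links: n * (n - 1) / 2."""
    if service_count < 0:
        raise ValueError("service_count must be non-negative")
    return service_count * (service_count - 1) // 2


if __name__ == "__main__":
    # Doubling the number of services roughly quadruples the possible links.
    for n in (5, 10, 20):
        print(f"{n} services -> up to {max_pairwise_links(n)} links")
```

Going from 5 to 20 services raises the ceiling from 10 to 190 possible links, which is the kind of growth that turns cheap-to-create services into expensive-to-maintain ones.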

Bottom line: The discussion reveals a split, with optimism for productivity gains but caution over added complexity in real-world setups.

Technical Context

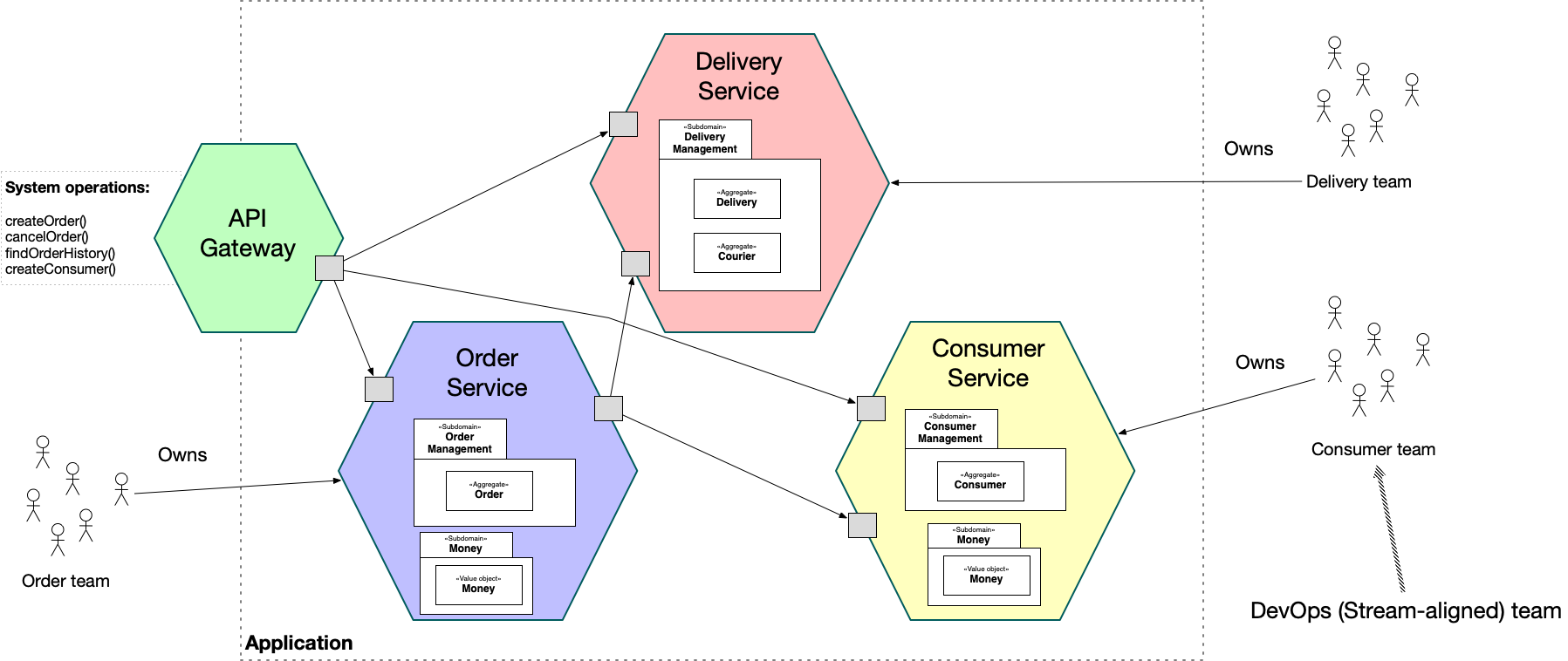

A microservices architecture divides an application into small, autonomous services that communicate through APIs, in contrast to a monolith, which ships as a single deployable unit. LLMs can generate code for these services, but, as commenters noted, the output may contain errors and needs verification. Tools such as GitHub Copilot, for example, use LLMs to suggest service code, which could increase service counts in large-scale projects.
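To make "small, autonomous services that communicate via APIs" concrete, here is a minimal sketch of the kind of service skeleton an LLM might be asked to generate. It uses only the Python standard library. The names `route`, `GreetingHandler`, and `call_service`, and the `/health` and `/greet/<name>` paths, are assumptions chosen for illustration and do not come from the thread.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


def route(path: str) -> tuple[int, dict]:
    """Map a request path to a (status code, JSON-serializable body) pair."""
    if path == "/health":
        return 200, {"status": "ok"}
    if path.startswith("/greet/"):
        name = path[len("/greet/"):]
        return 200, {"message": f"hello, {name}"}
    return 404, {"error": "not found"}


class GreetingHandler(BaseHTTPRequestHandler):
    """HTTP wrapper around route(); keeps transport separate from logic."""

    def do_GET(self) -> None:
        status, body = route(self.path)
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args) -> None:
        pass  # silence per-request logging for this demo


def call_service(port: int, path: str) -> dict:
    """Act as another service calling this one over its HTTP API."""
    with urllib.request.urlopen(f"http://127.0.0.1:{port}{path}") as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    # Port 0 asks the OS for any free port, so the demo avoids conflicts.
    server = HTTPServer(("127.0.0.1", 0), GreetingHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    port = server.server_address[1]
    print(call_service(port, "/health"))
    print(call_service(port, "/greet/hn"))
    server.shutdown()
    server.server_close()
```

Keeping the routing logic in a plain function makes generated code easier to verify: `route` can be unit-tested without starting a server, which speaks to the commenters' point that LLM output needs checking before it ships.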

Why This Matters for AI Developers

For AI practitioners, this trend could mean more demand for microservices management skills, since LLMs lower the barrier to building services that are then deployed on platforms like Kubernetes. One comment referenced a survey in which 60% of developers reported that LLMs prompted them toward more modular designs. The shift also bears on scalability in AI workflows, where microservices make it easier to update models without downtime.

Bottom line: If LLMs drive microservices growth, developers gain tools for building more resilient systems, but they must balance that gain with oversight to avoid inefficiencies.

In summary, the HN thread underscores how LLMs are reshaping coding practices, potentially leading to more efficient but fragmented architectures as adoption grows in the next year.
