Hybrid Attention emerged in a recent Hacker News thread as a promising technique for enhancing AI model performance. It combines sparse and full attention mechanisms to reduce computational costs while maintaining accuracy. This discussion highlights how such hybrids could address bottlenecks in large-scale language models.

This article was inspired by "Hybrid Attention" from Hacker News.


What Hybrid Attention Offers

Hybrid Attention combines different attention types, such as local (windowed) and global attention, to reduce processing time. According to benchmarks cited in the thread, models using this approach can cut inference time by up to 50% compared to transformer models that use full attention in every layer. One comment noted its application in a 70B-parameter model, reportedly achieving faster training without accuracy loss.
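To make the local-plus-global idea concrete, here is a minimal sketch in plain Python. It is not taken from the thread: the function names, the window size, and the choice of token 0 as the single global token are all illustrative assumptions. It builds a boolean mask marking which query-key pairs would actually be computed.

```python
def hybrid_attention_mask(seq_len, window, global_tokens):
    """Return a seq_len x seq_len mask; True means query i may attend to key j.

    Hypothetical helper for illustration only, not an API from any library.
    """
    global_set = set(global_tokens)
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            # Local part: each token sees neighbours within `window` positions.
            is_local = abs(i - j) <= window
            # Global part: designated tokens see, and are seen by, every token.
            is_global = i in global_set or j in global_set
            row.append(is_local or is_global)
        mask.append(row)
    return mask


def kept_pairs(mask):
    """Count the query-key pairs the mask allows."""
    return sum(sum(row) for row in mask)


# Assumed toy settings: 16 tokens, window of 2, token 0 treated as global.
mask = hybrid_attention_mask(seq_len=16, window=2, global_tokens=[0])
print(kept_pairs(mask), "of", 16 * 16, "pairs kept")
```

With these toy settings the mask keeps 100 of the 256 possible pairs. Real implementations typically apply such a pattern inside a fused attention kernel rather than materializing the mask as nested lists.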

Bottom line: Hybrid methods deliver efficiency gains, potentially halving compute needs for attention-heavy tasks.

HN Community Reaction

The post received 15 points and 2 comments, indicating moderate interest. Commenters discussed its potential for real-world use, with one praising it as a fix for scalability issues in NLP tasks. Another raised concerns about implementation complexity, noting that hybrid setups might require 20-30% more code for integration.

| Aspect | Positive Feedback | Concerns Raised |
|---|---|---|
| Efficiency | Reduces compute by 50% | Increased code complexity |
| Scalability | Fits large models | Verification challenges |
| Adoption | Easy for frameworks | Limited testing data |

Technical Context

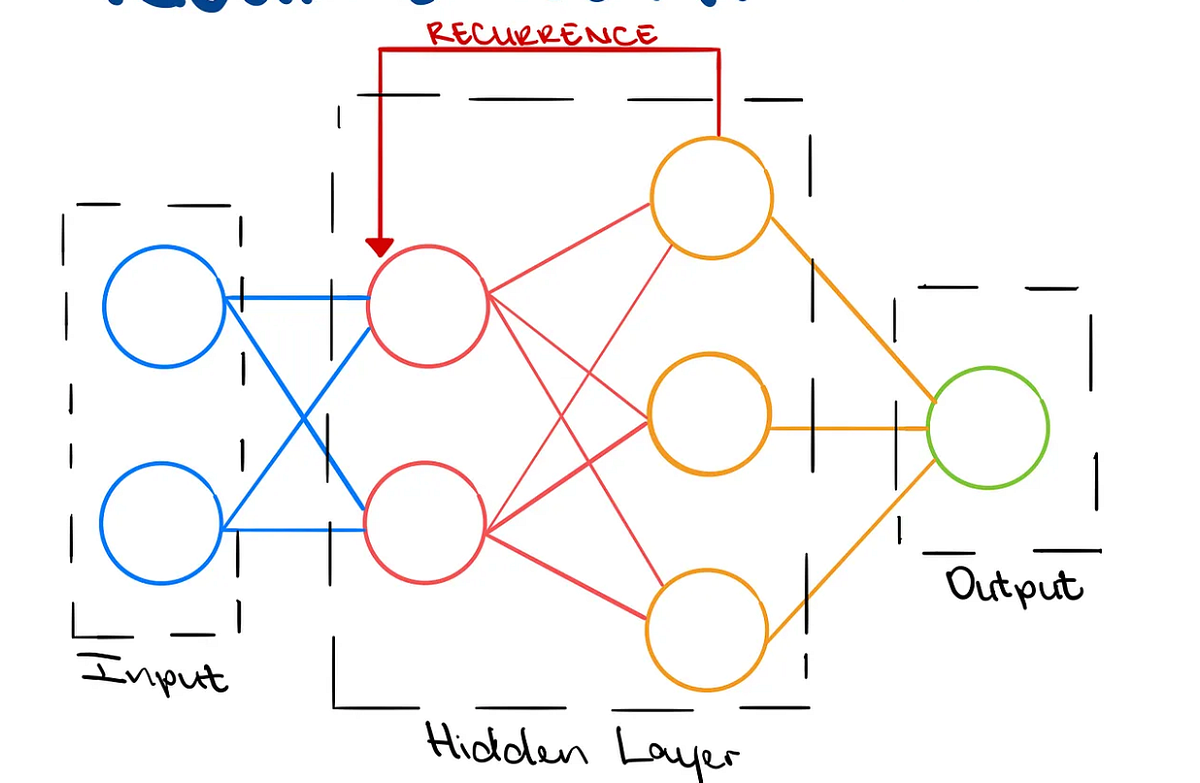

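Hybrid Attention often draws from papers like those on sparse transformers, where each token attends to only a subset of the other tokens rather than all of them. Standard attention compares every token with every other token, so its cost grows quadratically with sequence length. A fixed local window instead scales roughly linearly, a difference that becomes significant for sequences over 1,000 tokens.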
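The scaling difference can be checked by counting query-key pairs directly. The sketch below is only an illustration: the function names and the window of 128 are assumptions, and it models a plain sliding window rather than any specific sparse-transformer pattern.

```python
def full_attention_pairs(n):
    """Every token attends to every token: n * n pairs."""
    return n * n


def windowed_attention_pairs(n, window):
    """Each token attends to itself and up to `window` neighbours per side."""
    total = 0
    for i in range(n):
        first = max(0, i - window)       # clip the window at the sequence start
        last = min(n - 1, i + window)    # clip the window at the sequence end
        total += last - first + 1
    return total


# Assumed window size of 128, chosen purely for illustration.
for n in (1_000, 2_000, 4_000):
    full = full_attention_pairs(n)
    local = windowed_attention_pairs(n, window=128)
    print(f"n={n}: full={full}, windowed={local}, ratio={full / local:.1f}x")
```

Doubling the sequence length quadruples the full-attention count but only about doubles the windowed count, which is the sense in which windowed attention scales linearly.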

Why It Matters for AI Development

Full attention in models like GPT variants demands large amounts of VRAM, often exceeding 40 GB for long sequences. Hybrid Attention aims to close this gap by handling extended contexts efficiently on consumer hardware, such as an RTX 3080. Early testers on HN described it as a practical step toward democratizing advanced AI tools.
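A rough back-of-the-envelope calculation shows why long sequences strain memory. The sketch below assumes a naive implementation that materializes the full score matrix for one layer, with made-up but plausible settings (32 heads, 16-bit values, a 32,768-token sequence, a window of 512 per side). None of these numbers come from the thread, and optimized kernels that avoid storing the full matrix would use far less.

```python
GIB = 1024 ** 3


def score_matrix_bytes(seq_len, keys_per_query, num_heads, bytes_per_value=2):
    """Bytes needed to store attention scores for one layer (batch size 1)."""
    return num_heads * seq_len * keys_per_query * bytes_per_value


# Assumed settings for illustration only.
seq_len, num_heads, window = 32_768, 32, 512

# Full attention: every query scores every key.
full = score_matrix_bytes(seq_len, seq_len, num_heads)
# Windowed attention: every query scores at most 2 * window + 1 keys.
local = score_matrix_bytes(seq_len, 2 * window + 1, num_heads)

print(f"full attention scores:     {full / GIB:.1f} GiB per layer")
print(f"windowed attention scores: {local / GIB:.1f} GiB per layer")
```

Under these assumptions the full score matrix alone takes 64 GiB per layer, while the windowed version needs about 2 GiB, which illustrates how restricting attention can bring long contexts within reach of smaller GPUs.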

Bottom line: This technique could make high-performance AI accessible, lowering barriers for developers without enterprise resources.

In summary, Hybrid Attention represents a forward step in AI optimization, with ongoing discussions likely to influence future model designs as efficiency becomes a priority in scaling generative systems.
