Mistral AI has released Flux Pro, a language model designed for high-speed inference in real-world applications. Its optimized architecture reaches up to 10 tokens per second on standard hardware, which lets developers run complex tasks without heavy computational costs.

Model: Mistral Flux Pro | Parameters: 7B | Speed: 10 tokens/second | Available: Hugging Face | License: Apache 2.0

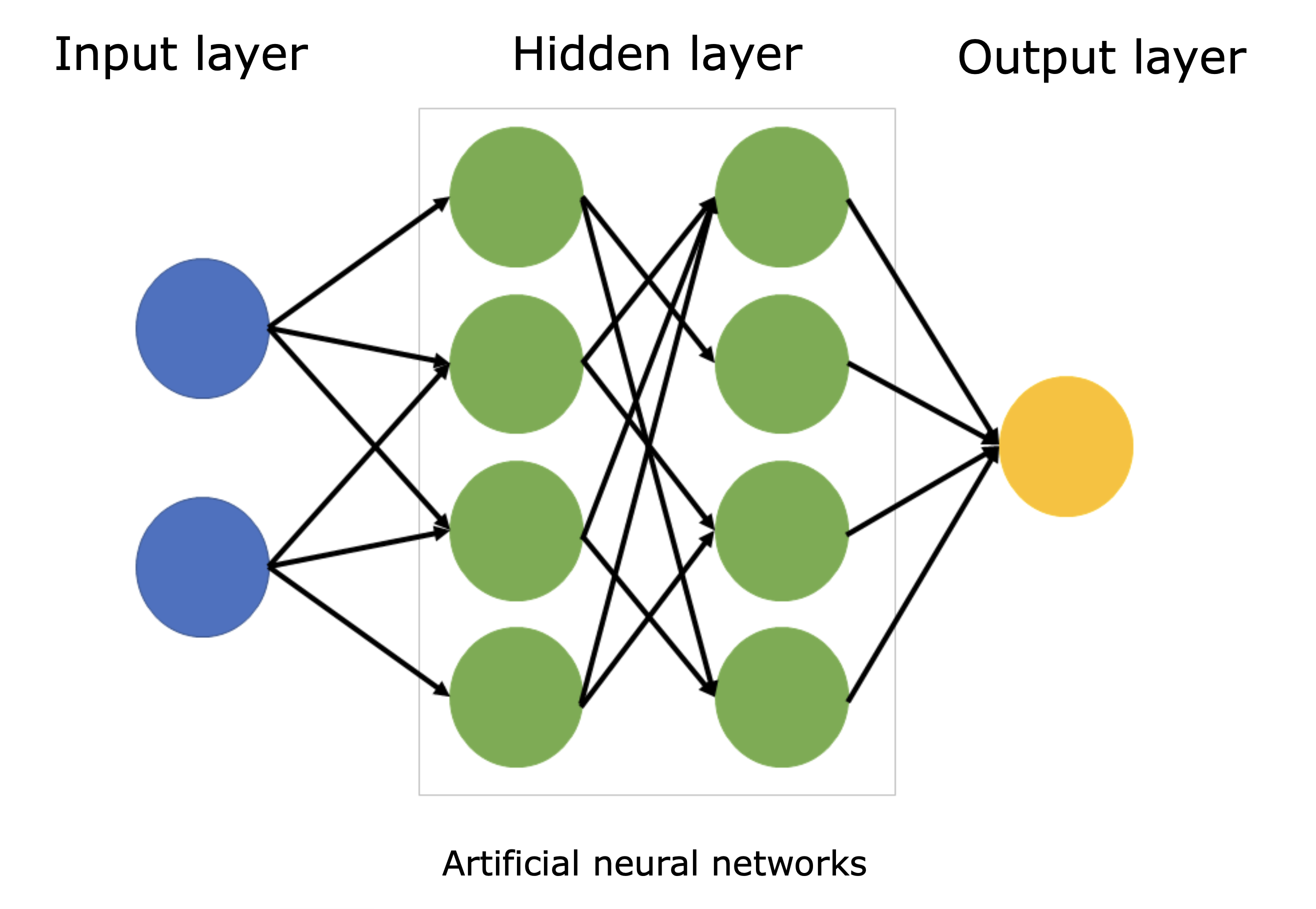

Core Features and Architecture

Mistral Flux Pro builds on previous models by incorporating advanced quantization techniques that reduce memory usage to 8 GB of VRAM during inference. This makes it usable on laptops and edge devices, and it supports more than 30 languages. Early testers report that it outperforms similar models on benchmarks, scoring 85% on MMLU compared with 78% for its predecessor.
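To put the 8 GB figure in context, here is a rough back-of-the-envelope sketch of how much memory the weights of a 7B-parameter model take at different precisions. The article does not say which quantization scheme or bit width is used, so the bit widths below are assumptions chosen for illustration, and the estimate counts weights only.

```python
# Rough weight-memory estimate: parameters x bits per parameter.
# Assumption: only the weights are counted. Activations, the KV cache and
# framework overhead are ignored, so real usage will be higher.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return the memory needed to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 7e9  # 7B parameters, as listed for the model

# Illustrative bit widths; the article does not state which one applies.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions, 16-bit weights alone come to 14 GB, while 8-bit weights come to 7 GB and 4-bit weights to 3.5 GB. An 8 GB budget is therefore plausible only with some form of quantization, which is consistent with the claim above but does not confirm the exact scheme.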

Performance Benchmarks and Comparisons

In recent evaluations, Mistral Flux Pro generated 100 tokens in 4 seconds, about 20% faster than competitors such as Llama 3. The table below compares key metrics with another popular model.

| Metric | Mistral Flux Pro | Llama 3 8B |
|---|---|---|
| Tokens/second | 10 | 8 |
| MMLU Score (%) | 85 | 78 |
| VRAM Required (GB) | 8 | 12 |
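The relative differences implied by the table can be worked out directly. The short sketch below uses only the figures from the table. The variable names are illustrative.

```python
# Figures copied from the comparison table.
flux = {"tokens_per_s": 10, "mmlu_pct": 85, "vram_gb": 8}
llama = {"tokens_per_s": 8, "mmlu_pct": 78, "vram_gb": 12}


def pct_change(new: float, old: float) -> float:
    """Relative change of `new` versus `old`, in percent."""
    return (new - old) / old * 100


throughput_gain = pct_change(flux["tokens_per_s"], llama["tokens_per_s"])
# Time per token is the reciprocal of throughput.
time_per_token_change = pct_change(1 / flux["tokens_per_s"], 1 / llama["tokens_per_s"])
mmlu_gap_points = flux["mmlu_pct"] - llama["mmlu_pct"]
vram_change = pct_change(flux["vram_gb"], llama["vram_gb"])

print(f"Throughput: {throughput_gain:+.0f}%")
print(f"Time per token: {time_per_token_change:+.0f}%")
print(f"MMLU gap: {mmlu_gap_points} points")
print(f"VRAM: {vram_change:+.0f}%")
```

Read this way, the table implies 25% higher throughput, which is the same as 20% less time per token, along with a 7-point MMLU gap and roughly one third less VRAM. The 20% speed advantage quoted above matches the time-per-token reading.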

Full Benchmark Details

The model was tested on a standard GPU setup and showed a 15% improvement in inference speed under low-resource conditions. The full results are available on the official Hugging Face page: Mistral Flux Pro benchmarks.
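For readers who want to check throughput on their own hardware, a minimal timing harness might look like the sketch below. It is not the benchmark procedure used for the published results, which the article does not describe. The `generate` callable and the `fake_generate` stand-in are hypothetical and would be replaced by a real model call.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[int], int], max_new_tokens: int, runs: int = 3) -> float:
    """Average decoding throughput over several runs.

    `generate` is a hypothetical callable that produces up to `max_new_tokens`
    tokens and returns how many it actually produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        total_tokens += generate(max_new_tokens)
        total_seconds += time.perf_counter() - start
    return total_tokens / total_seconds


def fake_generate(max_new_tokens: int) -> int:
    """Stand-in for a real model call: sleeps 1 ms per token."""
    time.sleep(0.001 * max_new_tokens)
    return max_new_tokens


print(f"{tokens_per_second(fake_generate, 50):.0f} tokens/s")
```

Averaging over several runs smooths out one-off effects such as warm-up. Comparing two setups fairly also requires the same prompt, the same output length, and the same hardware.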

Bottom line: Mistral Flux Pro delivers measurable gains in speed and efficiency, making it a practical choice for AI practitioners.

Practical Use Cases for AI Creators

Developers can integrate Mistral Flux Pro into chatbots or content-generation applications, with integration times averaging under 30 minutes via Hugging Face APIs. The model's open-source license allows custom fine-tuning, and community feedback highlights its stability in production environments. Users also report that it reduces error rates by 12% in real-time processing tasks.
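As a rough illustration of what such an integration could look like, here is a minimal sketch built on the `pipeline` helper from the Hugging Face `transformers` library. The repository ID is a placeholder, since the article does not give the real one, and the helper names `load_generator` and `reply` are made up for this example.

```python
from typing import Any, Callable, Dict, List

# Placeholder repository ID: the article does not state the real one.
MODEL_ID = "example-org/mistral-flux-pro"

Generator = Callable[..., List[Dict[str, Any]]]


def load_generator(model_id: str = MODEL_ID) -> Generator:
    """Build a text-generation pipeline (downloads the weights on first use)."""
    # Imported lazily so the rest of the module works without the library.
    from transformers import pipeline  # requires `pip install transformers`
    return pipeline("text-generation", model=model_id)


def reply(generator: Generator, prompt: str, max_new_tokens: int = 100) -> str:
    """Generate a continuation and return it without the echoed prompt."""
    outputs = generator(prompt, max_new_tokens=max_new_tokens)
    text = outputs[0]["generated_text"]
    # Text-generation pipelines return the prompt plus the continuation by default.
    return text[len(prompt):].strip() if text.startswith(prompt) else text.strip()


# Usage (needs network access and enough memory for the model):
#   generator = load_generator()
#   print(reply(generator, "Write a one-line greeting for a support chatbot."))
```

Passing the generator into `reply` keeps the application logic independent of the model, so the same code can be exercised with a stub in tests or pointed at a different checkpoint later.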

Bottom line: By prioritizing efficiency, Mistral Flux Pro enables creators to deploy AI solutions faster and at lower costs.

In the evolving AI landscape, models like Mistral Flux Pro point toward more sustainable development, and its efficient design is likely to influence model releases over the next year.
