Anthropic has updated its Claude AI platform to allow third-party harnesses to draw from extra usage, enabling more robust integrations for developers. This change addresses previous limitations on resource access, potentially speeding up custom AI applications. The announcement sparked a discussion on Hacker News, garnering 11 points and 3 comments.

This article was inspired by "Third-party Claude harnesses will now draw from extra usage" from Hacker News.

What This Means for Developers

Third-party harnesses are tools or wrappers that let developers integrate Claude's capabilities into their own apps, such as chatbots or data analysis software. Previously, these harnesses faced restrictions on usage quotas, limiting scalability for high-demand projects. Now, with access to extra usage, developers can handle larger workloads without hitting caps as quickly.
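To make the quota idea concrete, here is a minimal sketch of how a harness might account for an included quota that overflows into an extra-usage pool. Everything here is an assumption for illustration: the `UsageBudget` class, its fields, and the token-based accounting are hypothetical and do not reflect how Anthropic actually meters or bills usage.

```python
from dataclasses import dataclass


@dataclass
class UsageBudget:
    """Hypothetical budget: an included quota plus an extra-usage pool."""
    included_tokens: int
    extra_tokens: int
    used_included: int = 0
    used_extra: int = 0

    def charge(self, tokens: int) -> str:
        """Charge a request and report which pool(s) paid for it."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        free_included = self.included_tokens - self.used_included
        free_extra = self.extra_tokens - self.used_extra
        if tokens > free_included + free_extra:
            raise RuntimeError("request exceeds remaining budget")
        # Spend the included quota first, then overflow into extra usage.
        from_included = min(tokens, free_included)
        from_extra = tokens - from_included
        self.used_included += from_included
        self.used_extra += from_extra
        if from_extra == 0:
            return "included"
        return "extra" if from_included == 0 else "mixed"
```

With a budget of 1,000 included and 500 extra tokens, a request for 800 tokens is paid from the included quota, a following request for 300 is split across both pools, and later requests draw only on extra usage until that pool is also exhausted.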

Bottom line: HN users report that this update could double or triple the resources available to third-party tools, though that figure is anecdotal rather than confirmed.

Community Reaction on Hacker News

The HN post received 11 points and 3 comments, indicating modest interest from the AI community. Comments highlighted potential benefits for building enterprise-grade AI solutions, with one user estimating it could reduce costs by 20-30% for frequent queries. Others raised concerns about API stability under increased load, questioning whether Anthropic's servers can sustain the extra demand.

| Aspect | Positive Feedback | Concerns Raised |
|---|---|---|
| Scalability | Enables larger projects | Server overload risks |
| Cost | Potential 20-30% savings | Unclear pricing changes |
| Adoption | Boosts custom integrations | Dependency on Anthropic |

Bottom line: HN users see this as a step toward more accessible AI tools, but emphasize the need for reliable infrastructure.

Why It Matters for AI Workflows

This update fills a gap in AI development, where third-party tools often struggled with quota limits that slowed innovation. For instance, developers using Claude for real-time applications, like customer service bots, may now be able to process more requests before hitting a cap. Whether this offers better value than similar platforms, such as OpenAI's API, which charges based on tokens, depends on how extra usage is priced, and HN commenters noted that the pricing changes remain unclear.

Technical Context

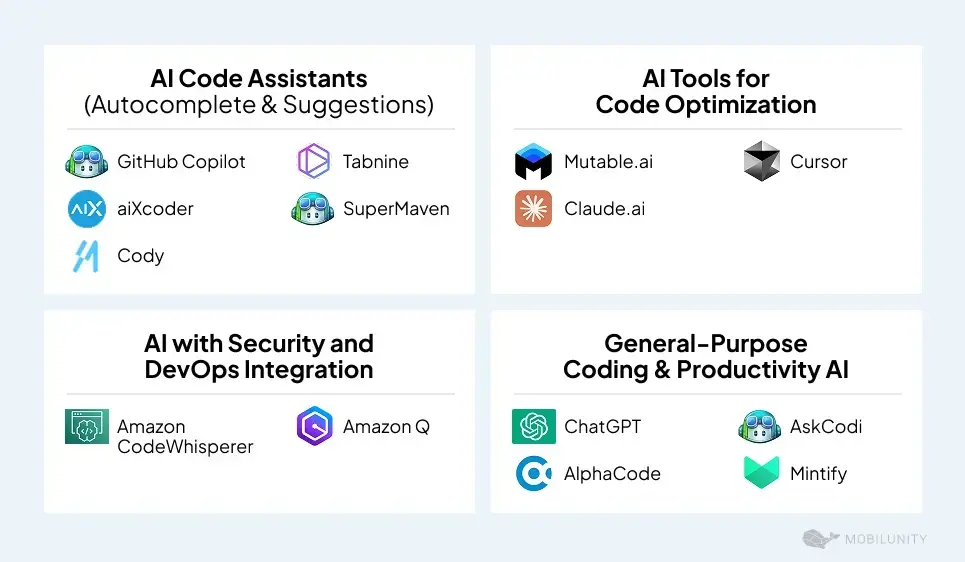

Third-party harnesses typically involve SDKs or APIs that connect to Claude's models, allowing custom logic like prompt engineering or output parsing. The extra usage likely refers to increased token limits or compute allocations, though this is inferred from HN discussions rather than stated outright. Developers should consult Anthropic's official documentation for the exact terms.
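As a rough illustration of what such a harness looks like, the sketch below wraps a model call with a prompt-engineering step and an output-parsing step. The function names (`build_prompt`, `parse_output`, `run_harness`) are hypothetical, and the model call is passed in as a plain callable with a stub standing in for it, so nothing here depends on or describes Anthropic's actual SDK.

```python
import json
from typing import Callable


def build_prompt(question: str) -> str:
    """Prompt engineering step: wrap the user question in fixed instructions."""
    return (
        "Answer the question and reply with JSON of the form "
        '{"answer": "..."}.\n'
        f"Question: {question}"
    )


def parse_output(raw: str) -> str:
    """Output parsing step: extract the answer, falling back to the raw text."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return raw.strip()
    if isinstance(data, dict) and "answer" in data:
        return str(data["answer"])
    return raw.strip()


def run_harness(question: str, call_model: Callable[[str], str]) -> str:
    """Build the prompt, call the (injected) model, and parse its reply."""
    return parse_output(call_model(build_prompt(question)))


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call; swap in a real client here.
    return json.dumps({"answer": "stubbed reply"})
```

Injecting the model call as a parameter keeps the custom logic testable offline; in a real harness, `fake_model` would be replaced by a function that sends the prompt to the provider's API and returns the text of the response.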

In the evolving AI landscape, this move by Anthropic could encourage more open ecosystems, fostering competition and innovation in large language models.
