Nano Banana Transformer Unveils Lightweight Photo Magic

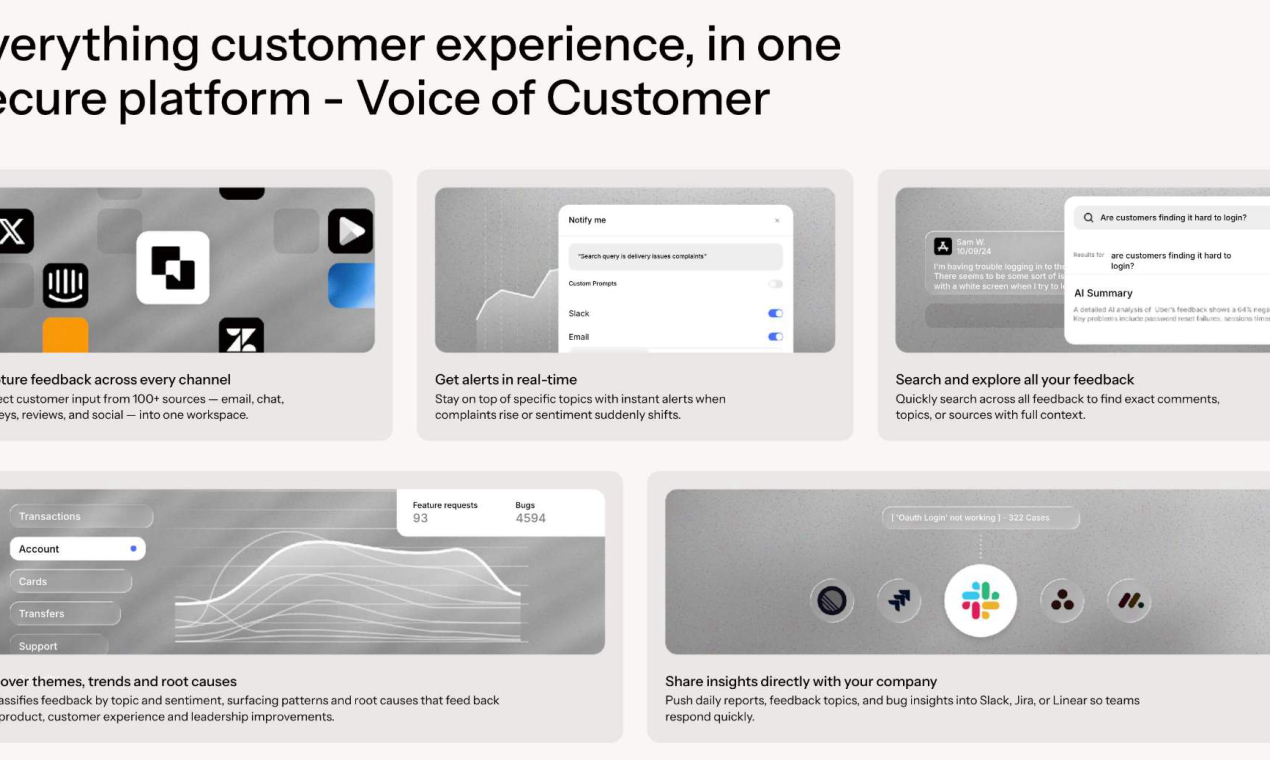

A new player has entered the generative AI space with a focus on efficiency and accessibility. The Nano Banana Transformer, a compact model tailored for photo generation, promises to deliver high-quality outputs without the hefty resource demands of larger systems. Designed for developers and creators, this model targets those seeking fast, lightweight solutions for image synthesis.

Model: Nano Banana Transformer | Parameters: 1.2B | Speed: 3.5s per image

Price: $0.05 per 100 generations | Available: Cloud API | License: Commercial

Efficiency Without Compromise

The Nano Banana Transformer stands out with its modest 1.2 billion parameters, a fraction of the size of many competing models that often exceed 10 billion. Despite its smaller footprint, it achieves an impressive generation speed of 3.5 seconds per image on standard hardware. Early testers report that it maintains competitive quality in photorealistic outputs, particularly for portraits and landscapes.

This efficiency translates to lower costs, with pricing set at just $0.05 per 100 generations via its cloud API. For developers working on tight budgets or scaling projects, this could be a significant advantage over pricier alternatives.
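
To make the pricing concrete, here is a minimal sketch that scales the quoted figures ($0.05 per 100 generations, 3.5 seconds per image) to a batch. It assumes strictly linear pricing with no minimum charge or rounding to billing increments, and images generated one after another; the article confirms neither, and the function name `estimate_batch` is purely illustrative.

```python
from decimal import Decimal

# Figures quoted in the article.
PRICE_PER_100 = Decimal("0.05")      # USD per 100 generations
SECONDS_PER_IMAGE = Decimal("3.5")   # reported generation time


def estimate_batch(n_images: int) -> tuple[Decimal, Decimal]:
    """Return (cost in USD, sequential time in seconds) for n_images.

    Assumes linear pricing and one-at-a-time generation (both assumptions).
    """
    if n_images < 0:
        raise ValueError("n_images must be non-negative")
    cost = PRICE_PER_100 * n_images / 100
    seconds = SECONDS_PER_IMAGE * n_images
    return cost, seconds


if __name__ == "__main__":
    cost, seconds = estimate_batch(10_000)
    print(f"10,000 images: ${cost} and about {seconds / 3600:.1f} hours sequentially")
```

Under these assumptions, 10,000 images would cost $5 and take a little under ten hours if generated sequentially.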

Bottom line: A lean model that punches above its weight with speed and affordability.

Hardware Demands and Accessibility

Unlike heavyweight models requiring high-end GPUs with 16GB+ VRAM, the Nano Banana Transformer is optimized for lighter setups. It can run effectively on systems with as little as 4GB VRAM, making it accessible to a broader range of users, from hobbyists to small studios. That lower barrier to entry matches growing community demand for AI tools that work on modest hardware.

The model is currently available through a cloud-based API, ensuring ease of integration into existing workflows. Users note seamless performance across platforms, though some have requested an offline version for local deployment in future updates.

Benchmarking Against Peers

How does this compact model stack up? Below is a comparison with a typical mid-range photo generation model on key metrics.

| Feature | Nano Banana Transformer | Mid-Range Competitor |
|---|---|---|
| Parameters | 1.2B | 8.5B |
| Speed (per image) | 3.5s | 12s |
| Cost (per 100 images) | $0.05 | $0.20 |
| Minimum VRAM Required | 4GB | 12GB |
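
As a rough cross-check, the rows above can be reduced to ratios. The sketch below only restates the table's figures; the dictionary keys and the `ratios` helper are illustrative names, not part of any published tooling.

```python
# Values copied from the comparison table; key names are illustrative.
NANO = {"params_b": 1.2, "seconds": 3.5, "usd_per_100": 0.05, "min_vram_gb": 4}
MID_RANGE = {"params_b": 8.5, "seconds": 12.0, "usd_per_100": 0.20, "min_vram_gb": 12}


def ratios(baseline: dict, candidate: dict) -> dict:
    """Return baseline / candidate for every metric (higher favors the candidate)."""
    return {key: baseline[key] / candidate[key] for key in candidate}


if __name__ == "__main__":
    for metric, factor in ratios(MID_RANGE, NANO).items():
        print(f"{metric}: {factor:.1f}x")
```

On these numbers the compact model is about 7.1x smaller, 3.4x faster, 4x cheaper, and needs 3x less VRAM than the mid-range competitor.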

The table highlights the trade-off: larger models may still have the edge in fine detail, but the Nano Banana Transformer leads clearly on speed and cost-efficiency.

Technical Setup for API Integration

For developers eager to test the model, integration runs through the cloud API and is described as straightforward.
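
The article does not publish an endpoint, authentication scheme, or request schema, so the following is only a hedged sketch of what a call to a cloud image-generation API often looks like. The URL, the `prompt` field, the bearer-token header, and the function names are all hypothetical placeholders to be replaced with values from the official documentation.

```python
import json
import urllib.request

# Hypothetical endpoint: the article does not name a real URL.
API_URL = "https://api.example.com/v1/generate"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request with an assumed JSON schema."""
    payload = json.dumps({"prompt": prompt}).encode("utf-8")  # assumed field name
    return urllib.request.Request(
        API_URL,
        data=payload,
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate(prompt: str, api_key: str, timeout: float = 30.0) -> bytes:
    """Send the request and return the raw response body."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=timeout) as resp:
        return resp.read()
```

Keeping request construction separate from the network call makes the payload easy to inspect or unit-test before any credits are spent.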

Community Buzz and Potential Use Cases

Feedback from early adopters has been largely positive, with many praising the balance of quality and resource use. Users on developer forums highlight its potential for mobile app integration, where lightweight models are critical due to hardware constraints. Others see it fitting into real-time photo editing tools, given the 3.5-second generation time.

Some limitations have surfaced, though. A few testers mention that complex scenes with multiple subjects occasionally lack the sharpness of larger models. Still, for most standard use cases, the output remains highly usable.

Bottom line: Community excitement points to niche applications where efficiency is king.

Looking Ahead for Nano Banana

The Nano Banana Transformer signals a shift toward leaner AI tools that don’t sacrifice performance for accessibility. As the team behind it continues to refine the model, there’s potential for expanded capabilities, such as handling more intricate image types or offering local deployment options. For now, it’s a compelling choice for developers and creators watching both performance and budget.
