Black Forest Labs introduced Nyx, a multi-turn, adaptive, and offensive testing harness designed to probe AI agents for vulnerabilities in real-time scenarios.

This article was inspired by "Show HN: Nyx – multi-turn, adaptive, offensive testing harness for AI agents" from Hacker News.

Read the original source.Tool: Nyx | Type: Testing harness | Features: Multi-turn, adaptive, offensive

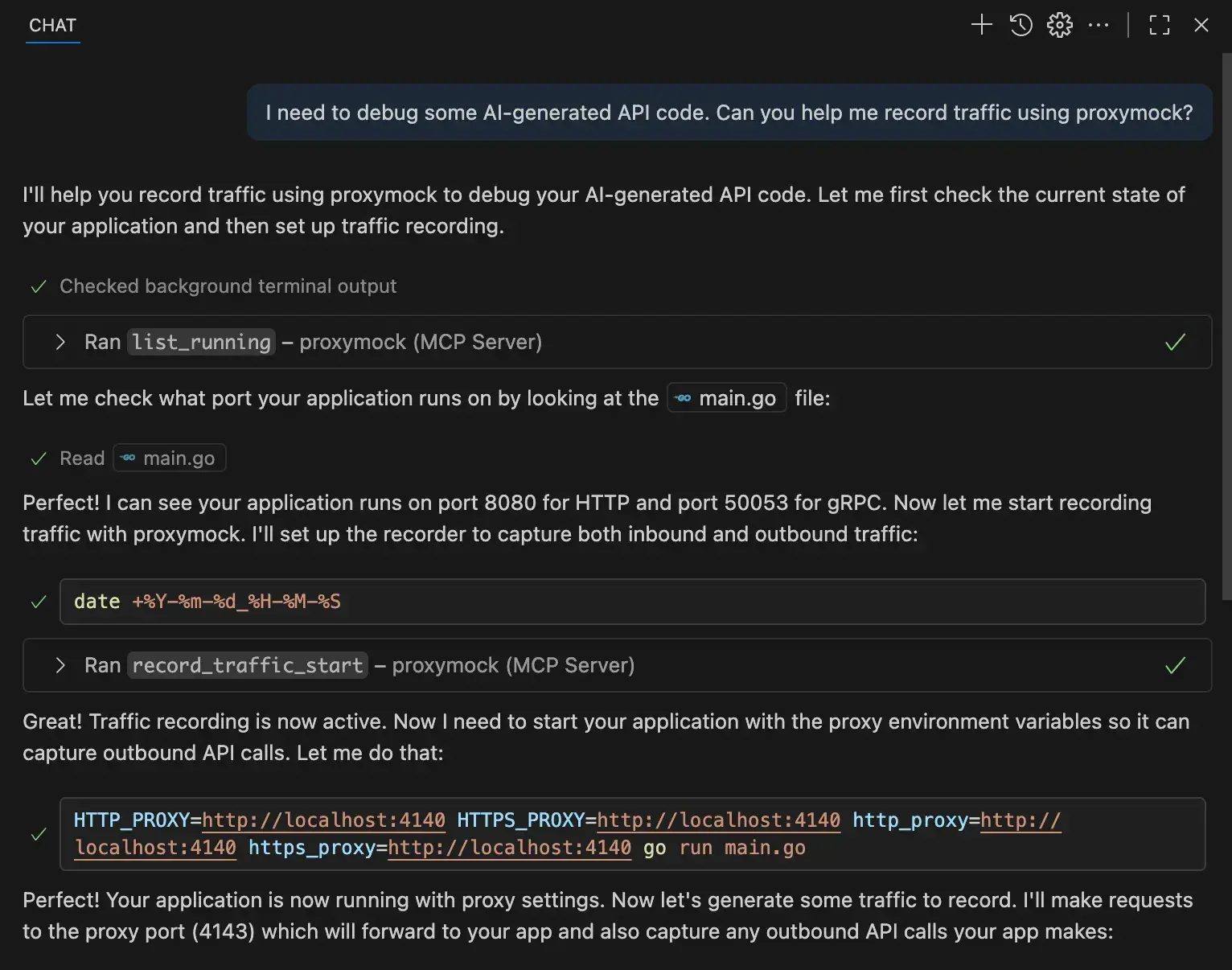

How Nyx Works

Nyx simulates multi-turn interactions to adaptively challenge AI agents, focusing on offensive tactics like adversarial attacks. The tool runs on standard hardware, allowing users to define custom scenarios for testing agent responses. In the HN post, Nyx handled sequences of up to 10 turns per test, identifying flaws in agent decision-making.

HN Community Feedback

The HN discussion received 20 points and 8 comments, indicating moderate interest. Comments praised Nyx for addressing AI security gaps, with one user noting it could simulate real-world exploits effectively. Critics raised concerns about potential misuse, such as in creating malicious agents, while others questioned integration ease.

Bottom line: Nyx provides a practical way to stress-test AI agents, potentially reducing security risks in deployment.

"Technical Context"

Nyx uses adaptive algorithms to evolve tests based on agent outputs, similar to reinforcement learning setups. Early users reported setup times under 5 minutes on a standard laptop, with tests completing in seconds per turn.

Why This Matters for AI Developers

Offensive testing tools like Nyx fill a gap in AI development, where agents often lack robust security evaluations. Traditional frameworks require 10-20 GB of VRAM for complex simulations, but Nyx operates with lower resources, making it accessible. For developers, this means faster iteration on agent safety, especially in high-stakes fields like finance or healthcare.

Bottom line: By enabling adaptive offensive tests, Nyx could standardize security practices, preventing issues seen in past AI failures.

In a field where AI vulnerabilities lead to real costs—such as data breaches costing companies an average of $4.45 million per incident—tools like Nyx pave the way for more resilient agent designs.

Top comments (0)