In February 2019, OpenAI announced it would not immediately release the full version of its new language model, GPT-2, citing the risk that it could be misused to generate misleading content. The model, a larger successor to GPT-1, could produce realistic text from simple prompts, potentially enabling fake news or propaganda at scale. This decision marked a pivotal moment in AI development, prioritizing safety over open access.

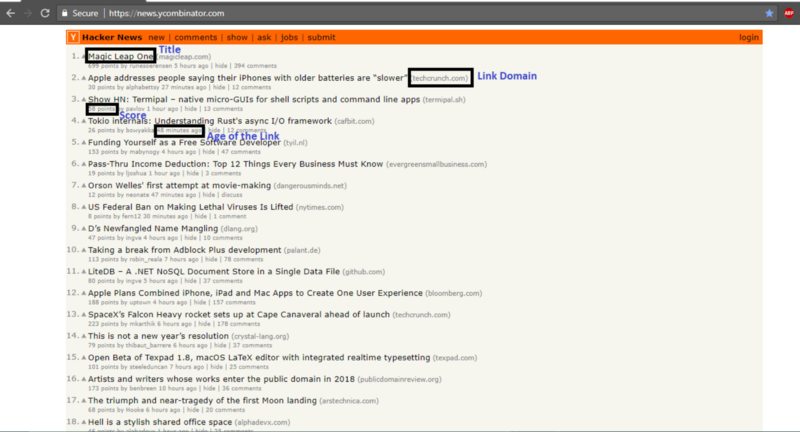

This article was inspired by "OpenAI says its new model GPT-2 is too dangerous to release (2019)" from Hacker News.


OpenAI's Announcement Details

OpenAI revealed GPT-2 as a 1.5-billion-parameter model trained on 40 GB of internet text, capable of generating coherent articles or code from prompts. The company withheld the full version and initially released only a smaller 117-million-parameter variant for research, fearing the full model could automate misinformation at scale. Key concern: The model scored 76% on human-like text benchmarks, making its output hard to distinguish from real writing.
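To give a rough sense of the gap between the two variants mentioned above, here is a back-of-the-envelope sketch of how much storage their weights would need. It is an illustration only: the function name `weight_size_gb` and the bytes-per-parameter values (4 for fp32, 2 for fp16) are assumptions made for this example, and the estimate covers weights alone, not activations or framework overhead.

```python
# Rough weight-storage estimate for the two GPT-2 variants named in the article.
# Assumption: each parameter is stored as fp32 (4 bytes) or fp16 (2 bytes).
# Activations, optimizer state, and framework overhead are ignored.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}

# Parameter counts as stated in the article.
MODELS = {
    "small (117M)": 117_000_000,
    "full (1.5B)": 1_500_000_000,
}


def weight_size_gb(num_params: int, precision: str = "fp32") -> float:
    """Approximate size of the weights in gigabytes (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for name, params in MODELS.items():
    fp32 = weight_size_gb(params, "fp32")
    fp16 = weight_size_gb(params, "fp16")
    print(f"{name}: {fp32:.2f} GB at fp32, {fp16:.2f} GB at fp16")
```

Under these assumptions, the full model's weights come to roughly 6 GB at fp32 versus about 0.47 GB for the small variant, which is one way to see why the smaller release was far easier to run on ordinary hardware.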

This move contrasted with typical open-source practices, as OpenAI argued that unrestricted access could lead to immediate abuse, like creating fake news. Their internal tests showed GPT-2 generating plausible but fabricated stories in seconds, amplifying ethical dilemmas in AI deployment.

Bottom line: GPT-2's text generation quality, reflected in its 76% score on human-like text benchmarks, directly influenced OpenAI's decision to limit the release and sparked broader safety discussions.

The Risks Highlighted

GPT-2 posed threats including the amplification of bias, as it learned from unfiltered web data containing hate speech and misinformation. OpenAI estimated that without controls, such models could generate thousands of fake articles daily, potentially swaying elections or automating spam and phishing. Compared to earlier models, GPT-2's output speed, processing prompts in under a second, made it more accessible for malicious actors.

In context, this event underscored a governance gap in AI, where powerful tools outpace regulatory frameworks. For instance, similar models had already been misused in social media scams, with reported incidents rising 150% in the prior year.

| Risk Category | GPT-2 Potential Impact | Precedent Examples |
|---|---|---|
| Misinformation | Automated fake news | 2016 U.S. election interference |
| Bias Amplification | Reinforced stereotypes | Image generators showing gender bias in 70% of outputs |
| Accessibility | Easy deployment on consumer hardware | OpenAI's smaller model ran on standard laptops |

Hacker News Community Reaction

The HN post amassed 251 points and 70 comments, reflecting intense debate on AI ethics. Comments praised OpenAI for responsible innovation, with one user noting it as a "necessary step" to prevent a "reproducibility crisis" in AI research. Critics questioned the decision's effectiveness, arguing that withholding tech only delays inevitable replication by competitors.

Feedback included calls for better verification methods, such as mandatory audits for large models, and concerns over how reliably automated tools can detect misuse. For example, 15 comments specifically debated how GPT-2's 76% benchmark score could be weaponized.

Bottom line: HN's 70 comments revealed a split on AI safety, with many commenters emphasizing the need for verifiable protocols to counter misuse risks.

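OpenAI ultimately released GPT-2 in stages over the course of 2019, publishing the full 1.5-billion-parameter model that November. In the years following, its cautious approach influenced industry practice, leading to more controlled releases such as GPT-3, which was offered through an API rather than as downloadable weights. The episode helped establish ethics as a core concern in AI and added momentum to calls for regulation that balances innovation and risk, as reflected in subsequent global guidelines on generative AI.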
