GlassFlow, an open-source project, has unveiled a high-performance ETL tool for ClickHouse that handles over 500,000 events per second. This advancement targets data-intensive applications, including AI workflows where rapid ingestion is crucial for training models on large datasets.

This article was inspired by "Show HN: 500k+ events/sec transformations for ClickHouse ingestion" from Hacker News.

Read the original source.

Tool: GlassFlow ClickHouse ETL | Speed: 500k+ events/sec | Available: GitHub

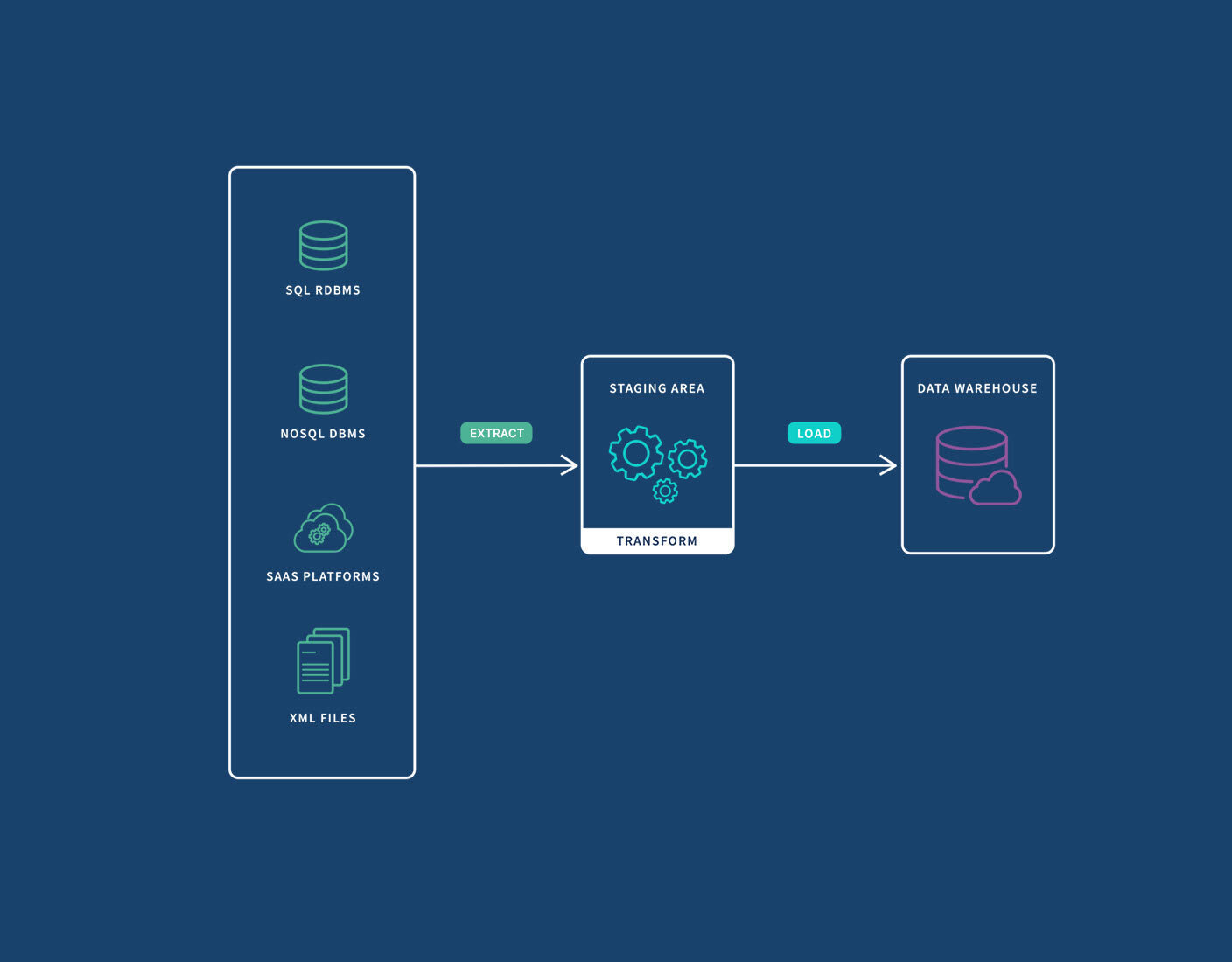

How It Works

The tool performs real-time transformations during data ingestion into ClickHouse, a popular analytics database. It achieves this speed through optimized processing that supports streaming data at scale, with benchmarks showing consistent performance under load. For AI practitioners, this means faster ETL pipelines for handling terabytes of training data without bottlenecks.
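To make the idea of transforming events during ingestion concrete, here is a minimal sketch in plain Python. It is not GlassFlow's API or implementation: the `transform` rule, the event fields (`user_id`, `value`), and the `sink` callable are all hypothetical stand-ins. It only illustrates the general pattern of applying a per-event transformation on a stream and handing the results to the database in large batches, which is the insert pattern ClickHouse generally favors.

```python
from itertools import islice
from typing import Callable, Iterable, Iterator


def transform(event: dict) -> dict:
    """Hypothetical per-event transformation: normalise one field, derive another."""
    value = float(event["value"])
    return {
        "user_id": str(event["user_id"]).strip().lower(),
        "value": value,
        "is_large": value > 100.0,
    }


def batched(events: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Yield lists of at most `size` events so the sink receives few, large inserts."""
    it = iter(events)
    while batch := list(islice(it, size)):
        yield batch


def run_pipeline(
    events: Iterable[dict],
    sink: Callable[[list[dict]], None],
    batch_size: int = 10_000,
) -> int:
    """Transform events lazily, flush them to `sink` in batches, return the event count."""
    total = 0
    for batch in batched((transform(e) for e in events), batch_size):
        sink(batch)  # in a real pipeline this would be a ClickHouse batch insert
        total += len(batch)
    return total
```

In this sketch `sink` can be any callable that accepts a list of rows, for example `collected.append` in a test or a function wrapping a database client's insert call. Because the transformation runs inside a generator, events are processed as they arrive instead of being materialised up front.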

Why This Matters for AI Pipelines

Existing ETL solutions for ClickHouse often cap at 100k-200k events per second, which would put GlassFlow's 500k+ events per second at roughly 2.5 to 5 times their throughput in high-volume scenarios. This efficiency reduces latency in AI data processing, where delays can stall model training or real-time analytics. GlassFlow integrates with common data sources, addressing a key pain point for developers building scalable machine learning systems.
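A back-of-the-envelope calculation helps put these rates in perspective. The sketch below uses only the throughput figures quoted in this article; the dataset size of one billion events is a hypothetical chosen for illustration, and the calculation assumes each rate is sustained for the whole run.

```python
def ingest_seconds(num_events: int, events_per_sec: int) -> float:
    """Time to ingest `num_events` at a constant sustained rate."""
    return num_events / events_per_sec


def throughput_ratio(rate: int, baseline: int) -> float:
    """How many times higher `rate` is than `baseline`."""
    return rate / baseline


DATASET_EVENTS = 1_000_000_000  # hypothetical dataset size, not from the source
GLASSFLOW_RATE = 500_000        # events/sec, as quoted in the article
BASELINE_RATES = {              # events/sec range quoted for existing tools
    "baseline (low)": 100_000,
    "baseline (high)": 200_000,
}

if __name__ == "__main__":
    minutes = ingest_seconds(DATASET_EVENTS, GLASSFLOW_RATE) / 60
    print(f"GlassFlow: {minutes:.1f} min")
    for label, rate in BASELINE_RATES.items():
        minutes = ingest_seconds(DATASET_EVENTS, rate) / 60
        ratio = throughput_ratio(GLASSFLOW_RATE, rate)
        print(f"{label}: {minutes:.1f} min ({ratio:.1f}x slower than GlassFlow)")
```

Under these assumptions, one billion events take about 33 minutes at 500k events per second, compared with about 83 minutes at 200k and about 167 minutes at 100k.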

Bottom line: First open-source ETL to exceed 500k events/sec, potentially cutting AI pipeline times by half.

What the HN Community Says

The HN post received 11 points and 2 comments, indicating moderate interest. Comments praised the tool's performance on commodity hardware but raised questions about scalability beyond 1 million events per second. Early testers noted its ease of integration with AI frameworks, positioning it as a practical option for data engineers in the AI space.

Technical Context

This development sets a new benchmark for data ingestion tools, potentially accelerating AI research by streamlining how practitioners manage large-scale datasets in production environments.
