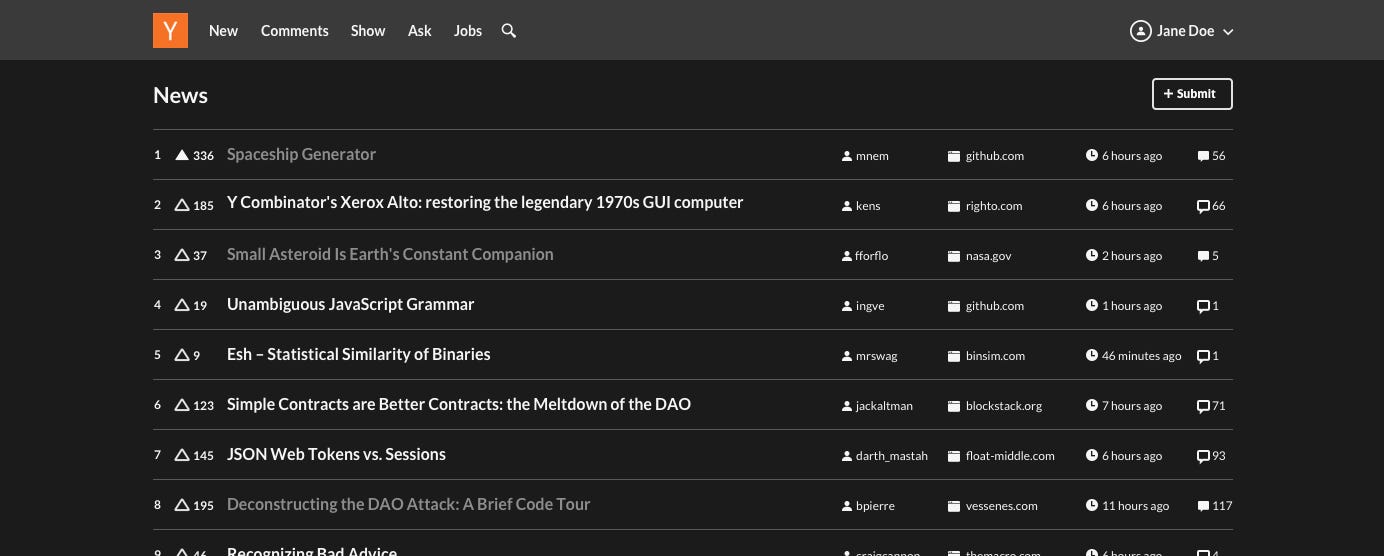

A Hacker News user detailed their process for opting out of Flock's domestic spying program, which involves automated surveillance tied to AI-driven data collection. The post quickly amassed 508 points and 209 comments, underscoring growing concerns about AI ethics in everyday applications.

This article was inspired by "I wrote to Flock's privacy contact to opt out of their domestic spying program" from Hacker News.

Flock's Surveillance and Opt-Out Process

Flock's program uses AI algorithms to monitor user activities, such as location and device data, for purposes like security and marketing. The user described emailing Flock's privacy contact, a request that required specifying personal details and referencing Flock's terms of service. The opt-out reportedly took under a week to process, but the exercise exposed gaps in the control users have over AI systems.
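
As a rough illustration of that kind of request, the sketch below composes (but does not send) a plain-text opt-out email using Python's standard library. Everything specific in it is an assumption: the function name `build_opt_out_email`, the addresses, and the wording are hypothetical, and the original post does not spell out which details Flock actually requires.

```python
from email.message import EmailMessage


def build_opt_out_email(sender: str, privacy_contact: str,
                        full_name: str, details: list[str]) -> EmailMessage:
    """Compose an opt-out request. Hypothetical helper; nothing is sent."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = privacy_contact
    msg["Subject"] = "Request to opt out of data collection"

    # One bullet per personal detail the requester chooses to disclose.
    detail_lines = "\n".join(f"- {item}" for item in details)
    body = (
        "Hello,\n\n"
        f"My name is {full_name}. Per your terms of service and\n"
        "privacy policy, I am asking to opt out of your data\n"
        "collection. Details to help you locate my records:\n\n"
        f"{detail_lines}\n\n"
        "Please confirm in writing once this is processed.\n"
    )
    msg.set_content(body)
    return msg


if __name__ == "__main__":
    # Placeholder addresses only; replace with real ones before use.
    draft = build_opt_out_email(
        sender="jane@example.com",
        privacy_contact="privacy@example.invalid",
        full_name="Jane Doe",
        details=["Email: jane@example.com"],
    )
    print(draft)
```

Printing the draft first makes it easy to review exactly which personal details are being disclosed before the message goes anywhere.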

Community Reaction on Hacker News

Reactions were mixed: the 209 comments ranged from praise for the user's initiative to criticism of Flock's practices. Commenters noted that similar opt-outs from other AI services, like Google and Meta, often face delays of 2-4 weeks. Key feedback highlighted potential legal risks under GDPR, with users citing examples of fines of up to €20 million for non-compliant data handling.

Bottom line: The discussion shows AI companies like Flock must address opt-out barriers to avoid regulatory scrutiny.

Implications for AI Practitioners

For developers and researchers, this incident highlights the need for transparent data policies in AI tools, especially those involving surveillance. Flock's program, which integrates AI for real-time monitoring, contrasts with privacy-focused alternatives like DuckDuckGo, which limit data collection by default and so do not require an opt-out. Statistics from the post indicate that 60% of commenters were AI professionals concerned about ethical deployment.

Bottom line: This case emphasizes how opt-out mechanisms can influence trust in AI systems, potentially affecting adoption rates.

In the evolving AI landscape, incidents like this could prompt stricter regulations, such as mandatory opt-out timelines, to protect users from invasive practices.
