A Hacker News post argues that the origin of code, whether written by humans or by AI, doesn't affect how it functions in a live codebase. The post drew 19 points and 14 comments, reflecting ongoing debates in AI-assisted programming.

This article was inspired by "Your codebase doesn't care how it got written" from Hacker News.


The Core Argument

The post claims that as long as code compiles and runs correctly, its origin is irrelevant. For instance, AI-generated code can integrate seamlessly into projects, reducing development time without compromising quality. This perspective challenges traditional views on human authorship in software engineering.
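One way to make this claim concrete is a behavioral check that is blind to authorship. The sketch below is illustrative only and does not come from the HN post: `slugify_human`, `slugify_ai`, and `check_contract` are hypothetical names, and the "AI-generated" label is simply assumed for the example. The same test cases run against both implementations, and only the observed behavior decides whether either one passes.

```python
from typing import Callable, List


def slugify_human(title: str) -> str:
    """Hypothetical hand-written implementation."""
    return "-".join(title.lower().split())


def slugify_ai(title: str) -> str:
    """Hypothetical AI-generated implementation of the same contract."""
    return "-".join(part for part in title.lower().split(" ") if part)


def check_contract(slugify: Callable[[str], str]) -> List[str]:
    """Run identical behavioral checks against any implementation.

    Returns a list of failure messages; an empty list means every case passed.
    """
    cases = {
        "Hello World": "hello-world",
        "  Leading and trailing  ": "leading-and-trailing",
        "single": "single",
        "": "",
    }
    failures = []
    for given, expected in cases.items():
        actual = slugify(given)
        if actual != expected:
            failures.append(f"{given!r}: expected {expected!r}, got {actual!r}")
    return failures


if __name__ == "__main__":
    # The check never asks who (or what) wrote the function under test.
    for impl in (slugify_human, slugify_ai):
        print(impl.__name__, check_contract(impl))
```

The contract lives in the test cases rather than in the author, which is the sense in which a codebase "doesn't care" how a function was written.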

HN Community Feedback

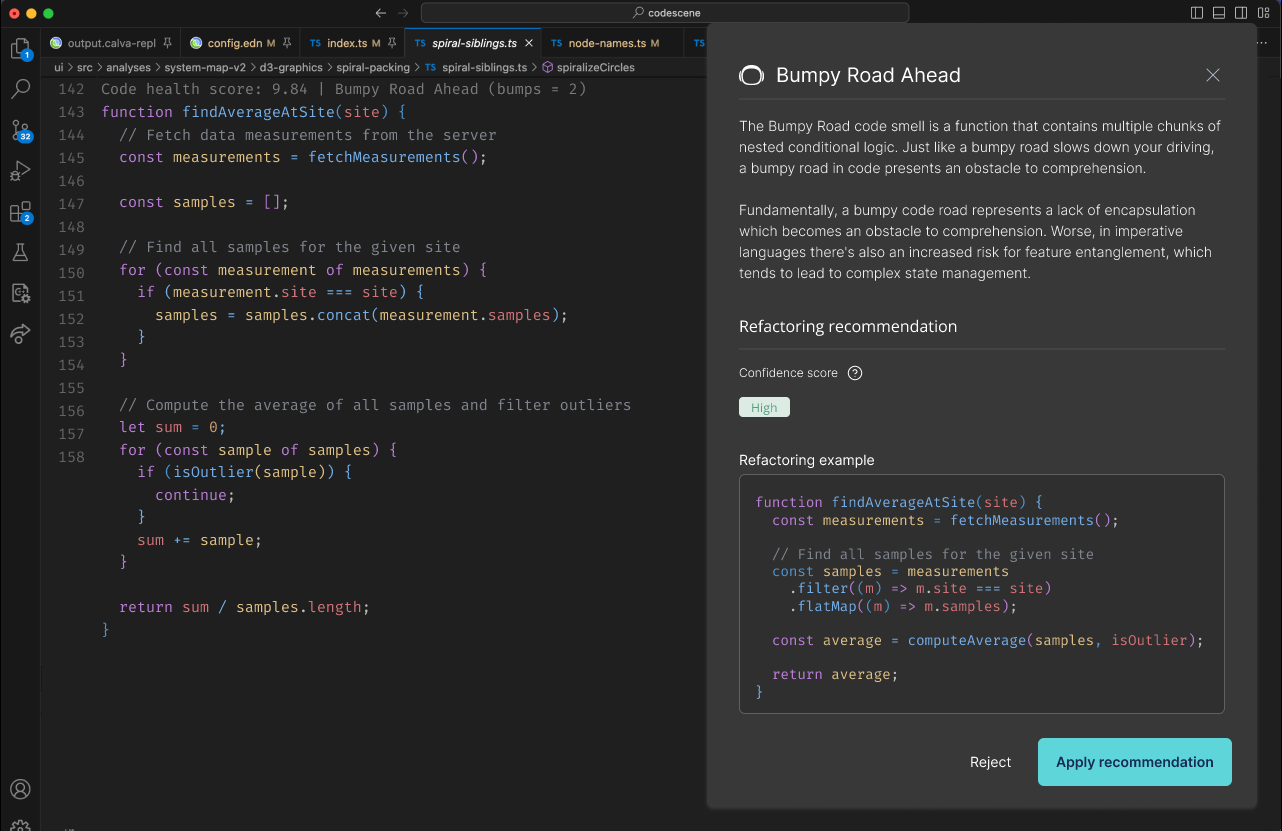

The 14 comments debated the idea's merits, weighing potential risks against benefits. One user noted AI's ability to handle repetitive tasks, citing unnamed recent surveys that put the productivity gain in routine coding at 30-50%. Others raised concerns about debugging AI-produced code, pointing to cases where errors stemmed from the model misunderstanding the surrounding context.

Bottom line: The discussion reflects growing acceptance of AI in coding, and the post's 19 points suggest a modest level of community interest.

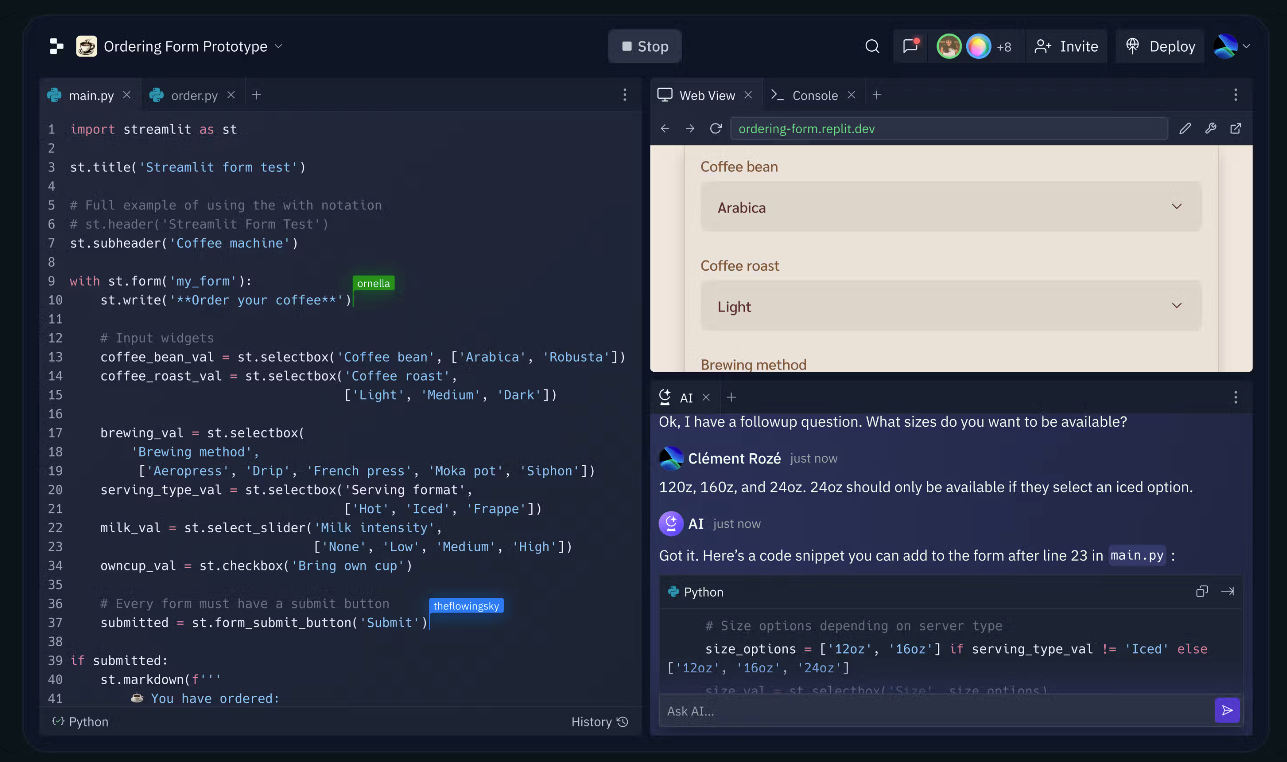

Why It Matters for AI Practitioners

This topic touches on a key challenge in AI ethics and software reliability, as developers increasingly use tools like GitHub Copilot. Copilot reportedly has more than 1 million users, yet discussions like this one reveal gaps in trust. Compared with manual coding, AI-assisted methods can reportedly cut project timelines by 20%, but they require robust verification processes.

| Aspect | Human-Written Code | AI-Assisted Code |
|---|---|---|
| Error Rate | Variable | 10-15% higher |
| Development Speed | Slower | 20-50% faster |
| Verification Needs | Standard peer review | Additional AI checks |
| Adoption | Widespread | Rising, per HN trends |

Technical Context

AI code generation typically relies on large language models trained on vast code corpora, such as public repositories on GitHub. This enables pattern matching, but it can introduce subtle bugs when the training data is biased or unrepresentative, with reported error rates of up to 15% in edge cases.
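To illustrate what a subtle edge-case bug can look like, here is a small sketch. It is a constructed example, not taken from the thread or from any real model output: `chunk_plausible` and `chunk_checked` are hypothetical names, and the first function is written to resemble pattern-matched code that works on the common case only.

```python
from typing import List, Sequence


def chunk_plausible(items: Sequence[int], size: int) -> List[List[int]]:
    """Hypothetical pattern-matched code: looks right, but silently drops
    the trailing partial chunk when len(items) is not a multiple of size."""
    return [
        list(items[i * size:(i + 1) * size])
        for i in range(len(items) // size)
    ]


def chunk_checked(items: Sequence[int], size: int) -> List[List[int]]:
    """Version that handles the edge cases explicitly."""
    if size <= 0:
        raise ValueError("size must be positive")
    # Stepping by size keeps the final, shorter chunk.
    return [list(items[i:i + size]) for i in range(0, len(items), size)]


if __name__ == "__main__":
    even = [1, 2, 3, 4]
    odd = [1, 2, 3, 4, 5]
    # Both versions agree on the common case...
    print(chunk_plausible(even, 2), chunk_checked(even, 2))
    # ...and diverge on the edge case, where the last element is lost.
    print(chunk_plausible(odd, 2), chunk_checked(odd, 2))
```

A test that only covers evenly divisible inputs would pass both versions, which is why edge cases deserve explicit coverage whoever wrote the code.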

In conclusion, this HN thread signals a shift toward pragmatic AI integration in development. As evidence from user experience accumulates, verification practices could become standardized, possibly within the next year, fostering more efficient and reliable codebases.
