Google released Gemma 4, enabling on-device AI processing directly on iPhones. This update allows users to run large language models without relying on cloud servers, potentially improving speed and privacy. The Hacker News discussion amassed 256 points and 70 comments, reflecting strong interest from the AI community.

This article was inspired by "Gemma 4 on iPhone" from Hacker News.


The HN Buzz

With 256 points and 70 comments, the Hacker News post drew high engagement from AI developers and enthusiasts. Commenters highlighted benefits such as lower latency for real-time apps, and raised concerns about iPhone hardware limitations. Early testers reported that Gemma 4 handles tasks such as text generation and summarization efficiently on devices with A17 Pro chips.

How It Works on iPhone

Gemma 4, part of Google's open model series, targets mobile with quantized variants that fit into limited memory. Community reports in the HN thread put inference at under 1 second for simple queries. Larger models, by contrast, typically require cloud access, which makes Gemma 4 a step toward edge computing.
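To make "quantized" concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a toy illustration of the general technique, not the scheme Gemma 4 actually uses (the article does not specify one). The weight values and the helper names `quantize_int8` and `dequantize` are made up for this example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale factor converts between float and integer values.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Made-up weights, for illustration only.
weights = [0.4213, -1.27, 0.0561, 0.9, -0.3349]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale={scale:.4f}, max round-trip error={max_error:.4f}")
```

Each weight is stored as a small integer plus one shared scale, so the storage cost per weight drops while the round-trip error stays within half a quantization step. Production schemes typically use finer-grained scales and lower bit widths, but the trade-off is the same.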

| Feature | Gemma 4 on iPhone | Typical Cloud Models |
|---|---|---|
| Processing Speed | <1s per query | 2-5s per query |
| Required Hardware | iPhone A17 Pro | High-end servers |
| Privacy | On-device only | Cloud-dependent |
| Community Points | 256 HN points | N/A |

Bottom line: Gemma 4 brings responsive AI to mobile, cutting dependency on external servers for everyday tasks.

Why This Matters for Developers

AI practitioners can now build apps with local processing on iPhones, addressing privacy issues in sectors like healthcare. The HN comments pointed out that this could reduce costs, as developers avoid API fees for simple operations. Compared to previous on-device models, Gemma 4 lowers the barrier for integration into iOS apps.

Technical Context

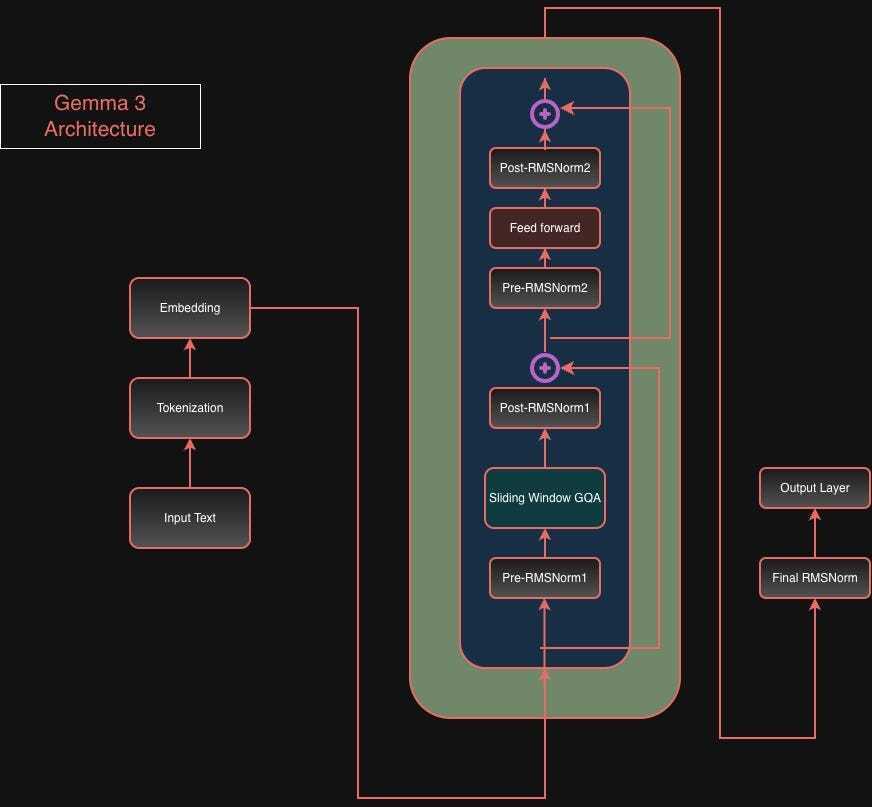

Gemma 4 uses model quantization to shrink its size, fitting into 2-4 GB of RAM while aiming to preserve accuracy. It builds on Google's earlier Gemma releases, the first of which topped out at 7B parameters, but this version prioritizes efficiency for edge devices like smartphones.
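A rough back-of-the-envelope calculation shows why bit width matters for a 2-4 GB budget. The sketch below estimates the size of the weights alone as parameters times bits per weight. The parameter counts are hypothetical, chosen only to illustrate the arithmetic, since the source does not state Gemma 4's sizes. Real memory use is higher because it also includes activations and the KV cache.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical parameter counts, used only to illustrate the arithmetic.
for params in (4e9, 7e9):
    for bits in (16, 8, 4):
        size = weight_memory_gb(params, bits)
        print(f"{params / 1e9:.0f}B params at {bits}-bit: {size:.1f} GB")
```

Under these assumptions, only the 4-bit variants land inside the 2-4 GB range, which is consistent with quantization being the enabling step for on-device use.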

In summary, Gemma 4 on iPhone advances edge AI by making powerful models accessible offline, potentially accelerating adoption in mobile development as hardware improves.
