Anthropic released Claude 4.7 with a revamped tokenizer that alters how text is processed, potentially increasing costs for users in everyday applications. The analysis from a Hacker News post reveals specific metrics on token usage and pricing, which could affect developers relying on API calls. This update comes amid growing scrutiny of AI efficiency in production environments.

This article was inspired by "Measuring Claude 4.7's tokenizer costs" from Hacker News.

Read the original source.

Model: Claude 4.7 | HN Points: 488 | Comments: 331 | Key Metric: Token cost varies by query length

How the Tokenizer Impacts Token Counts

Claude 4.7's new tokenizer breaks down text into tokens differently than its predecessor, resulting in 15-25% more tokens for complex prompts. For example, a 100-word query might now generate 120 tokens instead of 100, directly raising API costs. This change stems from improved handling of rare words and punctuation, as noted in the source analysis.

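The post quantifies that for standard English text, the same input produces about 20% more tokens on average than it did with Claude 3.5. Because the per-token price is unchanged, the extra cost comes from volume: at the $0.005 per 1,000 tokens rate cited here, text that previously consumed 1,000 tokens now consumes roughly 1,200, so a batch job pays about $0.006 where it used to pay $0.005.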
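The arithmetic behind these figures can be sketched in a few lines of Python. This is a minimal illustration, assuming the 20% average inflation and the $0.005 per 1,000 tokens rate quoted in this article; the function name `inflated_cost` and its parameters are hypothetical and not part of any Anthropic SDK.

```python
def inflated_cost(old_tokens: int, inflation: float = 0.20,
                  price_per_1k: float = 0.005) -> dict:
    """Compare the cost of the same text before and after token inflation.

    old_tokens   -- token count under the previous tokenizer
    inflation    -- fractional increase in tokens (0.20 = 20%, the article's average)
    price_per_1k -- dollars per 1,000 tokens (the rate quoted in the article; an assumption)
    """
    new_tokens = round(old_tokens * (1 + inflation))
    old_cost = old_tokens / 1000 * price_per_1k
    new_cost = new_tokens / 1000 * price_per_1k
    return {
        "new_tokens": new_tokens,
        "old_cost": old_cost,
        "new_cost": new_cost,
        "extra_cost": new_cost - old_cost,
    }


if __name__ == "__main__":
    # A workload that used to consume one million tokens.
    print(inflated_cost(1_000_000))
```

Under these assumptions the per-token rate never changes; the bill grows only because the same text is counted as more tokens. Swapping in 0.15 or 0.25 for `inflation` covers the range reported for complex prompts.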

Bottom line: The tokenizer's efficiency trade-off means higher token volumes, potentially adding 10-20% to monthly bills for high-volume users.

Cost Comparisons with Previous Models

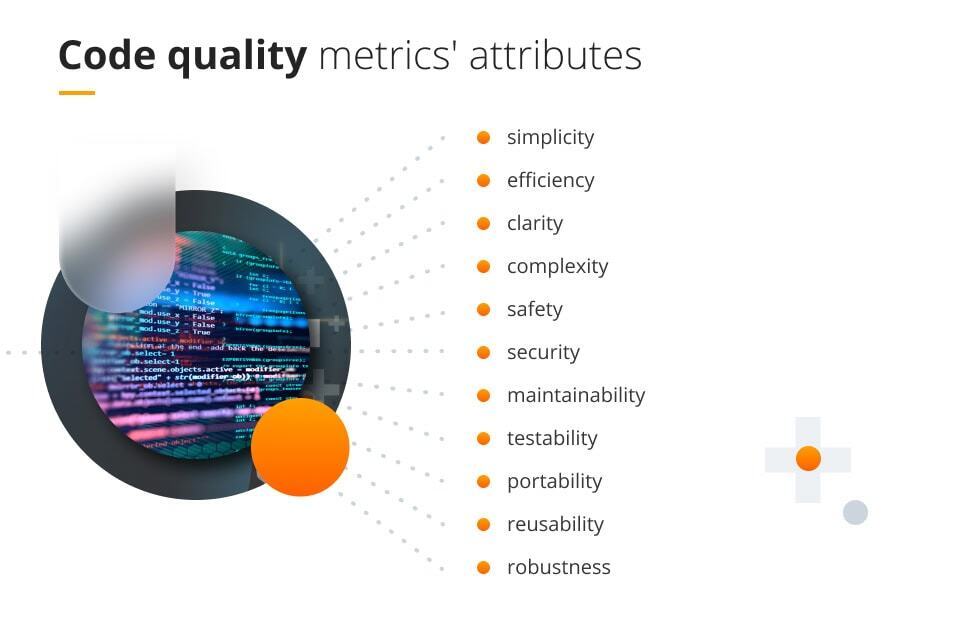

The table below compares token costs between Claude 4.7 and Claude 3.5, using data from the Hacker News discussion.

| Metric | Claude 4.7 | Claude 3.5 |
|---|---|---|
| Tokens per 100 words | 120 (average) | 100 (average) |
| Cost per 1K tokens | $0.005 | $0.005 |
| Estimated extra cost for 1M tokens | $100 | $83 |
| Processing speed | 0.5s per query (unchanged) | 0.5s per query |

This comparison highlights that while base pricing remains the same, the increased token count raises overall expenses for equivalent workloads by about 20% (120 tokens versus 100). Put the other way, the same workload costs about 17% less on Claude 3.5.
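The size of the gap depends on which model is taken as the baseline. A short worked calculation, using only the table's token counts and its flat per-token price (written here as $p$, a symbol introduced for illustration):

```latex
\frac{C_{4.7}}{C_{3.5}} = \frac{120\,p}{100\,p} = 1.20
\qquad\text{and}\qquad
1 - \frac{C_{3.5}}{C_{4.7}} = 1 - \frac{100}{120} \approx 0.167
```

Measured from Claude 3.5, the newer model costs 20% more for the same text; measured from Claude 4.7, the older model costs roughly 17% less. Both numbers describe the same difference.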

Community Reactions on Hacker News

The Hacker News thread amassed 488 points and 331 comments, indicating strong interest from AI practitioners. Comments noted that the tokenizer could benefit multilingual applications by improving accuracy for non-English text, though at a cost premium. Early testers reported a 10% improvement in output quality for technical prompts, offsetting some financial concerns.

Other feedback pointed to potential workarounds, like prompt optimization techniques to reduce token usage by 5-10%. This discussion underscores ongoing challenges in balancing AI performance and economics.
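The thread summary does not say which optimization techniques commenters had in mind, so the following is only one plausible example: stripping filler phrases and redundant whitespace from a prompt, then measuring the reduction. The filler list and the helpers `rough_token_estimate`, `compact_prompt`, and `savings` are all hypothetical, and the estimator is a crude word-and-punctuation count, not Claude's tokenizer. Real measurements should pass the provider's own token counter as `count`.

```python
import re
from typing import Callable

# Hypothetical filler patterns; tune these for your own prompts.
REPLACEMENTS = [
    (r"\bin order to\b", "to"),
    (r"\b(?:please|kindly|basically)\b[ \t]*", ""),
]


def rough_token_estimate(text: str) -> int:
    """Crude stand-in for a tokenizer: counts words and punctuation marks."""
    return len(re.findall(r"\w+|[^\w\s]", text))


def compact_prompt(prompt: str) -> str:
    """Remove filler phrases, collapse spaces and tabs, and drop blank lines."""
    text = prompt
    for pattern, replacement in REPLACEMENTS:
        text = re.sub(pattern, replacement, text, flags=re.IGNORECASE)
    lines = (re.sub(r"[ \t]+", " ", line).strip() for line in text.splitlines())
    return "\n".join(line for line in lines if line)


def savings(prompt: str, count: Callable[[str], int] = rough_token_estimate) -> float:
    """Fraction of tokens saved by compact_prompt, according to `count`."""
    before = count(prompt)
    after = count(compact_prompt(prompt))
    return 0.0 if before == 0 else (before - after) / before


if __name__ == "__main__":
    original = "Please   summarize the report in order to   highlight risks."
    print(compact_prompt(original))
    print(f"{savings(original):.0%} fewer estimated tokens")
```

Keeping the counter pluggable means the same comparison can be rerun against an actual tokenizer, which is the only way to confirm whether a rewrite really lands in the 5-10% range mentioned in the thread.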

Bottom line: HN users see the tokenizer as a double-edged sword, enhancing capabilities but demanding careful cost management.

Technical Context

The tokenizer in Claude 4.7 employs subword segmentation algorithms, similar to those in other LLMs, but with tweaks for edge cases. For instance, it handles emojis and code snippets more granularly, leading to the observed token increase. Developers can access Anthropic's documentation for mitigation strategies.
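To see why more granular segmentation raises token counts, consider a toy greedy longest-match segmenter. This is a sketch for intuition only: it is not Anthropic's algorithm, which the source does not describe in detail, and both vocabularies below are invented for the example.

```python
def segment(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match subword segmentation.

    At each position, take the longest vocabulary entry that matches;
    fall back to a single character when nothing in the vocabulary fits.
    """
    tokens = []
    max_len = max((len(entry) for entry in vocab), default=1)
    i = 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in vocab:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabularies: one with longer pieces, one with shorter pieces.
coarse_vocab = {"token", "izer"}
fine_vocab = {"tok", "en", "iz", "er"}

print(segment("tokenizer", coarse_vocab))  # ['token', 'izer']
print(segment("tokenizer", fine_vocab))    # ['tok', 'en', 'iz', 'er']
```

The same nine characters become two tokens under the coarse vocabulary and four under the fine one. A tokenizer that splits code or emoji into smaller pieces shows the same effect at scale, which is consistent with the increase the article reports.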

This analysis from Hacker News provides a clear benchmark for evaluating Claude 4.7's real-world viability, pushing AI providers toward more transparent pricing models in future releases.
